# Load necessary packages

library(tidyverse)

library(readxl)

library(dynlm)

library(urca)

library(modelsummary)

options("modelsummary_factory_default" = "gt")

library(gridExtra)

library(knitr)

library(forecast)

library(zoo)

library(xts)

library(tsibble)

library(car)24 Introduction to Time Series Regression and Forecasting

24.1 Introduction

In this chapter, we cover the basic concepts of univariate time series methods. We first show how the time series data can be handled in R. We then cover basic terminology and concepts, such as lags, leads, differences, growth rates, and annualized growth rates. We define autocorrelation, strict and weak stationarity, forecasting, forecast error, and mean squared forecast error (MSFE).

We introduce two univariate time series models: the autoregressive (AR) model, and the autoregressive distributed lag (ARDL) model. For these models, we show how the number of lags can be selected by using information criteria. We specify assumptions under which we can use the OLS estimator for the estimation of these models.

We introduce three method for the estimation of MSFE: (i)the standard error of the regression (SER) estimator, (ii) the final prediction error (FPE) estimator, and (iii) the pseudo out-of-sample (POOS) forecasting estimator. We explain how nonstationarity can arise from deterministic and stochastic trends, as well as from structural breaks. We then discuss how stochastic trends in a time series can be detected using unit root tests. We also discuss how structural breaks can be detected and how to mitigate the problems they cause.

Finally, we conclude this chapter by introducing autoregressive-moving average (ARMA) and autoregressive-integrated-moving average (ARIMA) models.

24.2 Time Series Data

Time series data refer to data collected on a single entity at multiple points in time. Some examples are: (i) aggregate consumption and GDP for a country over time, (ii) annual cigarette consumption per capita in Florida, and (iii) the daily Euro/Dollar exchange rate. In Definition 24.1, we provide a formal definition of time series data.

Definition 24.1 (Time series data) Let \(Y\) denote the time series of interest. The sample data on \(Y\) consist of the realizations of the following process: \[ \{Y_t:\Omega \mapsto \mathbb{R}, t=1,2,\dots\}, \tag{24.1}\] where \(\Omega\) is some underlying suitable probability space.

According to Definition 24.1, \(Y_t\) is the \(t\)-th coordinate of the random variable \(Y\). Thus, a sample of \(T\) observations on \(Y\) is simply the collection \(\{Y_1, Y_2,\dots,Y_T\}\).

From here on, we will consider only consecutive, evenly-spaced observations (for example, quarterly observations from 1962 to 2004, with no missing quarters). Missing and unevenly spaced data introduce complications, which we will not consider in this chapter.

In economics, time series data are mainly used for (i) forecasting, (ii) estimating dynamic causal effects, and (iii) modeling risk. In forecasting exercises, we place greater emphasis on the model’s goodness-of-fit; however, avoiding overfitting is crucial for generating reliable out-of-sample forecasts. In these exercises, omitted variable bias is less of a concern, as interpreting coefficients is not the primary focus. Instead, external validity is essential because we want models estimated using historical data to remain applicable in the near future. In the case of estimating dynamic causal effects, emphasis is placed on the causal interpretation of a model’s coefficients and on the validity of the assumptions required for such interpretation. Specifically, we require that the variable of interest is exogenous in the sense that the expectation of the error term conditional on the present and past values of the variable of interest is zero, i.e., the variable of interest is past and present exogenous. In modeling risk, we focus on models that describe how the conditional variance of an outcome variable evolves over time.

24.3 Time Series Objects in R

We need to represent time series data as time series objects in R. There are different packages that we can use to create time series objects. Below, we list some of the most commonly used packages for handling time series data in R.

ts: This base R package provides thetsfunction to create time series objects. Thetsclass is suitable for regular time series data, such as monthly, quarterly or yearly data.zoo: This package provides thezooclass for handling regular and irregular time series data.xts: This package extends thezoopackage for financial time series and provides thextsclass. It is designed for high-frequency data and provides additional functionality for time-based indexing and manipulation.tsibble: This package provides thetsibbleclass for handling time series data in a tidy format. It integrates well with thetidyversepackages.

24.3.1 Sample Data

To illustrate these time series objects, we consider quarterly macroeconomic variables from the us_macro_quarterly.xlsx file. The file includes the following variables:

GDPC96: Real gross domestic productJAPAN_IP: Total industrial production in Japan (FRED series name: JPNPROINDQISMEI)PCECTPI: Personal consumption expenditures, chain-type price indexGS10: 10-year Treasury constant maturity rate (quarterly average of monthly values)GS1: 1-year Treasury constant maturity rate (quarterly average of monthly values)TB3MS: 3-month Treasury bill, secondary market rate (quarterly average of monthly values)UNRATE: Civilian unemployment rate (quarterly average of monthly values)EXUSUK: U.S.–U.K. foreign exchange rate (quarterly average of daily values)CPIAUCSL: Consumer price index for all urban consumers, all items (quarterly average of monthly values)

# Import data

data <- read_excel(

"data/us_macro_quarterly.xlsx",

sheet = 1,

col_names = TRUE,

skip = 0

)

# Column names

colnames(data) [1] "Quarter" "GDPC96" "JAPAN_IP" "PCECTPI" "GS10" "GS1"

[7] "TB3MS" "UNRATE" "EXUSUK" "CPIAUCSL"24.3.2 The ts class

The ts function is from the stats package and it is used for equispaced data. The observations of equispaced time series are collected at regular points in time. Typical examples are daily, monthly, quarterly, or yearly data. The syntax of the ts function is

ts(

data = NA,

start = 1,

end = numeric(),

frequency = 1,

deltat = 1,

ts.eps = getOption("ts.eps"),

class = ,

names =

)The important arguments of the ts function are:

data: a vector or matrix (data frame) of the observed time-series values.start: the time of the first observation. For example,start = 1998: data starts at 1998,start = c(1998, 2): data starts at year 1998, second month/quarter.

frequency: the number of observations per unit of time. For example:frequency = 1: yearly data,frequency = 4: quarterly data,frequency = 12: monthly data

Now, we will convert data into a ts object. Since the data are quarterly and start at the first quarter of 1957, we specify start = c(1957, 1) and frequency = 4 in the ts function.

# Create a ts object

tsData <- ts(data, start = c(1957, 1), frequency = 4)

# Explore the ts object

class(tsData)[1] "mts" "ts" "matrix" "array" start(tsData)[1] 1957 1end(tsData)[1] 2013 4frequency(tsData)[1] 4A subset of the ts object can be extracted by using the window() function.

# Taking a subset

newdata <- window(tsData, start = c(1960, 1), end = c(2013, 4))

# Start and end of the new ts object

start(newdata)[1] 1960 1end(newdata)[1] 2013 4We can also use [ to extract a subset of the ts object. For example, the first five observations of the UNRATE variable can be extracted in the following way:

# Extract the first five observations of the UNRATE variable

tsData[1:5, "UNRATE"][1] 3.933333 4.100000 4.233333 4.933333 6.300000# The first three observations of the GDPC96, UNRATE, EXUSUK, and JAPAN_IP variables

head(tsData[, c("GDPC96", "UNRATE", "EXUSUK", "JAPAN_IP")], 3) GDPC96 UNRATE EXUSUK JAPAN_IP

1957 Q1 2851.778 3.933333 NA 8.414363

1957 Q2 2845.453 4.100000 NA 9.097347

1957 Q3 2873.169 4.233333 NA 9.042708The tsData[, "Column name"] syntax can be used to extract the entire column of a variable as a ts object.

UNRATE <- tsData[, "UNRATE"]

class(UNRATE)[1] "ts"To add a column to a ts object, we can use the cbind function. For example, we can add the logarithm of the real GDP variable to the tsData object by using the following code:

# Add log of real GDP to the ts object

combineData <- cbind(tsData, log_GDPC96 = log(tsData[, "GDPC96"]))

head(combineData[, c("tsData.GDPC96", "log_GDPC96")], 3) tsData.GDPC96 log_GDPC96

1957 Q1 2851.778 7.955698

1957 Q2 2845.453 7.953478

1957 Q3 2873.169 7.963171Note that the new column is added to the end of the ts object and the column name is log_GDPC96. The original column name of the real GDP variable is tsData.GDPC96 because the cbind function adds the prefix tsData. to the original column name.

In the following example, we use the R base lag and diff functions to compute the first lag and the first difference of the GDPC96 variable in the tsData object.

# Define the GDP variable as the GDPC96 column of the ts object

GDP <- tsData[, "GDPC96"]

# Compute the first lag of GDPC96

lag_GDP <- stats::lag(GDP, k = -1)

# Compute the first difference of GDPC96

diff_GDP <- diff(GDP, lag = 1)

# Combine the original series, the lag, and the difference into a new ts object

combineData <- cbind(GDP, lag_GDP, diff_GDP)

head(combineData) GDP lag_GDP diff_GDP

1957 Q1 2851.778 NA NA

1957 Q2 2845.453 2851.778 -6.325

1957 Q3 2873.169 2845.453 27.716

1957 Q4 2843.718 2873.169 -29.451

1958 Q1 2770.000 2843.718 -73.718

1958 Q2 2788.278 2770.000 18.278Alternatively, we can also use the ts.union function to combine the original series, the lag, and the difference into a new ts object.

# Using ts.union to combine series

combineData1 <- ts.union(GDP, lag_GDP, diff_GDP)

head(combineData1) GDP lag_GDP diff_GDP

1957 Q1 2851.778 NA NA

1957 Q2 2845.453 2851.778 -6.325

1957 Q3 2873.169 2845.453 27.716

1957 Q4 2843.718 2873.169 -29.451

1958 Q1 2770.000 2843.718 -73.718

1958 Q2 2788.278 2770.000 18.278When we use the ts object in plots, the x-axis will automatically be set to the time period. In the following, we use the base R approach for plotting.

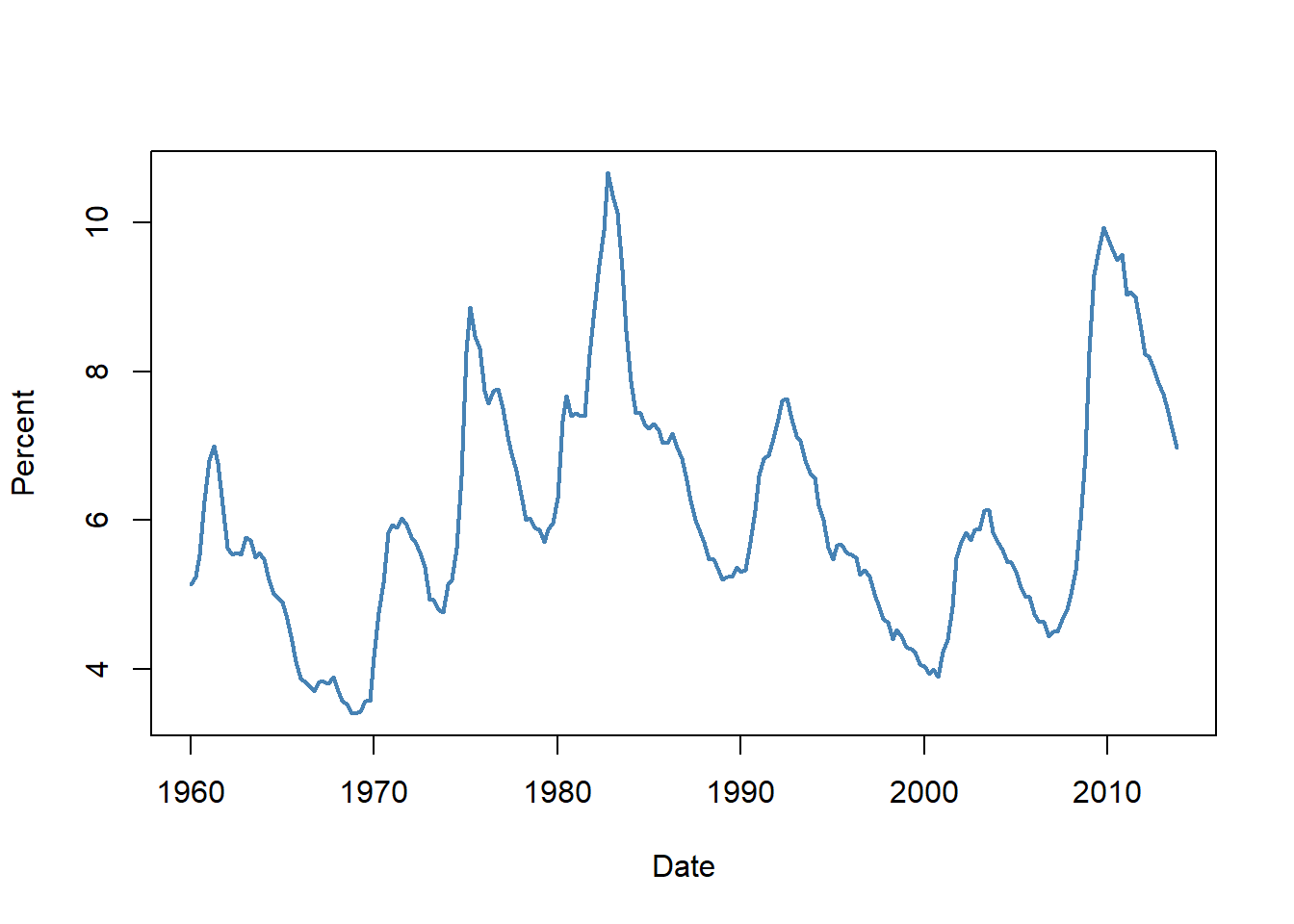

plot(

newdata[, "UNRATE"],

col = "steelblue",

lwd = 2,

ylab = "Percent",

xlab = "Date",

cex.main = 1

)

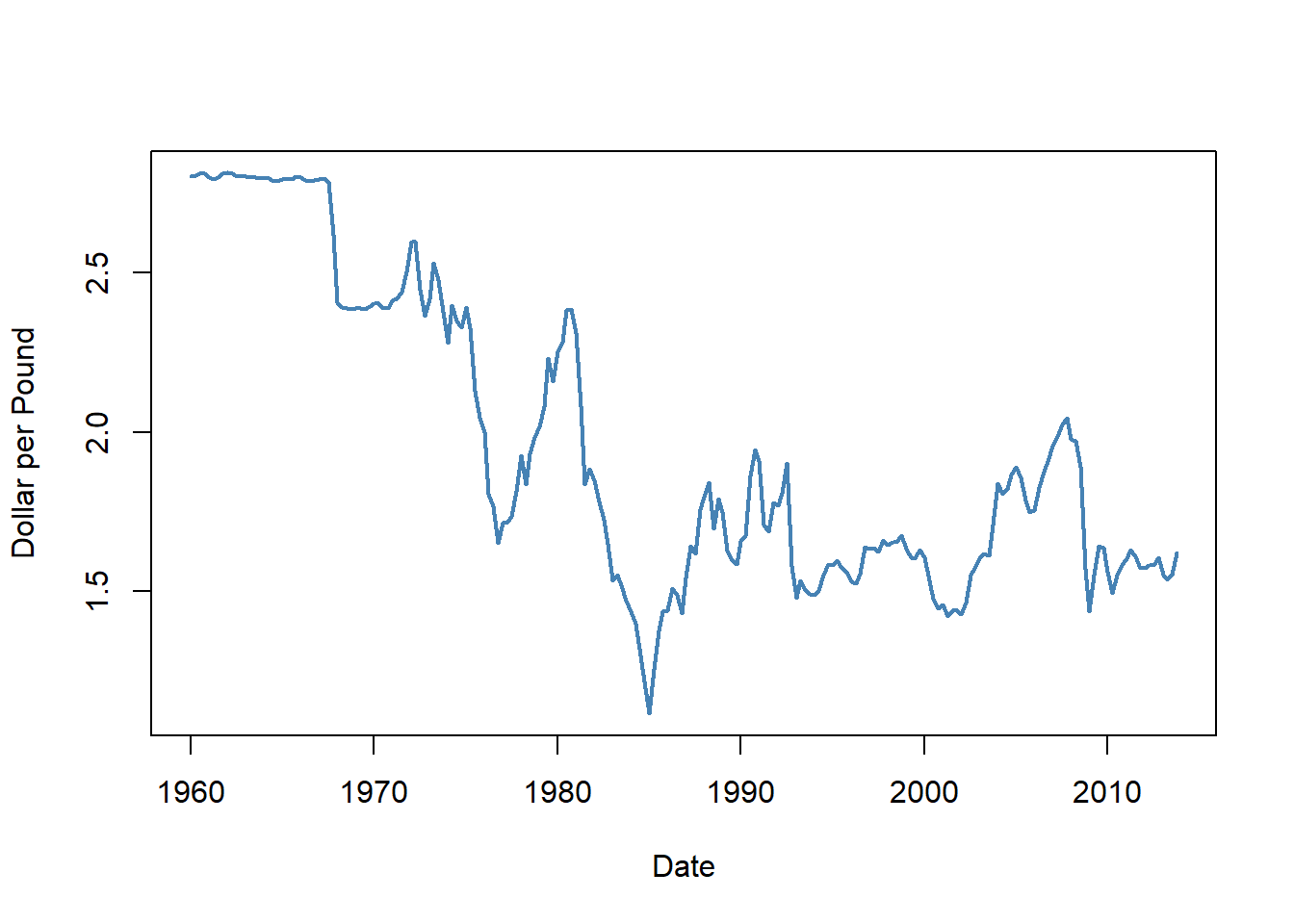

plot(

newdata[, "EXUSUK"],

col = "steelblue",

lwd = 2,

ylab = "Dollar per Pound",

xlab = "Date",

cex.main = 1

)

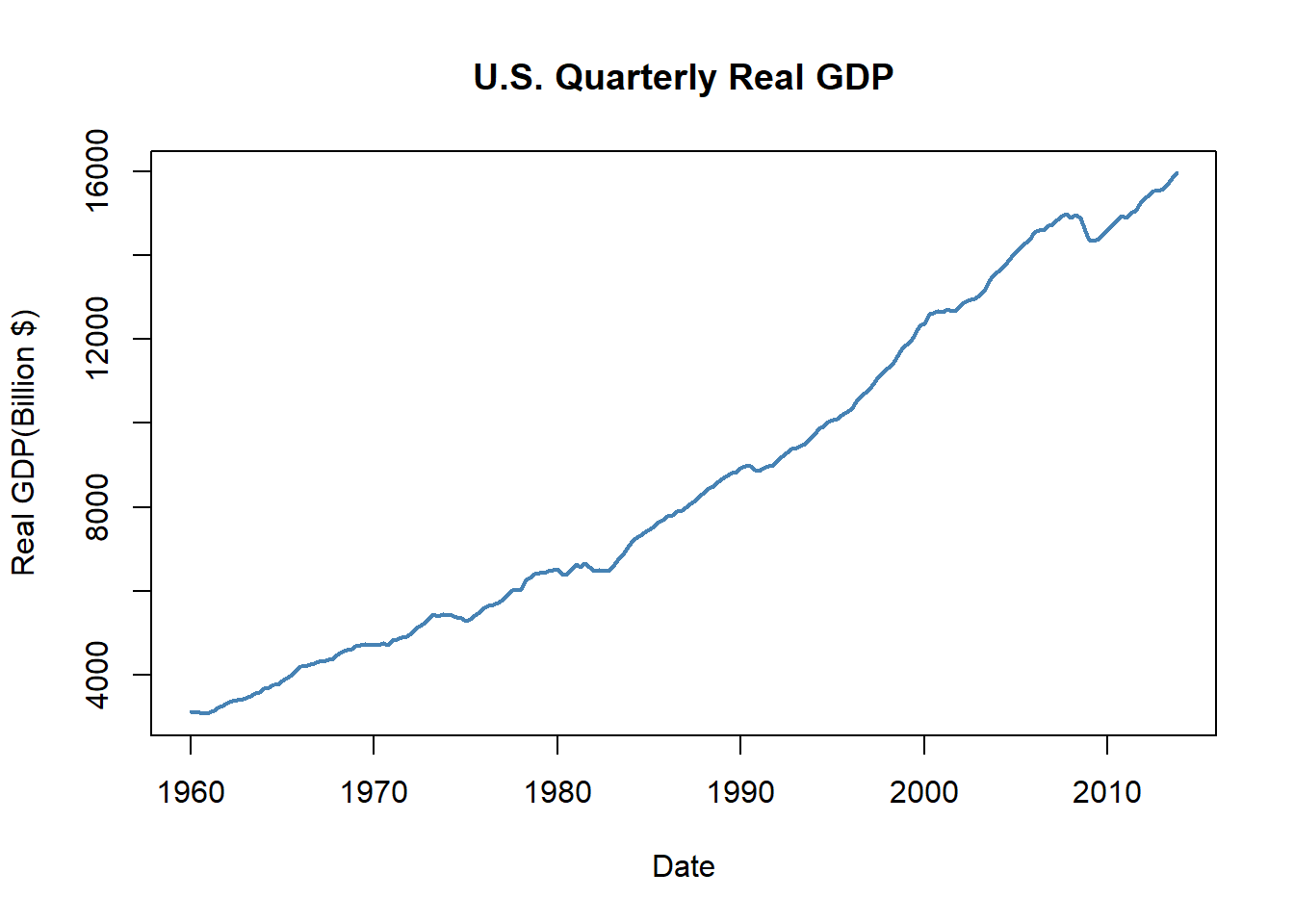

plot(

newdata[, "GDPC96"],

type = "l",

col = "steelblue",

lwd = 2,

ylab = "Real GDP(Billion $)",

xlab = "Date",

main = "U.S. Quarterly Real GDP"

)

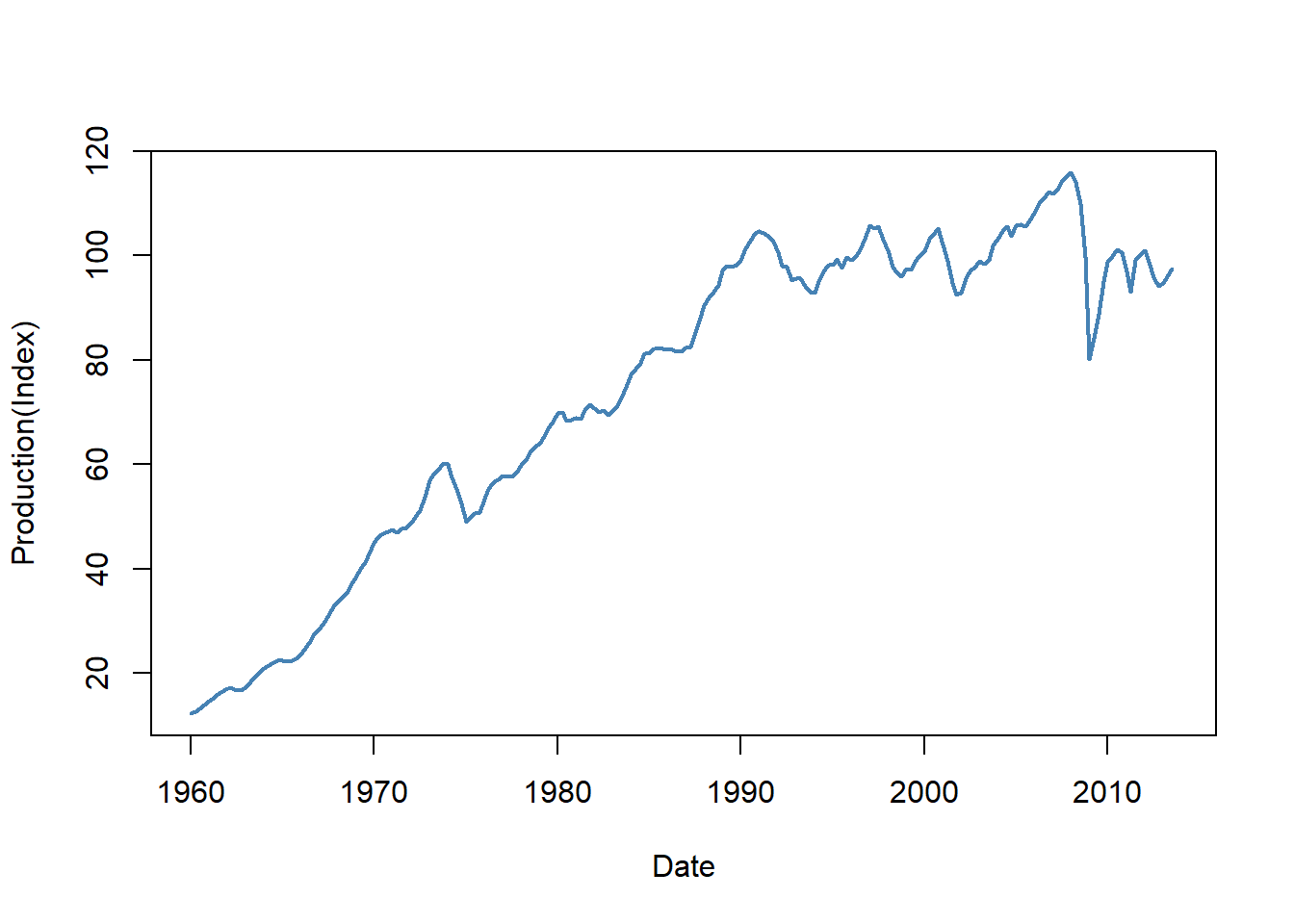

plot(

newdata[, "JAPAN_IP"],

col = "steelblue",

lwd = 2,

ylab = "Production(Index)",

xlab = "Date",

cex.main = 1

)

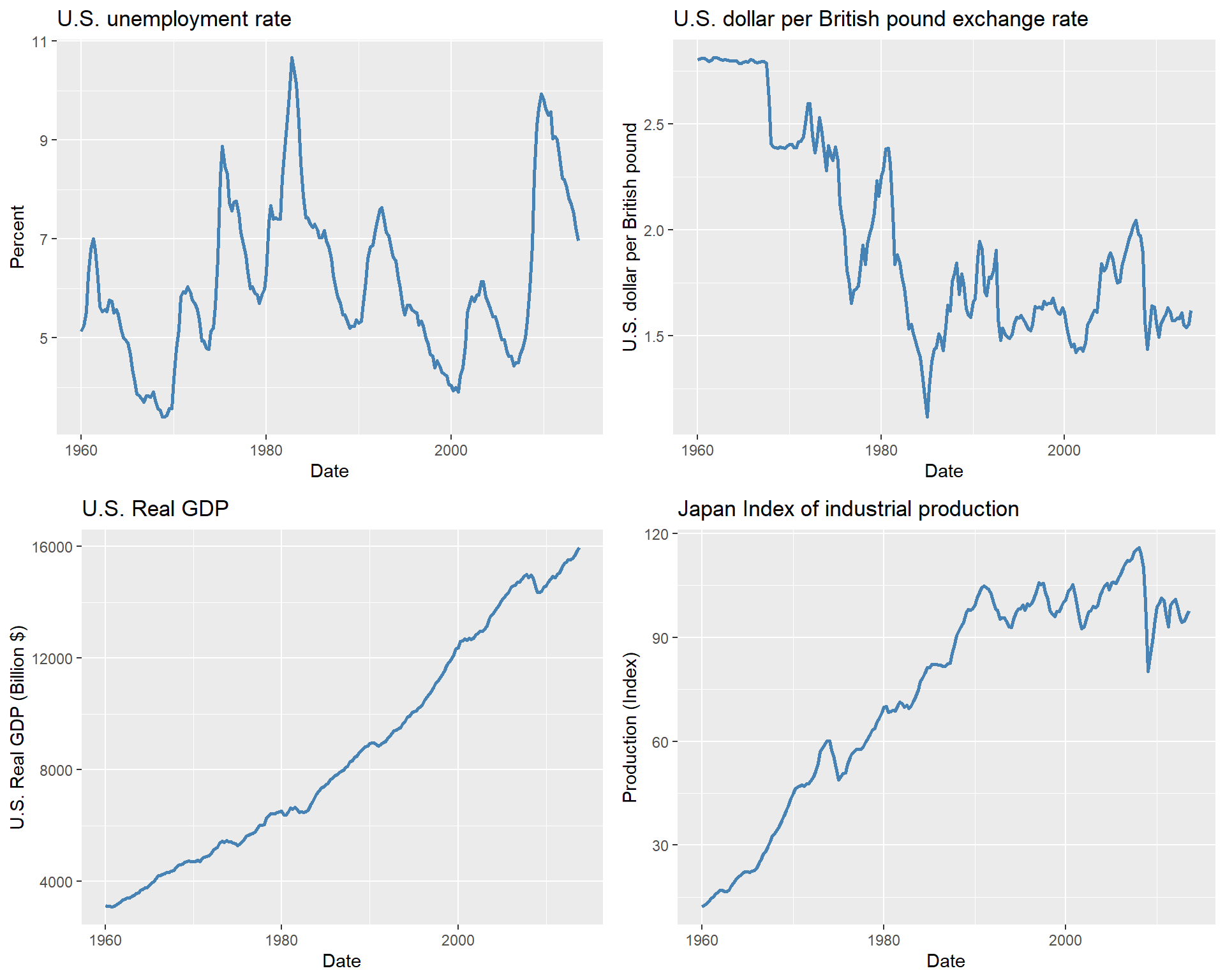

In the next example, we use the tidyverse approach to create the same plots. We first convert the ts object to a data frame. Note that we add a date column to the data frame, which is created by using the time function on the ts object. The time function returns the time index of the ts object, which we convert to a date format using the as.Date function.

# Convert ts object to data frame

df_plot <- data.frame(

date = as.Date(time(newdata)),

UNRATE = newdata[, "UNRATE"],

EXUSUK = newdata[, "EXUSUK"],

GDPC96 = newdata[, "GDPC96"],

JAPAN_IP = newdata[, "JAPAN_IP"]

)

# Plot 1: Unemployment Rate

p1 <- ggplot(df_plot, aes(x = date, y = UNRATE)) +

geom_line(linewidth = 1, color = "steelblue") +

labs(

title = "U.S. unemployment rate",

x = "Date",

y = "Percent"

)

# Plot 2: Exchange Rate

p2 <- ggplot(df_plot, aes(x = date, y = EXUSUK)) +

geom_line(linewidth = 1, color = "steelblue") +

labs(

title = "U.S. dollar per British pound exchange rate",

x = "Date",

y = "U.S. dollar per British pound"

)

# Plot 3: Real GDP

p3 <- ggplot(df_plot, aes(x = date, y = GDPC96)) +

geom_line(linewidth = 1, color = "steelblue") +

labs(

title = "U.S. Real GDP",

x = "Date",

y = "U.S. Real GDP (Billion $)"

)

# Plot 4: Industrial Production

p4 <- ggplot(df_plot, aes(x = date, y = JAPAN_IP)) +

geom_line(linewidth = 1, color = "steelblue") +

labs(

title = "Japan Index of industrial production",

x = "Date",

y = "Production (Index)"

)

# Arrange plots in a 2x2 grid

grid.arrange(p1, p2, p3, p4, ncol = 2)

Finally, in Table 24.1, we collect some useful functions for the ts objects.

ts objects

| Function | Description |

|---|---|

start |

Returns the start time of the ts object. |

end |

Returns the end time of the ts object. |

frequency |

Returns the frequency of the ts object. |

window |

Extracts a subset of the ts object based on specified start and end times. |

time |

Returns the time index of the ts object. |

cbind/ts.union |

Combines multiple ts objects together by concatenating their columns and aligning them based on their time indices. |

rbind |

Combines multiple ts objects together by concatenating their rows and aligning them based on their time indices. |

lag |

Returns a lagged version of the ts object when k is negative and a lead version when k is positive. |

diff |

Returns the differences of the ts object. |

colnames |

Returns the column names of the ts object. |

summary |

Provides summary statistics of the ts object. |

24.3.3 The zoo class

The zoo package provides the zoo class for handling irregular time series data. We use the zoo function to create a zoo object. The syntax of the zoo function is

zoo(

x = NULL,

order.by = index(x),

frequency = NULL,

calendar = getOption("zoo.calendar", TRUE)

)The important arguments of the zoo function are:

xis the vector or matrix, or data frame of observationsorder.byis the index by which the observations should be ordered. In our case, this argument will be set to the time period of our data set.

The zoo object is essentially like a matrix but including an index attribute that specifies the time period of each observation. We can use several standard generic functions for the zoo objects, such as print, summary, str, head, tail, $ and [ for sub-setting.

Zdata <- data

# Convert Date to the quarterly data

Zdata$Date <- as.yearqtr(Zdata$Quarter, format = "%Y:0%q")

# Create a zoo object

zooData <- zoo(Zdata, order.by = Zdata$Date)

# Check class and frequency of the zoo object

class(zooData)[1] "zoo"frequency(zooData)[1] 4Above, we first convert the Quarter column in Zdata to a quarterly data by using as.yearqtr(Zdata$Date, format = "%Y:0%q"). Then, we set the index of the zoo object to the quarterly data: zoo(Zdata, order.by = Zdata$Date). In this way, we added an index of time to the data set (the first column below).

head(zooData[, c("GDPC96", "UNRATE", "EXUSUK", "JAPAN_IP")], 3) GDPC96 UNRATE EXUSUK JAPAN_IP

1957 Q1 2851.778 3.933333 <NA> 8.414363

1957 Q2 2845.453 4.100000 <NA> 9.097347

1957 Q3 2873.169 4.233333 <NA> 9.042708Alternatively, we can use the yearqtr function to create a time index and then create a zoo object.

Zdata <- data

# Create a time index

time_index <- yearqtr(seq(from = 1957, by = 0.25, length.out = nrow(Zdata)))

# Create a zoo object

zooData1 <- zoo(Zdata[-1], order.by = time_index)

# Check the first three observations

head(zooData1[, c("GDPC96", "UNRATE", "EXUSUK", "JAPAN_IP")], 3) GDPC96 UNRATE EXUSUK JAPAN_IP

1957 Q1 2851.778 3.933333 NA 8.414363

1957 Q2 2845.453 4.100000 NA 9.097347

1957 Q3 2873.169 4.233333 NA 9.042708We can also take a subset of the zoo object by using the window function.

# Taking a subset

zooData2 <- window(

zooData,

start = as.yearqtr("1960 Q1"),

end = as.yearqtr("2013 Q4")

)

# Check start and end of the new zoo object

start(zooData2)[1] "1960 Q1"end(zooData2)[1] "2013 Q4"# Return the index of the zoo object

head(zoo::index(zooData2), 5)[1] "1960 Q1" "1960 Q2" "1960 Q3" "1960 Q4" "1961 Q1"We can convert a strictly regular series with numeric index to a ts object by using the as.ts function.

tsdata <- as.ts(zooData)

class(tsdata)[1] "mts" "ts" "matrix" "array" Finally, in Table 24.2, we list some of the functions that are available for the zoo objects.

zoo objects

| Function | Description |

|---|---|

coredata |

Extracts the core data from a zoo object, returning it as a vector or matrix without the time index. |

index |

Returns the index of the zoo object. |

time |

Returns the time index of the zoo object. |

yearqtr |

Converts a date to a quarterly format. |

yearmon |

Converts a date to a monthly format. |

window |

Extracts a subset of a zoo object based on specified start and end times. |

subset |

Extracts a subset of a zoo object based on specified conditions. |

na.omit |

Removes any rows with missing values from a zoo object. |

merge |

Merges multiple zoo objects together based on their time indices. |

cbind |

Combines multiple zoo objects together by concatenating their core data and aligning them based on their time indices. |

rbind |

Combines multiple zoo objects together by concatenating their rows and aligning them based on their time indices. |

24.3.4 The xts class

The xts package extends the zoo package and provides the xts class. The xts class is designed for high-frequency data and provides additional functionality for time-based indexing and manipulation. We can create an xts object by using the xts function. The syntax of the xts function is the same as the zoo function. We can use the as.xts function to convert objects of class timeSeries, ts, irts, fts, matrix, data.frame, and zoo to xts objects.

Zdata <- data

# Convert Date to the quarterly data

Zdata$Date <- as.yearqtr(Zdata$Quarter, format = "%Y:0%q")

# GDP series as xts object

xtsData <- xts(data[, -1], order.by = Zdata$Date)

class(xtsData)[1] "xts" "zoo"head(xtsData[, c("GDPC96", "UNRATE", "EXUSUK", "JAPAN_IP")], 3) GDPC96 UNRATE EXUSUK JAPAN_IP

1957 Q1 2851.778 3.933333 NA 8.414363

1957 Q2 2845.453 4.100000 NA 9.097347

1957 Q3 2873.169 4.233333 NA 9.042708The xts object allows for time-based indexing and manipulation. For example, we can extract the data for 1960:Q1 to 1961:Q4 for the GDPC96 variable in the following way:

# Extract data for the first quarter of 1960

xtsData["1960/1961", "GDPC96"] GDPC96

1960 Q1 3120.195

1960 Q2 3108.361

1960 Q3 3116.104

1960 Q4 3078.384

1961 Q1 3099.314

1961 Q2 3156.922

1961 Q3 3209.561

1961 Q4 3274.61024.3.5 The tsibble class

The tsibble objects extend tidy data frames (tibble objects) by introducing time structure.

y <- tsibble(

Year = 2015:2019,

Observation = c(123, 39, 78, 52, 110),

index = Year

)

class(y)[1] "tbl_ts" "tbl_df" "tbl" "data.frame"print(y)# A tsibble: 5 x 2 [1Y]

Year Observation

<int> <dbl>

1 2015 123

2 2016 39

3 2017 78

4 2018 52

5 2019 110In the following, we convert data to a tsibble object. We first use the yearquarter function from the tsibble package to create a quarterly date format. We then use the seq function to create a sequence of quarterly dates starting from the first quarter of 1957. Finally, we create a tsibble object by using the tsibble function and specifying the index as the Quarters column.

# Convert Date to the quarterly date format

qtr <- yearquarter("1957 Q1")

data$Quarters <- seq(from = qtr, by = 1, length.out = nrow(data))

# Create a tsibble object

tsibbleData <- tsibble(data[, -1], index = Quarters)

class(tsibbleData)[1] "tbl_ts" "tbl_df" "tbl" "data.frame"glimpse(tsibbleData)Rows: 228

Columns: 10

$ GDPC96 <dbl> 2851.778, 2845.453, 2873.169, 2843.718, 2770.000, 2788.278, 2…

$ JAPAN_IP <dbl> 8.414363, 9.097347, 9.042708, 8.796834, 8.632918, 8.414363, 8…

$ PCECTPI <dbl> 16.449, 16.553, 16.687, 16.773, 16.978, 17.009, 17.022, 17.01…

$ GS10 <dbl> 3.403333, 3.626667, 3.926667, 3.633333, 3.040000, 2.923333, 3…

$ GS1 <dbl> 3.390000, 3.540000, 3.963333, 3.586667, 2.160000, 1.350000, 2…

$ TB3MS <dbl> 3.0966667, 3.1400000, 3.3533333, 3.3100000, 1.7566667, 0.9566…

$ UNRATE <dbl> 3.933333, 4.100000, 4.233333, 4.933333, 6.300000, 7.366667, 7…

$ EXUSUK <dbl> NA, NA, NA, NA, NA, NA, NA, NA, 2.809507, 2.814537, 2.808283,…

$ CPIAUCSL <dbl> 27.77667, 28.01333, 28.26333, 28.40000, 28.73667, 28.93000, 2…

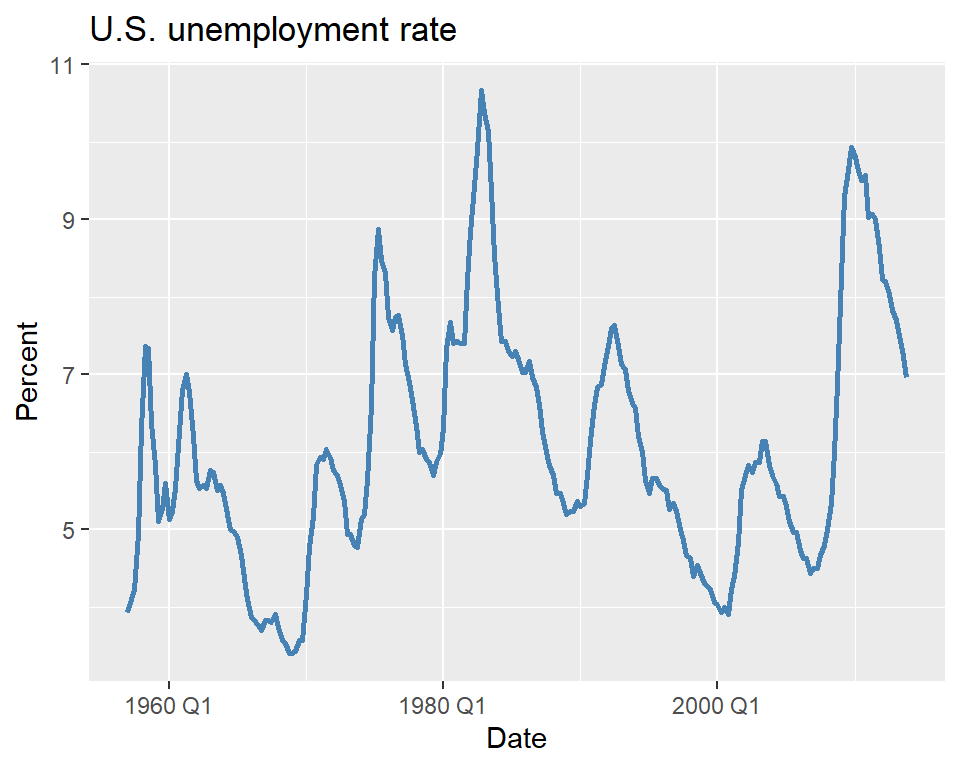

$ Quarters <qtr> 1957 Q1, 1957 Q2, 1957 Q3, 1957 Q4, 1958 Q1, 1958 Q2, 1958 Q3…We can use the standard tidyverse functions for the tsibble objects. In the following, we plot the U.S. unemployment rate.

# Plot the unemployment rate

ggplot(tsibbleData, aes(x = Quarters, y = UNRATE)) +

geom_line(linewidth = 1, color = "steelblue") +

labs(

title = "U.S. unemployment rate",

x = "Date",

y = "Percent"

)

Finally, we can convert a tsibble object to a tibble object by using the as_tibble function:

tibbleData <- as_tibble(tsibbleData)

class(tibbleData)[1] "tbl_df" "tbl" "data.frame"24.4 Terminology

We need to define some new tools and notations for the analysis of time series data. These include:

- Lags, leads and differences over time.

- Logarithms, growth rates, and annualized growth rates.

Definition 24.2 (Lags, leads, differences, growth rates, and annualized growth rates) Let \(\{Y_t\}\) for \(t=1,\ldots,T\) be the time series of interest.

- The first lag of \(Y_t\) is \(Y_{t-1}\); the \(j\)th lag is \(Y_{t-j}\).

- The first lead of \(Y_t\) is \(Y_{t+1}\); the \(j\)th lead is \(Y_{t+j}\).

- The first difference of \(Y_t\) is \(\Delta Y_t = Y_t - Y_{t-1}\).

- The second difference of \(Y_t\) is \(\Delta^2 Y_t = \Delta Y_t - \Delta Y_{t-1}\).

- The one-period growth rate of \(Y\) is given by \(\frac{\Delta Y_t}{Y_{t-1}} = \frac{Y_t - Y_{t-1}}{Y_{t-1}} = \left(\frac{Y_t}{Y_{t-1}}-1\right)\).

- The annualized growth rate of \(Y\) with frequency \(s\) (i.e., annual growth rate if the one period growth rate were compounded) is \[ \left(\left(\frac{Y_t}{Y_{t-1}}\right)^{s} - 1\right). \]

The one-period growth rate of \(Y_t\) from period \(t-1\) to \(t\) defined in Definition 24.2 can be approximated by \(\Delta \ln Y_t\). This approximation is based on the following argument: \[ \begin{align} \Delta \ln Y_t&= \ln\left(\frac{Y_t}{Y_{t-1}}\right) = \ln\left(\frac{Y_{t-1}+\Delta Y_t}{Y_{t-1}}\right)= \ln\left(1+\frac{\Delta Y_t}{Y_{t-1}}\right)\\ &\approx \frac{\Delta Y_t}{Y_{t-1}} = \frac{Y_t - Y_{t-1}}{Y_{t-1}} = \frac{Y_t}{Y_{t-1}} - 1, \end{align} \] where the approximation follows from \(\ln(1+x)\approx x\) when \(x\) is small. Therefore, the annualized growth rate in \(Y_t\) with frequency \(s\) is approximately \(s\times 100 \Delta \ln Y_t\).

As an example, we consider the quarterly real GDP time series contained in the GrowthRate.xlsx file. This file contains data on the following variables:

Y: Logarithm of real GDPYGROWTH: Annual real GDP growth rateRECESSION: Recession indicator (1 if recession, 0 otherwise)

In the following, we compute the first lag and annualized growth rate of the real GDP variable. The annualized percentage growth rate is calculated using the logarithmic approximation: \(4\times100\times\left(\ln(GDP_t)-\ln(GDP_{t-1})\right)=400\times\Delta\ln(GDP_t)\). The results for 2016 and 2017 are given in Table 24.3. For example, the annualized growth rate of the real GDP from 2016:Q4 to 2017:Q1 is \(400\times\left(\ln(16903.24)-\ln(16851.42)\right)=1.23\%\).

# Load data

dfGrowth <- read_excel("data/GrowthRate.xlsx")

# Create time index and compute transformations

dfGrowth <- dfGrowth %>%

mutate(

Time = as.yearqtr(seq.Date(from = as.Date("1960-01-01"), by = "quarter", length.out = n())),

# Transformations

GDP = exp(Y),

AnnualRate = c(NA, diff(Y) * 400),

GDP_lag1 = dplyr::lag(AnnualRate, 1),

ChYGROWTH = YGROWTH - dplyr::lag(YGROWTH, 1)

)

# Subset for 2016–2017

df_subset <- dfGrowth %>%

dplyr::filter(

Time >= as.yearqtr("2016 Q1") & Time <= as.yearqtr("2017 Q4")

) %>%

dplyr::select(Time, GDP, Y, AnnualRate, GDP_lag1)# Display the subset of the data with custom column names

colnames <- c(

"Quarter",

"GDP (billons of $ 2009)",

"Logarithm of GDP",

"Growth Rate of GDP at an Annual Rate",

"First Lag of GDP Growth Rate"

)

kable(df_subset, col.names = colnames, digits = 2, align = "c")| Quarter | GDP (billons of $ 2009) | Logarithm of GDP | Growth Rate of GDP at an Annual Rate | First Lag of GDP Growth Rate |

|---|---|---|---|---|

| 2016 Q1 | 16571.57 | 9.72 | 0.58 | 0.48 |

| 2016 Q2 | 16663.52 | 9.72 | 2.21 | 0.58 |

| 2016 Q3 | 16778.15 | 9.73 | 2.74 | 2.21 |

| 2016 Q4 | 16851.42 | 9.73 | 1.74 | 2.74 |

| 2017 Q1 | 16903.24 | 9.74 | 1.23 | 1.74 |

| 2017 Q2 | 17031.09 | 9.74 | 3.01 | 1.23 |

| 2017 Q3 | 17163.89 | 9.75 | 3.11 | 3.01 |

| 2017 Q4 | 17271.70 | 9.76 | 2.50 | 3.11 |

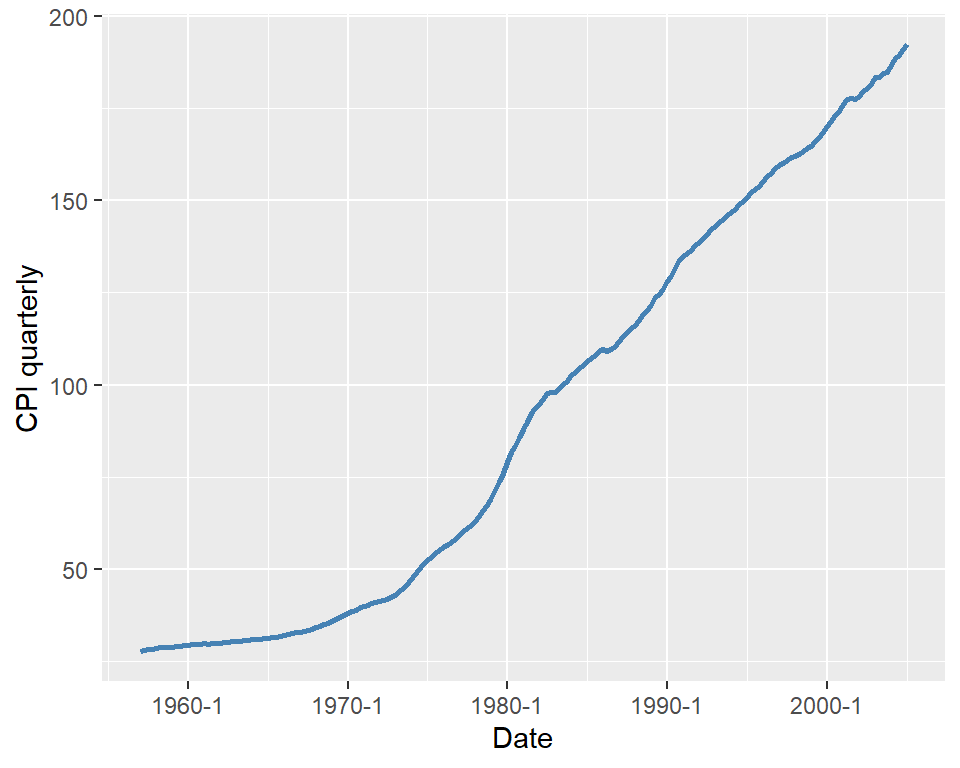

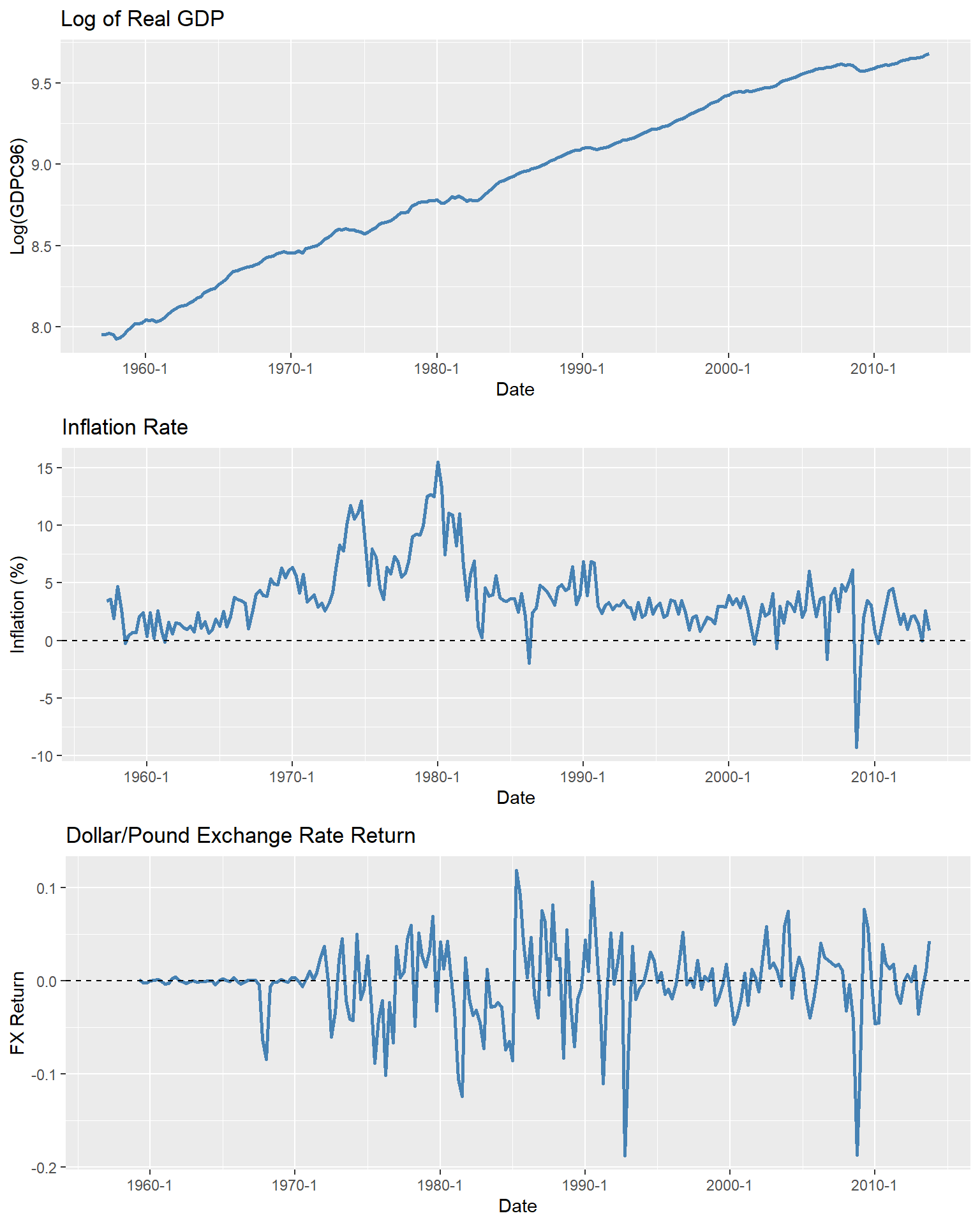

As another example, we consider the quarterly U.S. macroeconomic dataset contained in the macroseries.csv file. We compute the first lag, the first difference, and the annualized growth rate of the U.S. consumer price index (CPI). Figure 24.4 displays the quarterly U.S. CPI index from 1957 to 2005. The shaded areas indicate recession periods, during which the real GDP growth rate is negative. The figure shows that the index exhibits an upward trend over time, particularly after the 1970s.

# Load the macroeconomic data set

dfInflation <- read_csv("data/macroseries.csv")

# Create quarterly date index (equivalent to pandas date_range with QS)

dfInflation <- dfInflation %>%

mutate(

date = as.yearqtr(seq(from = 1957, by = 0.25, length.out = n()))

)

colnames(dfInflation) [1] "periods" "u_rate" "cpi"

[4] "ff_rate" "3_m_tbill" "1_y_tbond"

[7] "dollar_pound_erate" "gdp_jp" "t_from_1960"

[10] "recession" "GS10" "TB3MS"

[13] "RSPREAD" "date" # Plot of the U.S. CPI index

ggplot(dfInflation, aes(x = date, y = cpi)) +

geom_line(color = "steelblue", linewidth =1) +

labs(

x = "Date",

y = "CPI quarterly"

)

For the CPI variable, we compute lags, differences, and annualized growth rates. The CPI in the first quarter of 2004 (2004:Q1) was 186.57, and in the second quarter of 2004 (2004:Q2), it was $188.60. Then, the one-period percentage growth rate of the CPI from 2004:Q1 to 2004:Q2 is

\[ 100\times\left(\frac{188.60-186.57}{186.57}\right)=1.088\%. \]

The annualized growth rate of the CPI (inflation rate) from 2004:Q1 to 2004:Q2 is \(4\times 1.088=4.359\%\). Using the logarithmic approximation, the annualized growth rate of the CPI from 2004:Q1 to 2004:Q2 is \(4\times 100\times(\ln 188.60 - \ln 186.57)=4.329\%\). In Table 24.4, we present the annualized growth rate, the first lag, and the first difference of the CPI for the last five quarters.

# Compute inflation measures

dfInflation <- dfInflation %>%

mutate(

Inflation = 400 * (log(cpi) - dplyr::lag(log(cpi), 1)),

Inf_lag1 = dplyr::lag(Inflation, 1),

Delta_Inf = Inflation - Inf_lag1

)

# Subset last 5 rows and round

df_subset <- dfInflation %>%

dplyr::filter(

date >= as.yearqtr("2004 Q1") & date <= as.yearqtr("2005 Q1")

) %>%

dplyr::select(date, cpi, Inflation, Inf_lag1, Delta_Inf)# Set the column names

colnames <- c(

"Quarter",

"CPI",

"Inflation",

"First Lag of Inflation",

"Change in Inflation"

)

kable(df_subset, col.names = colnames, digits = 2, align = "c")| Quarter | CPI | Inflation | First Lag of Inflation | Change in Inflation |

|---|---|---|---|---|

| 2004 Q1 | 186.57 | 3.81 | 0.87 | 2.94 |

| 2004 Q2 | 188.60 | 4.34 | 3.81 | 0.53 |

| 2004 Q3 | 189.37 | 1.62 | 4.34 | -2.71 |

| 2004 Q4 | 191.03 | 3.51 | 1.62 | 1.88 |

| 2005 Q1 | 192.17 | 2.37 | 3.51 | -1.14 |

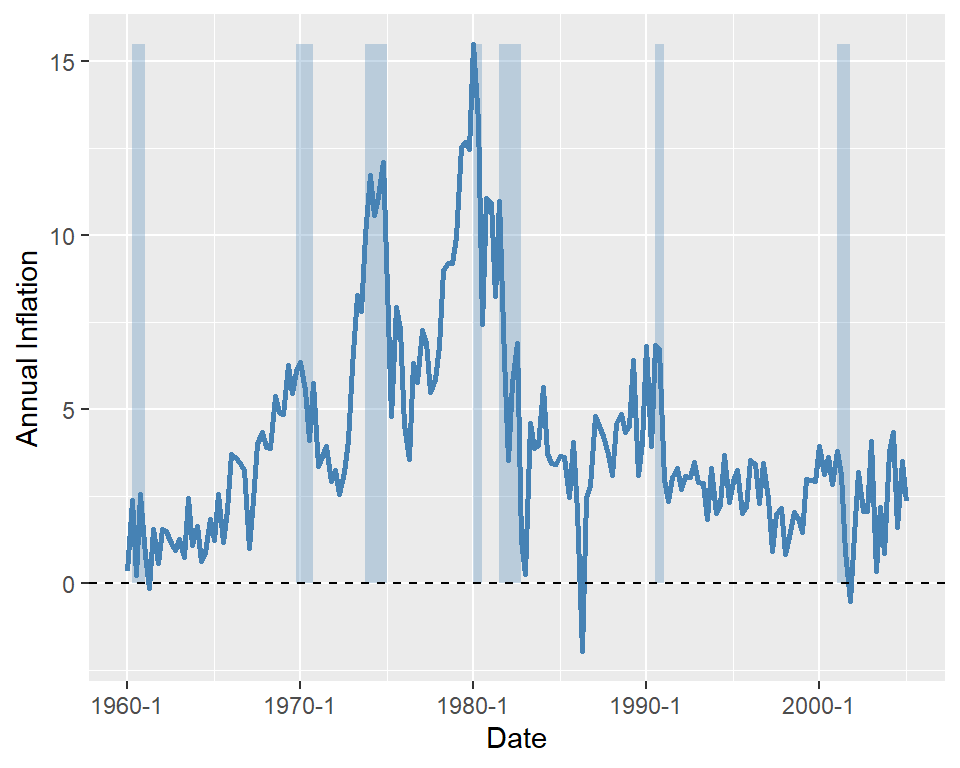

Finally, in Figure 24.5, we plot the U.S. quarterly inflation rate over 1960–2005. The figure shows that inflation fluctuates over time, exhibiting an upward trend until 1980, followed by a decline.

# Plot of U.S. quarterly inflation rate

# Subset for 1960 Q1 to 2005 Q1

filter <- dfInflation$date >= as.yearqtr("1960 Q1") &

dfInflation$date <= as.yearqtr("2005 Q1")

df_plot <- dfInflation[filter, ]

ymin <- min(df_plot$Inflation, na.rm = TRUE)

ymax <- max(df_plot$Inflation, na.rm = TRUE)

ggplot(df_plot, aes(x = date)) +

geom_rect(

data = subset(df_plot, recession == 1),

aes(xmin = date - 0.1, xmax = date + 0.1, ymin = 0, ymax = ymax),

inherit.aes = FALSE,

fill = "steelblue",

alpha = 0.3

) +

geom_line(aes(y = Inflation), color = "steelblue", linewidth = 1) +

geom_hline(yintercept = 0, linetype = "dashed", color = "black") +

labs(

x = "Date",

y = "Annual Inflation"

)

24.5 Stationarity and autocorrelation

The idea of random sampling from a large population is not suitable for time series data. It is very common for time series data to exhibit correlation over time. Therefore, in order to learn about the distribution \(\{Y_1,\dots,Y_T\}\) (joint, marginals, conditionals) a notion of constancy is needed (Hansen (2022)). Such a notion is stationarity, which is one of the most important concepts in time series analysis. It enables us to use historical relationships to forecast future values, making it a key requirement for ensuring external validity. Intuitively, if a time series is stationary, its future resembles its past in a probabilistic sense.

Definition 24.3 (Strict stationarity) A time series \(Y\) is strictly stationary if its probability distribution does not change over time, i.e., if the joint distribution of \((Y_{s+1},Y_{s+2},\dots,Y_{s+T})\) does not depend on \(s\) regardless of the value of \(T\). Otherwise, \(Y\) is said to be nonstationary. A pair of time series \(Y\) and \(X\) is said to be jointly stationary if the joint distribution of \((X_{s + 1}, Y_{s + 1}, X_{s + 2}, Y_{s + 2},\ldots, X_{s + T}, Y_{s + T})\) does not depend on \(s\) regardless of the value of \(T\).

This definition can be generalized the \(k\)-vector of time series processes. Strict stationarity is a strong assumption as it does not allow for heterogeneity and/or strong persistence. Therefore, a weaker notion of stationarity is often used in time series analysis, which is called weak stationarity.

Definition 24.4 (Weak stationarity) A time series \(Y\) is weakly stationary if its first and second moments exist and are finite, and they do not change over time. Also, its \(k\)-th autocovariance must only depend on \(k\) regardless of the value of \(T\).

Assume that \(Y\) is weakly stationary such that \(\text{E}(Y_t) = \mu\) and \(\text{var}(Y_t) = \sigma_Y^2\) for all \(t\). It is natural to expect that the leads and lags of \(Y\) are correlated. The correlation of \(Y_t\) with its lag terms is called autocorrelation or serial correlation.

Definition 24.5 (Autocorrelation) The \(k\)-th autocovariance of a series \(Y\) (denoted by \(\gamma(k)\equiv\text{cov}(Y_{t},Y_{t-k})\)) is the covariance between \(Y_t\) and its \(k\)-th lag \(Y_{t-k}\) (or \(k\)-th lead \(Y_{t+k}\)). The \(k\)-th autocorrelation coefficient between \(Y_t\) and \(Y_{t-k}\) is \[ \begin{align*} \rho(k) = \text{corr}(Y_{t},Y_{t-k})=\frac{\gamma(k)}{\sqrt{\text{var}(Y_t)\text{var}(Y_{t-k})}}=\frac{\gamma(k)}{\sigma_Y^2}. \end{align*} \tag{24.2}\]

The \(k\)-th autocorrelation coefficient is also called the \(k\)-th serial correlation coefficient and it is a measure of linear dependence. We can use the sample counterparts of the terms in Definition 24.5 to estimate the autocorrelation coefficients. The sample variance of \(Y\) is given by \[ \begin{align*} s_Y^2 = \frac{1}{T}\sum_{t=1}^T (Y_t - \overline{Y})^2, \end{align*} \]

where \(\overline{Y}\) is the sample average of \(Y\). The \(k\)-th sample autocovariance can be computed as \[ \begin{align*} \widehat{\gamma}(k) = \frac{1}{T}\sum_{t=k+1}^T(Y_t - \overline{Y}_{k+1,T})(Y_{t-k} - \overline{Y}_{1,T-k}), \end{align*} \tag{24.3}\]

where \(\overline{Y}_{k+1,T}\) is the sample average of \(Y\) for \(t=k+1,k+2,\dots,T\). Then, we can estimate the \(\rho(k)\) by the \(k\)th sample autocorrelation (serial correlation), which is given by \[ \begin{align*} \widehat{\rho}(k) = \frac{\widehat{\gamma}(k)}{s_Y^2}. \end{align*} \tag{24.4}\]

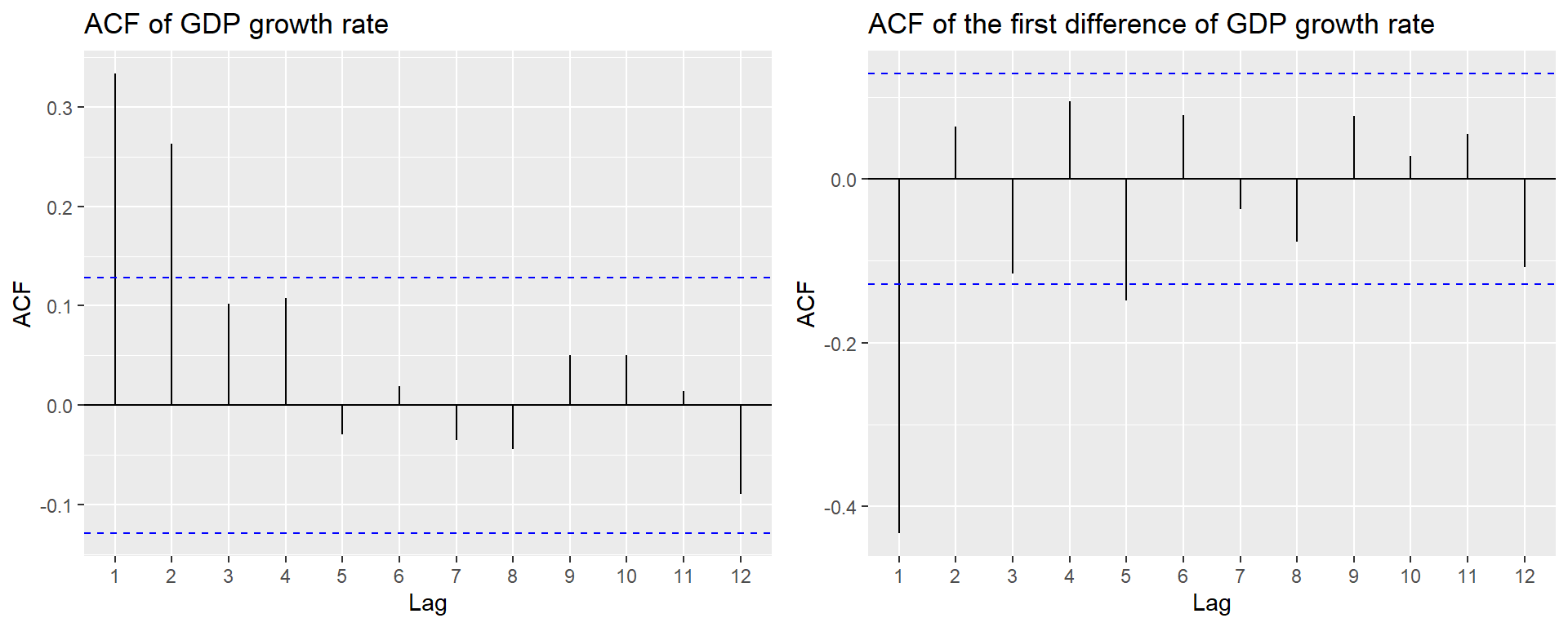

In R, there are multiple functions from different packages that can be used to compute sample autocorrelations. The base R function acf can be used to compute the sample autocorrelation coefficients and plot the sample autocorrelation function. Another function is the ggAcf function from the forecast package. As an example, we compute the sample serial autocorrelations of the U.S. GDP growth rate. Below, we use the ggAcf function. The first four sample autocorrelations are \(\hat{\rho}_1 = 0.33\), \(\hat{\rho}_2 = 0.26\), \(\hat{\rho}_3 = 0.10\), and \(\hat{\rho}_4 = 0.11\). These values suggest that GDP growth rates are mildly positively autocorrelated: when GDP grows faster than average in one period, it tends to grow faster than average in the subsequent period as well. In Figure 24.6, we plot the sample autocorrelation function of the GDP growth rate and its first difference. The figure shows that the GDP growth rate is positively autocorrelated, while the first difference of the GDP growth rate is negatively autocorrelated at lag 1 and has no significant autocorrelation at lags greater than 1.

ggAcf(dfGrowth$YGROWTH, lag.max = 12, plot = FALSE)

Autocorrelations of series 'dfGrowth$YGROWTH', by lag

0 1 2 3 4 5 6 7 8 9 10

1.000 0.333 0.263 0.102 0.108 -0.029 0.019 -0.035 -0.044 0.051 0.051

11 12

0.015 -0.089 # Serial correlation of the US GDP growth rate and its first difference

p1 <- ggAcf(dfGrowth$YGROWTH, lag.max = 12, plot = TRUE) +

labs(title = "ACF of GDP growth rate")

p2 <- ggAcf(dfGrowth$ChYGROWTH, lag.max = 12, plot = TRUE) +

labs(title = "ACF of the first difference of GDP growth rate")

grid.arrange(p1, p2, ncol = 2)

24.6 Forecast and forecast errors

Forecasting refers to predicting the values of a variable for the near future (out-of-sample). We denote the forecast for period \(T+h\) of a time series \(Y\), based on past observations \(Y_{T}, Y_{T-1}, \ldots\), and using a forecast rule (e.g., the oracle predictor), by \(Y_{T+h|T}\). When the forecast is based on an estimated rule, we denote it by \(\widehat{Y}_{T+h|T}\). Here, \(h\) represents the forecast horizon. When \(h = 1\), \(\widehat{Y}_{T+1|T}\) is called a one-step-ahead forecast; when \(h > 1\), \(\widehat{Y}_{T+h|T}\) is referred to as a multi-step-ahead forecast.

The forecast error is the difference between the actual value of the time series and the forecasted value: \[ \begin{align*} \text{Forecast error} = Y_{T+1} - \widehat{Y}_{T+1|T}. \end{align*} \tag{24.5}\]

To assess the forecast accuracy, we can use the mean squared forecast error (MSFE) and the root mean squared forecast error (RMSFE).

Definition 24.6 (Mean squared forecast error) The mean squared forecast error (MSFE) is \(\text{E}(Y_{T+1} - \widehat{Y}_{T+1|T})^2\), and the square root of the MSFE is called the root mean squared forecast error (RMSFE).

The MSFE and RMSFE can be used to compare the forecast accuracy of different models. There are two sources of randomness in the MSFE: (i) the randomness of the future value \(Y_{T+1}\), and (ii) the randomness of the estimated forecast \(\widehat{Y}_{T+1|T}\). Both randomness contributes to the forecast error. Assume that we use the mean \(\mu_Y\) of \(Y\) as the forecast for \(Y_{T+1}\). In this case, the estimated forecast is \(\widehat{Y}_{T+1|T}=\hat{\mu}_Y\), where \(\hat{\mu}_Y\) is an estimate of \(\mu_Y\). Then, the MSFE can be decomposed as follows: \[ \begin{align*} \text{MSFE} = \text{E}\left(Y_{T+1} - \hat{\mu}_Y\right)^2 = \E(Y_{T+1}-\mu_Y)^2+\E(\mu_Y-\hat{\mu}_Y)^2, \end{align*} \tag{24.6}\] where the second equality follows from the assumption that \(Y_{T+1}\) is uncorrelated with \(\hat{\mu}_Y\). The first term shows the error due to the deviation of \(Y_{T+1}\) from the mean \(\mu_Y\). The second term shows the error due to the deviation of the estimated forecast \(\hat{\mu}_Y\) from the mean \(\mu_Y\).

Definition 24.7 (Oracle forecast) The forecast rule that minimizes the MSFE is the conditional mean of \(Y_{T+1}\) given the in-sample observations \(Y_{1}, Y_{2},\dots,Y_T\), i.e., \(\E(Y_{T+1}|Y_{1}, Y_{2},\dots,Y_T)\). This forecast rule is called the oracle forecast.

The MSFE of the oracle predictor is the minimum possible MSFE. In Section 24.11, we discuss methods for estimating the MSFE and RMSFE in practice.

24.7 Autoregressions

To forecast the future values of the time series \(Y_t\), a natural starting point is to use information available from the history of \(Y_t\). An autoregression (AR) model is a regression in which \(Y_t\) is regressed on its own past values (time lag terms). The number of lags included as regressors is referred to as the order of the regression.

Definition 24.8 (The AR(\(p\)) model) The \(p\)-th order autoregressive (AR(p)) model represents the conditional expectation of \(Y_t\) as a linear function of \(p\) of its lagged values: \[ Y_t = \beta_0+\beta_1 Y_{t-1}+\beta_2Y_{t-2}+\dots+\beta_p Y_{t-p}+u_t, \tag{24.7}\]

where \(\E\left(u_t|Y_{t-1},Y_{t-2}, \dots\right)=0\). The number of lags \(p\) is called the order, or the lag length, of the autoregression.

The coefficients in the AR(p) model do not have a causal interpretation. For example, if we fail to reject \(H_0:\beta_1=0\), then we will conclude that \(Y_{t-1}\) is not useful for forecasting \(Y_t\).

The condition \(\E\left(u_t|Y_{t-1}, Y_{t-2}, \dots\right) = 0\) in Definition 24.8 has two important implications. The first implication is that the error terms \(u_t\)’s are uncorrelated, i.e., \(\text{corr}(u_t,u_{t-k})=0\) for \(k\geq 1\). To see this, we know that if \(\E(u_t|u_{t-1})=0\), then \(\corr(u_t,u_{t-1})=0\) by the law of iterated expectations. The AR(p) model implies that \[ u_{t-1}=Y_{t-1}-\beta_0-\beta_1 Y_{t-2}-\cdots-\beta_p Y_{t-p-1}=f(Y_{t-1},Y_{t-2},\dots,Y_{t-p-1}), \] where \(f\) indicates that \(u_{t-1}\) is a function of \(Y_{t-1},Y_{t-2},\dots,Y_{t-p-1}\). Then, \(\E(u_t|Y_{t-1},Y_{t-2},\dots)=0\) suggests that \[ \E(u_t|u_{t-1})=\E(u_t|f(Y_{t-1},Y_{t-2},\dots,Y_{t-p-1}))=0. \]

Thus, \(\corr(u_t,u_{t-1})=0\). A similar argument can be made for \(\corr(u_t,u_{t-2})=0\), and so on.

The second implication is that the best forecast of \(Y_{T+1}\) based on its entire history depends on only the most recent \(p\) past values: \[ \begin{align*} &Y_t = \beta_0+\beta_1 Y_{t-1}+\beta_2Y_{t-2}+\dots+\beta_pY_{t-p}+u_t\\ &\implies Y_{T+1} = \beta_0+\beta_1 Y_{T}+\beta_2Y_{T-1}+\dots+\beta_pY_{T-p+1}+u_{T+1}\\ &\implies \underbrace{\E(Y_{T + 1}| Y_1,\dots, Y_{T})}_{Y_{T+1|T}}=\beta_0+\beta_1 Y_{T}+\beta_2Y_{T-1}+\dots+\beta_pY_{T-p+1}. \end{align*} \]

In R, we can use the dynlm function from the dynlm package to estimate AR models. This function uses the OLS estimator to estimate the coefficients in the AR model. Alternatively, we can also use the arima function from the stats package to estimate AR models. The arima function uses the maximum likelihood estimator to estimate the coefficients in the AR model.

As an example, we use the data set in dfGrowth on the U.S. GDP growth rate over the period 1962:Q1-2017:Q3 to estimate the following AR models: \[

\begin{align}

&\text{GDPGR}_t=\beta_0+\beta_1\text{GDPGR}_{t-1}+u_t,\\

&\text{GDPGR}_t=\beta_0+\beta_1\text{GDPGR}_{t-1}+\beta_2\text{GDPGR}_{t-2}+u_t,

\end{align}

\tag{24.8}\] where \(\text{GDPGR}_t\) denotes the GDP growth rate at time \(t\). We use the OLS estimator with heteroskedasticity-robust standard errors to estimate these models.

# Convert dfGrowth to a ts object

tsGrowth <- ts(dfGrowth, start = c(1960, 1), frequency = 4)

# Model 1

r1 <- dynlm(

YGROWTH ~ L(YGROWTH, 1),

start = c(1962, 1),

end = c(2017, 3),

data = tsGrowth

)

# Model 2

r2 <- dynlm(

YGROWTH ~ L(YGROWTH, 1) + L(YGROWTH, 2),

start = c(1962, 1),

end = c(2017, 3),

data = tsGrowth

)# Estimation results for the AR(1) and AR(2) models

modelsummary(

models = list("AR(1)" = r1, "AR(2)" = r2),

vcov = "HC1",

stars = TRUE

)| AR(1) | AR(2) | |

|---|---|---|

| (Intercept) | 1.950*** | 1.603*** |

| (0.324) | (0.372) | |

| L(YGROWTH, 1) | 0.341*** | 0.279*** |

| (0.073) | (0.077) | |

| L(YGROWTH, 2) | 0.177* | |

| (0.077) | ||

| Num.Obs. | 223 | 223 |

| R2 | 0.117 | 0.145 |

| R2 Adj. | 0.113 | 0.138 |

| AIC | 1134.9 | 1129.7 |

| BIC | 1145.1 | 1143.3 |

| Log.Lik. | -564.431 | -560.848 |

| F | 21.599 | 13.370 |

| RMSE | 3.04 | 2.99 |

| Std.Errors | HC1 | HC1 |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | ||

The estimation results are presented in Table 24.5. Note the option vcov = "HC1" in the modelsummary function indicates that we use heteroskedasticity-robust standard errors. The estimated models can be expressed as follows: \[

\begin{align}

&\widehat{\text{GDPGR}}_t=1.955+0.336\text{GDPGR}_{t-1},\\

&\widehat{\text{GDPGR}}_t=1.608+0.276\text{GDPGR}_{t-1}+0.176\text{GDPGR}_{t-2}.

\end{align}

\]

Note that we use the sample data over the period 1962:Q1-2017:Q3 to estimate both models. Thus, the last time period in the sample is \(T=2017:Q3\). We want to obtain the forecast of the growth rate in \(T+1=2017:Q4\) based on the estimated AR(2) model. The estimated model suggests that \[ \begin{align*} \text{GDPGR}_{2017:Q4|2017:Q3} &= 1.61 + 0.28 \text{GDPGR}_{2017:Q3} + 0.18 \text{GDPGR}_{2017:Q2}\\ & = 1.61 + 0.28\times 3.11 + 0.18 \times 3.01\approx3.0. \end{align*} \] Since the actual growth rate in 2017:Q4 is \(2.5\%\), the forecast error is \(2.5\% - 3.0\% = -0.5\) percentage points.

Note that we use the OLS estimator to estimate the AR models. In R, we can also use arima function from the stats package to estimate AR models. In the following, we use this function to estimate the AR(2) model for the GDP growth rate.

# Estimate the AR(2) model for the GDP growth rate

arima_model <- arima(tsGrowth[, "YGROWTH"], order = c(2, 0, 0))

summary(arima_model)

Call:

arima(x = tsGrowth[, "YGROWTH"], order = c(2, 0, 0))

Coefficients:

ar1 ar2 intercept

0.2774 0.1691 2.9993

s.e. 0.0653 0.0655 0.3609

sigma^2 estimated as 9.346: log likelihood = -588.54, aic = 1185.08

Training set error measures:

ME RMSE MAE MPE MAPE MASE ACF1

Training set -0.01068332 3.057146 2.249573 -82.59193 166 0.6926154 0.005397039For forecasting, we can use either the predict function from the stats package or the forecast function from the forecast package. Below, we use the forecast function to obtain the forecast of the GDP growth rate in 2017:Q4 based on the estimated AR(2) model. Note that the forecast is close to the forecast obtained from the dynlm function, which is approximately 3.0%.

forecast::forecast(arima_model, h = 1, level = 95) Point Forecast Lo 95 Hi 95

2018 Q1 2.880319 -3.111578 8.872216In the next example, we use the data set in macroseries.csv to estimate the AR(1) and AR(4) models for the change in the U.S. inflation rate.

# Convert dfInflation to a ts object

tsInflation <- ts(dfInflation, start = c(1957, 1), frequency = 4)

# Model 1

AR_1 <- dynlm(

Delta_Inf ~ L(Delta_Inf, 1),

start = c(1962, 1),

end = c(2004, 4),

data = tsInflation

)

# Model 2

AR_4 <- dynlm(

Delta_Inf ~ L(Delta_Inf, 1) + L(Delta_Inf, 2) + L(Delta_Inf, 3) + L(Delta_Inf, 4),

start = c(1962, 1),

end = c(2004, 4),

data = tsInflation

)# Estimation results for the AR(1) and AR(4) models

modelsummary(

models = list("AR(1)" = AR_1, "AR(4)" = AR_4),

vcov = "HC1",

stars = TRUE

)| AR(1) | AR(4) | |

|---|---|---|

| (Intercept) | 0.017 | 0.022 |

| (0.127) | (0.118) | |

| L(Delta_Inf, 1) | -0.238* | -0.258** |

| (0.097) | (0.093) | |

| L(Delta_Inf, 2) | -0.322*** | |

| (0.081) | ||

| L(Delta_Inf, 3) | 0.158+ | |

| (0.084) | ||

| L(Delta_Inf, 4) | -0.030 | |

| (0.093) | ||

| Num.Obs. | 172 | 172 |

| R2 | 0.056 | 0.204 |

| R2 Adj. | 0.051 | 0.185 |

| AIC | 667.3 | 644.0 |

| BIC | 676.7 | 662.9 |

| Log.Lik. | -330.634 | -316.023 |

| F | 6.085 | 7.932 |

| RMSE | 1.65 | 1.52 |

| Std.Errors | HC1 | HC1 |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | ||

The estimation results are presented in Table 24.6. The estimated AR(\(1\)) model is \[ \widehat{\Delta \text{inf}}_t = 0.017 - 0.238 \Delta \text{inf}_{t-1}. \]

The coefficient estimate for the lag of the change in inflation is -0.238 and it is statistically significant at the conventional levels. In the dataset, the inflation rate for 2004:Q3 is 1.6 and for 2004:Q4 is 3.5. Hence, \(\Delta \text{inf}_{\text{2004:Q4}}=3.5-1.6=1.9\). Then, \(\widehat{\Delta \text{inf}}_{\text{2005:Q1}}=0.017 - 0.238\times 1.9 = -0.4352\) and \(\widehat{\text{inf}}_{\text{2005:Q1}}=-0.4352 + 3.5 = 3.0648\).

The estimated AR(4) model for the change in inflation is \[ \widehat{\Delta \text{inf}}_t = 0.022 - 0.258 \Delta \text{inf}_{t-1} - 0.322 \Delta \text{inf}_{t-2} + 0.158 \Delta \text{inf}_{t-3} - 0.030 \Delta \text{inf}_{t-4}. \]

We observe that the fit of the model improves significantly compared to the AR(1) model, as indicated by an increase in the adjusted R-squared to 0.185. Furthermore, in the AR(4) model, the additional time lag terms are jointly statistically significant, as shown by the \(F\)-test result below.

# F-test for the joint significance of the lagged changes in inflation

linearHypothesis(AR_4, c("L(Delta_Inf, 2) = 0", "L(Delta_Inf, 3) = 0", "L(Delta_Inf, 4) = 0"), white.adjust = "hc1")

Linear hypothesis test:

L(Delta_Inf, 2) = 0

L(Delta_Inf, 3) = 0

L(Delta_Inf, 4) = 0

Model 1: restricted model

Model 2: Delta_Inf ~ L(Delta_Inf, 1) + L(Delta_Inf, 2) + L(Delta_Inf,

3) + L(Delta_Inf, 4)

Note: Coefficient covariance matrix supplied.

Res.Df Df F Pr(>F)

1 170

2 167 3 6.7064 0.0002666 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 124.8 Autoregressive distributed lag model

In AR models, we use only the lagged values of the dependent variable \(Y\) as predictors. However, if there are other variables that may help in predicting \(Y\), we can incorporate them into the regression model. The autoregressive distributed lag (ADL) model is such a model. It includes lags of both the dependent variable \(Y\) and other explanatory variables as predictors.

Definition 24.9 (Autoregressive distributed lag model) The autoregressive distributed lag model with \(p\) lags of \(Y_t\) and \(q\) lags of \(X_t\), denoted ADL(\(p,q\)), is \[ Y_t = \beta_0 + \beta_1 Y_{t-1} + \dots + \beta_p Y_{t-p}+ \delta_1 X_{t-1}+\dots+ \delta_q X_{t-q}+u_t, \tag{24.9}\]

where \(\beta_0, \beta_1,\dots,\beta_p, \delta_1,\dots,\delta_q\), are unknown coefficients and \(u_t\) is the error term with \(\E\left(u_t|Y_{t-1}, Y_{t-2},\dots, X_{t-1}, X_{t-2},\dots\right) = 0\).

The assumption \(\E\left(u_t|Y_{t-1}, Y_{t-2},\dots, X_{t-1}, X_{t-2},\dots\right) = 0\) suggests that the error terms \(u_t\)’s are serially uncorrelated. Moreover, it indicates that the lag lengths \(p\) and \(q\) are correctly specified, such that the coefficients of lag terms beyond \(p\) and \(q\) are zero.

If there are multiple predictors \(X_1,\ldots,X_k\), then the ADL model defined in Definition 24.9 can be extended as follows:

\[

\begin{align}

Y_t &= \beta_0 + \beta_1Y_{t-1} + \beta_2Y_{t-2} +\ldots+ \beta_pY_{t-p}\nonumber\\

&+\delta_{11}X_{1t-1} + \delta_{12}X_{1t-2} + \ldots+\delta_{1q_1}X_{1t-q_1}\nonumber\\

&+\ldots+\delta_{k1}X_{kt-1} + \delta_{k2}X_{kt-2} + \ldots+\delta_{kq_k}X_{kt-q_k}+u_t,

\end{align}

\tag{24.10}\]

where \(q_1\) lags of the first predictor, \(q_2\) lags of the second predictor, and so forth are included in the model.

In the following ADL(2,1) and ADL(2,2) models, we consider the U.S. term spread (TSpread) as an additional predictor for the GDP growth rate. \[

\begin{align*}

&\text{GDPGR}_t=\beta_0+\beta_1{\tt GDPGR}_{t-1}+\beta_2{\tt GDPGR}_{t-2}+\delta_1{\tt TSpread}_{t-1}+u_t,\\

&\text{GDPGR}_t=\beta_0+\beta_1{\tt GDPGR}_{t-1}+\beta_2{\tt GDPGR}_{t-2}+\delta_1{\tt TSpread}_{t-1}+\delta_2{\tt TSpread}_{t-2}+u_t,

\end{align*}

\tag{24.11}\] where TSpread is the term spread, measured as the difference between the 10-year Treasury bond rate (the long-term interest rate) and the 3-month Treasury bill rate (the short-term interest rate).

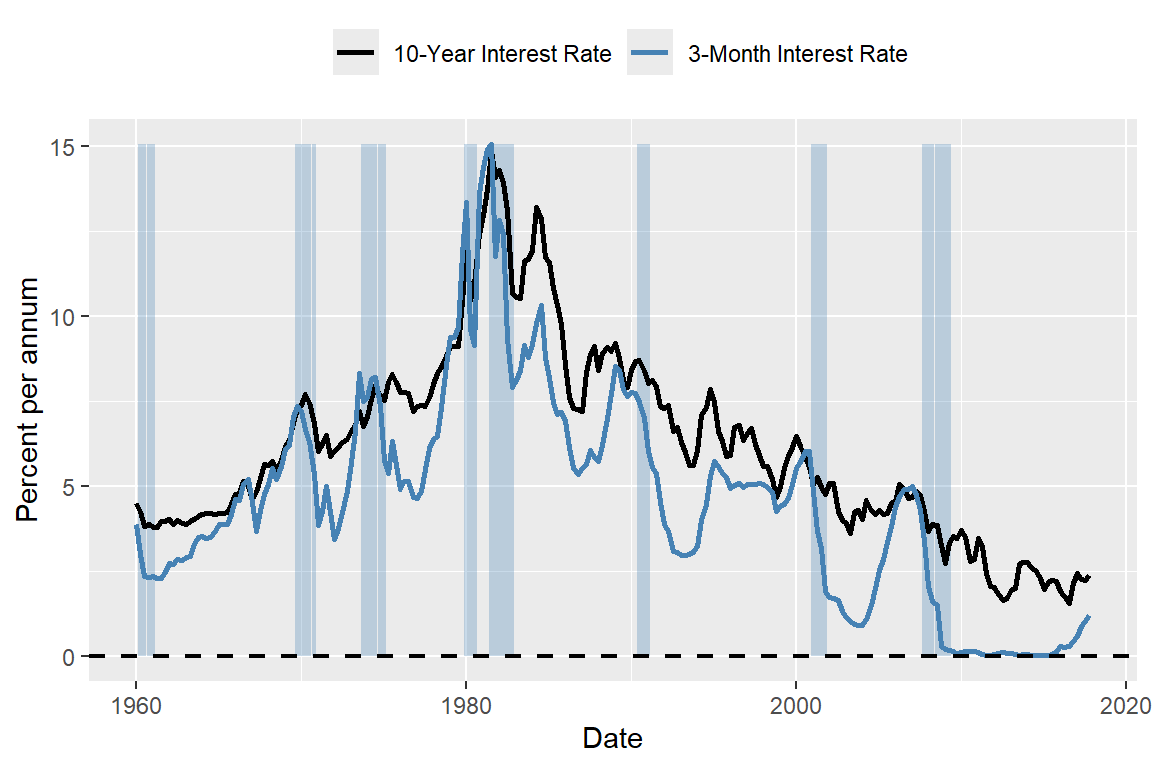

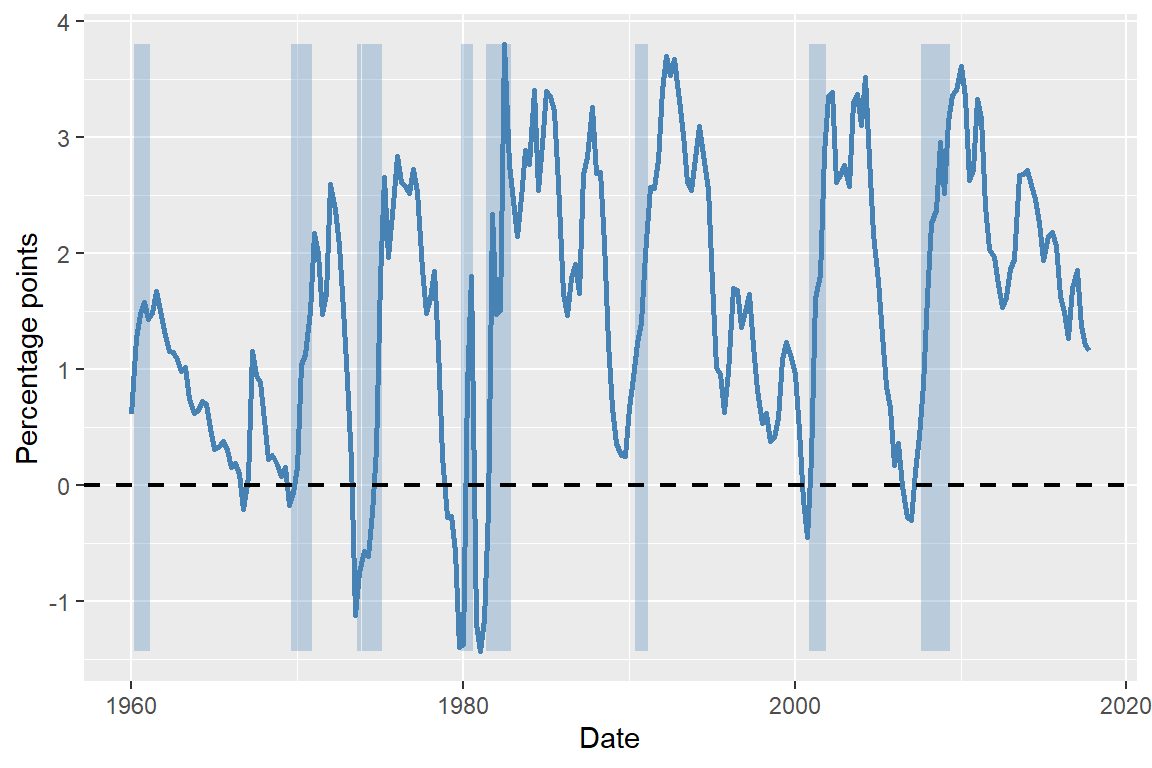

We use the dataset provided in the TermSpread.csv file to estimate these models. In Figure 24.7, we plot the U.S. long-term and short-term interest rates. The figure shows that both interest rates tend to move together over time. Figure 24.8 displays the term spread, which is generally positive and tends to decline before the recession periods. Therefore, the term spread may serve as a useful predictor for the GDP growth rate.

# Read the term spread data

dfTermSpread <- read.csv("data/TermSpread.csv", header = TRUE)

# Convert to quarterly time series starting in 1960 Q1

tsTermSpread <- ts(dfTermSpread, start = c(1960, 1), frequency = 4)# Long-term and short-term interest rates in the US

# Create quarterly date index

dfTermSpread$Date <- as.yearqtr(seq(

from = as.Date("1960-01-01"),

by = "quarter",

length.out = nrow(dfTermSpread)

))

# Convert to Date for ggplot

dfTermSpread$Date <- as.Date(dfTermSpread$Date)

# Create a ymax for shading (like fill_between)

ymax <- max(dfTermSpread$TB3MS, na.rm = TRUE)

# Plot

ggplot(dfTermSpread, aes(x = Date)) +

# Recession shading

geom_rect(

data = subset(dfTermSpread, RECESSION == 1),

aes(xmin = Date - 45, xmax = Date + 45, ymin = 0, ymax = ymax),

inherit.aes = FALSE,

fill = "steelblue",

alpha = 0.3

) +

# Lines

geom_line(aes(y = GS10, color = "10-Year Interest Rate"), linewidth = 1) +

geom_line(aes(y = TB3MS, color = "3-Month Interest Rate"), linewidth = 1) +

# Horizontal line at zero

geom_hline(yintercept = 0, linetype = "dashed", linewidth = 0.8) +

# Labels

labs(

x = "Date",

y = "Percent per annum",

color = ""

) +

# Colors

scale_color_manual(

values = c(

"10-Year Interest Rate" = "black",

"3-Month Interest Rate" = "steelblue"

)

) +

# legend position

theme(legend.position = "top")

# The term spread in the US

# Get min and max for shading

ymin <- min(dfTermSpread$RSPREAD, na.rm = TRUE)

ymax <- max(dfTermSpread$RSPREAD, na.rm = TRUE)

# Plot

ggplot(dfTermSpread, aes(x = Date)) +

# Recession shading

geom_rect(

data = subset(dfTermSpread, RECESSION == 1),

aes(xmin = Date - 45, xmax = Date + 45, ymin = ymin, ymax = ymax),

inherit.aes = FALSE,

fill = "steelblue",

alpha = 0.3

) +

# Term spread line

geom_line(aes(y = RSPREAD), color = "steelblue", linewidth = 1) +

# Horizontal zero line

geom_hline(

yintercept = 0,

linetype = "dashed",

linewidth = 0.8,

color = "black"

) +

# Labels

labs(

x = "Date",

y = "Percentage points"

)

To estimate the ADL(2,1) and ADL(2,2) models, we first merge the tsGrowth and tsTermSpread datasets to create a new dataset ADLdata. We keep only the relevant columns for the GDP growth rate and the term spread, and rename them as GDPGR and TSpread, respectively.

# Merge tsGrowth and tsTermSpread

ADLdata <- cbind(tsGrowth, tsTermSpread)

# Keep only the relevant columns and convert to data frame

ADLdata <- ADLdata[, c("tsGrowth.YGROWTH", "tsTermSpread.RSPREAD")]

colnames(ADLdata) <- c("GDPGR", "TSpread")The estimation results are presented in Table 24.8. The estimated models are \[ \begin{align} &\widehat{\text{GDPGR}}_t=0.95+0.27{\tt GDPGR}_{t-1}+0.19{\tt GDPGR}_{t-2}+0.42{\tt TSpread}_{t-1},\\ &\widehat{\text{GDPGR}}_t=0.95+0.24{\tt GDPGR}_{t-1}+0.18{\tt GDPGR}_{t-2}-0.13{\tt TSpread}_{t-1}+0.62{\tt TSpread}_{t-2}. \end{align} \]

# Model 1: ADL(2,1)

m1 <- dynlm(

GDPGR ~ L(GDPGR, 1) + L(GDPGR, 2) + L(TSpread, 1),

start = c(1962, 1),

end = c(2017, 3),

data = ADLdata

)

# Model 2: ADL(2,2)

m2 <- dynlm(

GDPGR ~ L(GDPGR, 1) + L(GDPGR, 2) + L(TSpread, 1) + L(TSpread, 2),

start = c(1962, 1),

end = c(2017, 3),

data = ADLdata

)# Estimation results using `modelsummary`

modelsummary(

models = list("ADL(2,1)" = m1, "ADL(2,2)" = m2),

vcov = "HC1",

stars = TRUE

)| ADL(2,1) | ADL(2,2) | |

|---|---|---|

| (Intercept) | 0.941* | 0.944* |

| (0.474) | (0.462) | |

| L(GDPGR, 1) | 0.268** | 0.246** |

| (0.080) | (0.076) | |

| L(GDPGR, 2) | 0.190* | 0.176* |

| (0.076) | (0.076) | |

| L(TSpread, 1) | 0.421* | -0.129 |

| (0.181) | (0.420) | |

| L(TSpread, 2) | 0.618 | |

| (0.428) | ||

| Num.Obs. | 223 | 223 |

| R2 | 0.170 | 0.180 |

| R2 Adj. | 0.158 | 0.165 |

| AIC | 1125.2 | 1124.6 |

| BIC | 1142.3 | 1145.0 |

| Log.Lik. | -557.624 | -556.285 |

| F | 12.425 | 9.514 |

| RMSE | 2.95 | 2.93 |

| Std.Errors | HC1 | HC1 |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | ||

The estimated coefficients in the ADL(2,2) model on the first and second lags of TSpread are statistically insignificant at the 5% level. The joint null hypothesis \(H_0:\delta_1=\delta_2=0\) can be tested using the F-statistic. The \(F\)-test result below shows that the coefficients on the term spread are jointly statistically significant.

hypotheses = c('L(TSpread, 1)=0', 'L(TSpread, 2)=0')

linearHypothesis(m2, hypotheses, white.adjust = "hc1")

Linear hypothesis test:

L(TSpread,0

L(TSpread, 2) = 0

Model 1: restricted model

Model 2: GDPGR ~ L(GDPGR, 1) + L(GDPGR, 2) + L(TSpread, 1) + L(TSpread,

2)

Note: Coefficient covariance matrix supplied.

Res.Df Df F Pr(>F)

1 220

2 218 2 3.9695 0.02026 *

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1Finally, we use the ADL(2,2) model to forecast the GDP growth rate in 2017:Q4. The forecasted GDP growth rate is \[ \begin{align*} \widehat{\text{GDPGR}}_{2017:Q4|2017:Q3} &=0.95+0.24{\tt GDPGR}_{2017:Q3}+0.18{\tt GDPGR}_{2017:Q2}\\ &-0.13{\tt TSpread}_{2017:Q3}+0.62{\tt TSpread}_{2017:Q2} \\ &=0.95 + 0.24 \times 3.11 + 0.18 \times 3.01 - 0.13 \times 1.21 + 0.62 \times 1.37\\ &= 0.95 + 0.7464 + 0.5418 - 0.1573 + 0.8494 = 2.93. \end{align*} \]

The actual GDP growth rate in 2017:Q4 was 2.5. Hence, the forecast error is \(2.5 - 2.93 = -0.43\) percentage points.

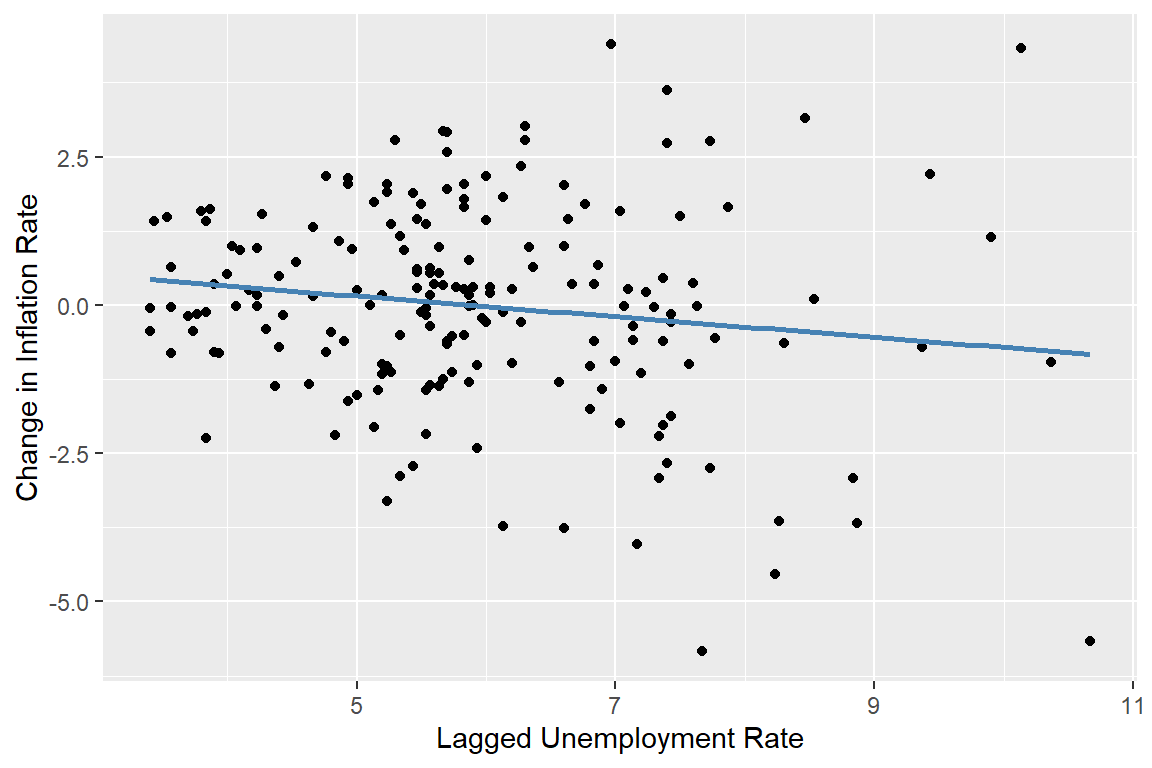

As an another example, we consider the inflation rate in the tsInflation file. According to the well-known Phillips curve, if unemployment is above its natural rate, then the inflation rate is predicted to decrease. Hence, the change in inflation is negatively related to the lagged unemployment rate. Accordingly, Figure 24.9 shows that there is a negative relationship between the change in inflation and the lagged unemployment rate.

# The relationship between the change in inflation and the lagged unemployment rate

# OLS regression of the change in inflation on the lagged unemployment rate

m3 <- dynlm(Delta_Inf ~ L(u_rate), start = c(1962, 1), end = c(2004, 4), data = tsInflation)

# Scatter plot

df_plot <- data.frame(

L_u_rate = stats::lag(tsInflation[, "u_rate"]),

Delta_Inf = tsInflation[, "Delta_Inf"]

)

ggplot(df_plot, aes(x = L_u_rate, y = Delta_Inf)) +

geom_point() +

geom_smooth(method = "lm", se = FALSE, color = "steelblue") +

labs(x = "Lagged Unemployment Rate", y = "Change in Inflation Rate")

We consider the following ARDL(4,4) model for the change in inflation rate: \[ \begin{align*} \Delta \text{Inf}_t &= \beta_0 + \beta_1\Delta \text{Inf}_{t-1} + \beta_2\Delta \text{Inf}_{t-2} + \beta_3\Delta \text{Inf}_{t-3} + \beta_4\Delta \text{Inf}_{t-4}\\ &\quad + \delta_1\text{Urate}_{t-1} + \delta_2\text{Urate}_{t-2} + \delta_3\text{Urate}_{t-3} + \delta_4\text{Urate}_{t-4} + u_t, \end{align*} \tag{24.12}\] where \(\text{Urate}_t\) is the unemployment rate at time \(t\). The ARDL(4,4) model includes four lags of the change in inflation and four lags of the unemployment rate as predictors. The estimation results are presented in Table 24.9.

# Model 3: ARDL(4,4)

m3 <- dynlm(

Delta_Inf ~ L(Delta_Inf, 1) + L(Delta_Inf, 2) + L(Delta_Inf, 3) + L(Delta_Inf, 4) +

L(u_rate, 1) + L(u_rate, 2) + L(u_rate, 3) + L(u_rate, 4),

start = c(1962, 1),

end = c(2004, 4),

data = tsInflation

)# Estimation results for the ARDL(4,4) model

modelsummary(

models = list("ARDL(4,4)" = m3),

vcov = "HC1",

stars = TRUE

)| ARDL(4,4) | |

|---|---|

| (Intercept) | 1.304** |

| (0.452) | |

| L(Delta_Inf, 1) | -0.420*** |

| (0.089) | |

| L(Delta_Inf, 2) | -0.367*** |

| (0.094) | |

| L(Delta_Inf, 3) | 0.057 |

| (0.085) | |

| L(Delta_Inf, 4) | -0.036 |

| (0.084) | |

| L(u_rate, 1) | -2.636*** |

| (0.475) | |

| L(u_rate, 2) | 3.043*** |

| (0.880) | |

| L(u_rate, 3) | -0.377 |

| (0.912) | |

| L(u_rate, 4) | -0.248 |

| (0.461) | |

| Num.Obs. | 172 |

| R2 | 0.366 |

| R2 Adj. | 0.335 |

| AIC | 612.8 |

| BIC | 644.3 |

| Log.Lik. | -296.397 |

| F | 8.953 |

| RMSE | 1.36 |

| Std.Errors | HC1 |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | |

According to the estimation results in Table 24.9, the fit of the ARDL(4,4) model is significantly better than the AR(4) model in Table 24.6. The adjusted R-squared increases from 0.185 in the AR(4) model to 0.335 in the ARDL(4,4) model. Furthermore, the F-statistic for the joint null hypothesis \(H_0:\delta_1=\ldots=\delta_4=0\) computed below indicates that the additional time lag terms for the unemployment rate are jointly statistically significant at the 5% level.

# F-test for the joint significance of the lagged unemployment rates

hypotheses = c('L(u_rate, 1)=0', 'L(u_rate, 2)=0', 'L(u_rate, 3)=0', 'L(u_rate, 4)=0')

linearHypothesis(m3, hypotheses, white.adjust = "hc1")

Linear hypothesis test:

L(u_rate,0

L(u_rate, 2) = 0

L(u_rate, 3) = 0

L(u_rate, 4) = 0

Model 1: restricted model

Model 2: Delta_Inf ~ L(Delta_Inf, 1) + L(Delta_Inf, 2) + L(Delta_Inf,

3) + L(Delta_Inf, 4) + L(u_rate, 1) + L(u_rate, 2) + L(u_rate,

3) + L(u_rate, 4)

Note: Coefficient covariance matrix supplied.

Res.Df Df F Pr(>F)

1 167

2 163 4 8.4433 3.242e-06 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 124.9 The least squares assumptions for forecasting with multiple predictors

In this section, we state the assumptions that we need for the estimation of the AR and ADL models by the OLS estimator. We consider the following general ADL model, which includes \(m\) additional predictors. In this model, \(q_1\) lags of the first predictor, \(q_2\) lags of the second predictor, and so forth are incorporated: \[ \begin{align*} Y_t &= \beta_0 + \beta_1Y_{t-1} + \beta_2Y_{t-2} +\dots+ \beta_pY_{t-p}\\ &\quad +\delta_{11}X_{1,t-1} + \delta_{12}X_{1,t-2} + \dots +\delta_{1q_1}X_{1,t-q_1} + \dots \\ &\quad + \delta_{m1}X_{m,t-1} + \delta_{m2}X_{m,t-2} + \dots +\delta_{mq_m}X_{m,t-q_m}+u_t. \end{align*} \tag{24.13}\]

The first assumption indicates that the error term \(u_t\) has zero conditional mean given the lagged values of the dependent variable and the predictors. This assumption has two implications: (i) the error terms \(u_t\)’s are serially uncorrelated, and (ii) the lag lengths \(p\), \(q_1\), \(q_2\), , \(q_m\) are correctly specified, such that the coefficients of lag terms beyond \(p\), \(q_1\), \(q_2\), , \(q_m\) are zero. The first part of the second assumption indicates that the distribution of data does not change over time. This part can be considered as the time series version of the i.i.d asumption we used for the cross-sectional data. The second part indicates that \(\left(Y_t, X_{1t},\dots, X_{mt}\right)\) and \(\left(Y_{t-k}, X_{1,t-k},\dots,X_{m,t-k}\right)\) become independent as \(k\) gets larger. This assumption is sometimes referred to as the weak dependence assumption. The third and fourth assumptions are same as the ones we used for the multiple linear regression model.

Theorem 24.1 (Properties of OLS estimator using time series data) Under the least squares assumptions, the large-sample properties of the OLS estimator are similar to those in the case of cross-sectional data. Namely, the OLS estimator is consistent and has an asymptotic normal distribution when \(T\) is large.

24.10 Lag order selection

How should we choose the number of lags \(p\) in an AR(p) model? One approach to determining the number of lags is to use sequential downward \(t\) or \(F\) tests. However, this method may select a large number of lags. Alternatively, we can use information criteria to select the lag order. Information criteria balance bias (too few lags) against variance (too many lags).

Two widely used information criteria are the Bayesian information criterion (BIC) and the Akaike information criterion (AIC). For an AR(p) model, the BIC and AIC are defined as \[ \begin{align*} \text{BIC}(p) &= \ln\frac{SSR(p)}{T} + (p+1)\frac{\ln T}{T}, \\ \text{AIC}(p) &= \ln\frac{SSR(p)}{T} + (p+1)\frac{2}{T}, \end{align*} \tag{24.14}\] where \(SSR(p)\) is the sum of squared residuals from the AR(p) model. The estimator of \(p\) is the value that minimizes the BIC or AIC. That is, if \(\hat{p}\) is the estimator of \(p\), then it is defined as \[ \hat{p} = \arg\min_{p} \text{BIC}(p) \quad \text{or} \quad \hat{p} = \arg\min_{p} \text{AIC}(p). \tag{24.15}\]

Note that the first term in both BIC and AIC is always decreasing in \(p\) (larger \(p\), better fit). The second term is always increasing in \(p\). The second term is a penalty for using more parameters, and thus increasing the forecast variance. The penalty term is smaller for AIC than BIC, hence AIC estimates more lags (larger \(p\)) than the BIC. This may be desirable if we believe that longer lags are important for the dynamics of the process.

Theorem 24.2 The BIC estimator of \(p\) is a consistent estimator in the sense that \(P(\hat{p}=p)\to 1\), whereas the AIC estimator is not consistent.

Proof (Proof of Theorem 24.2). For simplicity, assume that true \(p\) is \(1\), then we need to show that (i) \(P(\hat{p}=0)\to 0\) and (ii) \(P(\hat{p}=2)\to 0\). To choose \(\hat{p}=0\), it must be the case that \(\text{BIC}(0)-\text{BIC}(1)<0\). Consider \(\text{BIC}(0)-\text{BIC}(1)\): \[ \text{BIC}(0)-\text{BIC}(1)=\ln(SSR(0)/T)-\ln(SSR(1)/T) -\ln(T)/T. \]

It follows that \(SSR(0)/T=((T-1)/T)s_Y^2\to\sigma^2_Y\), where \(\sigma^2_Y\) is the variance of the process \(Y\) and \(s_Y^2\) is its sample counterpart. Similarly, \(SSR(1)/T=((T-2)/T)s^2_{\hat{u}}\to\sigma^2_{u}\), where \(\sigma^2_u\) is the variance of the error term. Thus, \[ \text{BIC}(0)-\text{BIC}(1)\to\ln(\sigma^2_Y)-\ln(\sigma^2_u), \] because \(\ln(T)/T\to 0\). Since \(\sigma^2_Y>\sigma^2_u\), it follows that \(\text{BIC}(0)-\text{BIC}(1)>0\). This implies that \(P(\hat{p}=0)\to 0\).

Similarly, to choose \(\hat{p}=2\), it must be the case that \(\text{BIC}(2)-\text{BIC}(1)<0\). Consider \(T[\text{BIC}(2)-\text{BIC}(1)]\): \[ \begin{align*} &T[\text{BIC}(2)-\text{BIC}(1)]= T[\ln(SSR(2)/T)-\ln(SSR(1)/T)] +\ln(T)\\ &=T\ln(SSR(2)/SSR(1))+\ln(T)=-T\ln(1+F/(T-2))+\ln(T), \end{align*} \] where \(F=(SSR(1)-SSR(2))/(SSR(2)/(T-2))\) is the homoskedasticity-only \(F\)-statistic for testing \(H_0:\beta_2=0\) in the AR(2) model. Then, \[ \begin{align*} P(\text{BIC}(2)-\text{BIC}(1)<0)&= P(T[\text{BIC}(2)-\text{BIC}(1)]<0)\\ &=P([-T\ln(1+F/(T-2))+\ln(T)]<0)\\ \end{align*} \] Since \(T\ln(1+F/(T-2))-F\approx TF/(T-2)-F\xrightarrow{p}0\), we have \[ \begin{align*} P(\text{BIC}(2)-\text{BIC}(1)<0)&=P(T\ln(1+F/(T-2))>\ln(T))\to P(F>\ln(T))\to 0. \end{align*} \] Thus, \(P(\hat{p}=2)\to0\).

For the general case where \(0\leq p\leq p_{max}\), we can use the same argument to show that \(P(\hat{p}<p)\to0\) and \(P(\hat{p}>p)\to0\). Therefore, the BIC estimator is consistent.

In the case of AIC, we can use the same argument to show that \(P(\hat{p}=0)\to 0\). However, to show that \(P(\hat{p}=2)\to 0\), we need to show that \(P(\text{AIC}(2)-\text{AIC}(1)<0)\to 0\). Using the same argument as above, we have \[ \begin{align*} P(\text{AIC}(2)-\text{AIC}(1)<0)&=P(F>2)>0. \end{align*} \]

Therefore, the AIC estimator is not consistent. For example, if the error terms are homoskedastic, then \[ \begin{align*} P(\text{AIC}(2)-\text{AIC}(1)<0)&=P(F>2)\to P(\chi^2_1>2)=0.16. \end{align*} \]

In general, we have \(P(\hat{p}<p)\to0\), but \(P(\hat{p}>p)\not\to0\). Thus, the AIC can overestimate the number of lags even in large samples.

As in an autoregression, the BIC and the AIC can also be used to estimate the number of lags and variables in the time series regression model with multiple predictors. If the regression model has \(k\) coefficients (including the intercept), then \[ \begin{align} &\text{BIC}(k)=\ln\left(SSR(k)/T\right)+k\ln(T)/T,\\ &\text{AIC}(k)=\ln\left(SSR(k)/T\right)+2k/T. \end{align} \]

For each value of \(k\), the BIC (or AIC) can be computed, and the model with the lowest value of the BIC (or AIC) is preferred based on the information criterion.

24.11 Estimation of the MSFE and forecast intervals

Stock and Watson (2020) consider three estimators for estimating the MSFE. These estimators are:

- The standard error of the regression (SER) estimator,

- The final prediction error (FPE) estimator, and

- The pseudo out-of-sample (POOS) forecasting estimator.

The first two estimators are derived from the expression for the MSFE and rely on the stationarity assumption. Under stationarity, the MSFE for an AR(p) model can be expressed as: \[ \begin{align*} \text{MSFE}&=\E\left[\left(Y_{T+1}-\widehat{Y}_{T+1|T}\right)^2\right]\\ &=\E\big[(\beta_0+\beta_1 Y_{T}+\beta_2Y_{T-1}+\ldots+\beta_pY_{T-p+1}+u_{T+1}\\ &\quad- (\hat{\beta}_0+\hat{\beta}_1 Y_{T}+\hat{\beta}_2Y_{T-1}+\ldots+\hat{\beta}_pY_{T-p+1}))^2\big]\\ &=\E(u^2_{T+1})+\E\left(\left((\hat{\beta}_0-\beta_0)+(\hat{\beta}_1-\beta_1)Y_T+\ldots+(\beta_p-\hat{\beta}_p)Y_{T-p+1}\right)^2\right). \end{align*} \tag{24.16}\]

Since the variance of the OLS estimator is proportional to \(1/T\), the second term is proportional to \(1/T\). Consequently, if the number of observations \(T\) is large relative to the number of autoregressive lags \(p\), then the contribution of the second term is small relative to the first term. This leads to the approximation MSFE\(\approx\sigma^2_u\), where \(\sigma^2_u\) is the variance of \(u_t\). Based on this simplification, the SER estimator of the MSFE is formulated as \[ \begin{align} \widehat{MSFE}_{SER}=s^2_{\hat{u}},\quad\text{where}\quad s^2_{\hat{u}}=\frac{SSR}{T-p-1}, \end{align} \tag{24.17}\] and \(SSR\) is the sum of squared residuals from the autoregression. Note that this method assumes stationarity and ignores estimation error stemming from the estimation of the forecast rule.

The FPE method incorporates both terms in the MSFE formula under the additional assumption that the error are homoskedastic. Under homoskedasticity, it can be shown that (see Appendix B) \[ \E\left(\left((\hat{\beta}_0-\beta_0)+(\hat{\beta}_1-\beta_1)Y_T+\ldots+(\beta_p-\hat{\beta}_p)Y_{T-p+1}\right)^2\right)\approx \sigma^2_u((p+1)/T). \]

Then, the MSFE can be expressed as \(\text{MSFE}=\sigma^2_u+\sigma^2_u((p+1)/T)=\sigma^2_u(T+p+1)/T\). Then, the FPE estimator is given by \[ \begin{align} \widehat{MSFE}_{FPE}=\left(\frac{T+p+1}{T}\right)s^2_{\hat{u}}=\left(\frac{T+p+1}{T-p-1}\right)\frac{SSR}{T}. \end{align} \tag{24.18}\]

The third method, the pseudo out-of-sample (POOS) forecasting, does not require the stationarity assumption. In the following callout, we show how the POOS method is implemented.

Once we have an estimate of the MSFE, we can construct a forecast interval for \(Y_{T+1}\). Assuming that \(u_{T+1}\) is normally distributed, the 95% forecast interval is given by \[ \widehat{Y}_{T|T-1}\pm 1.96\times \widehat{\text{RMSFE}}. \]

The 95% forecast interval is not a confidence interval for \(Y_{T+1}\). The key distinction is that \(Y_{T+1}\) is a random variable, not a fixed parameter. Nevertheless, the forecast interval is widely used in practice as a measure of forecast uncertainty and can still provide a reasonable approximation, even when \(u_{T+1}\) is not normally distributed.

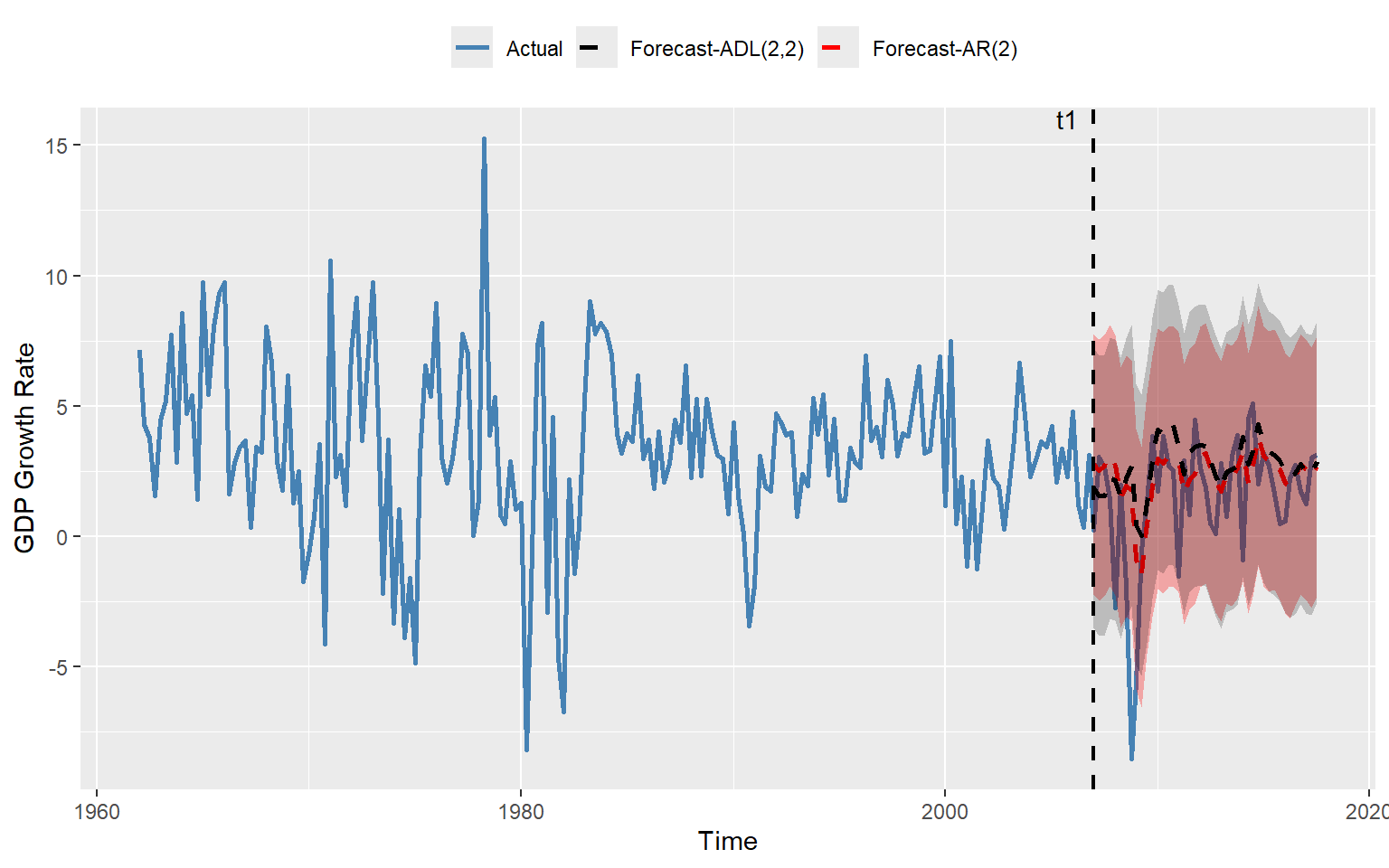

As an example, we compare the forecasting performance of the AR(2) model in Equation 24.8 with that of the ADL(2,2) model in Equation 24.11. In the following code chunk, we estimate both models and compute the SER and FPE estimates of the RMSFE. Using the POOS method, we forecast the GDP growth rate over the period 2007:Q1 to 2017:Q3 with both models.

# We use the ADLdata

mydata <- ADLdata

# Keep relevant sample

mydata <- window(mydata, start = c(1962, 1), end = c(2017, 3))

# Remove NA

mydata <- na.omit(mydata)

# AR(2) model

ar_result <- dynlm(GDPGR ~ L(GDPGR, 1) + L(GDPGR, 2), data = mydata)

# ADL(2,2) model

adl_result <- dynlm(

GDPGR ~ L(GDPGR, 1) + L(GDPGR, 2) + L(TSpread, 1) + L(TSpread, 2),

data = mydata

)

# RMSFE (SER and FPE)

n_ar <- length(residuals(ar_result))

k_ar <- 2 # number of regressors (lags)

n_adl <- length(residuals(adl_result))

k_adl <- 4

RMSFE_SER_AR <- sqrt(sum(residuals(ar_result)^2) / (n_ar - k_ar - 1))

RMSFE_FPE_AR <- sqrt(

((n_ar + k_ar + 1) / (n_ar - k_ar - 1)) * sum(residuals(ar_result)^2) / n_ar

)

RMSFE_SER_ADL <- sqrt(sum(residuals(adl_result)^2) / (n_adl - k_adl - 1))

RMSFE_FPE_ADL <- sqrt(

((n_adl + k_adl + 1) / (n_adl - k_adl - 1)) * sum(residuals(adl_result)^2) / n_adl

)

# Define evaluation sample

time_index <- time(mydata)

t1 <- which(time_index == 2007.0) # 2007 Q1

T <- which(time_index == 2017.5) # 2017 Q3

# Preallocate

savers_ar2 <- matrix(NA, nrow = T - t1 + 1, ncol = 2)

savers_adl <- matrix(NA, nrow = T - t1 + 1, ncol = 2)

# Recursive (rolling) forecasts

for (t in 1:(T - t1 + 1)) {

idx0 <- t1 + t - 1 # forecast target

idx1 <- idx0 - 1 # t-1

idx2 <- idx0 - 2 # t-2

# Expanding window

data_slice <- window(mydata, end = time_index[idx1])

# AR(2)

ar_model <- dynlm(GDPGR ~ L(GDPGR, 1) + L(GDPGR, 2), data = data_slice)

b <- coef(ar_model)

forecast_ar <- b[1] +

b[2] * mydata[idx1, "GDPGR"] +

b[3] * mydata[idx2, "GDPGR"]

savers_ar2[t, 1] <- mydata[idx0, "GDPGR"] - forecast_ar

savers_ar2[t, 2] <- forecast_ar

# ADL(2,2)

adl_model <- dynlm(

GDPGR ~ L(GDPGR, 1) + L(GDPGR, 2) + L(TSpread, 1) + L(TSpread, 2),

data = data_slice

)

b2 <- coef(adl_model)

forecast_adl <- b2[1] +

b2[2] * mydata[idx1, "GDPGR"] +

b2[3] * mydata[idx2, "GDPGR"] +

b2[4] * mydata[idx1, "TSpread"] +

b2[5] * mydata[idx2, "TSpread"]

savers_adl[t, 1] <- mydata[idx0, "GDPGR"] - forecast_adl

savers_adl[t, 2] <- forecast_adl

}

# RMSFE (Pseudo Out-of-Sample)

RMSFE_POOS_AR <- sqrt(mean(savers_ar2[, 1]^2, na.rm = TRUE))

RMSFE_POOS_ADL <- sqrt(mean(savers_adl[, 1]^2, na.rm = TRUE))The estimated RMSFE values are presented in Table 24.10. The results show that, for both models, the FPE estimate is slightly larger than the SER estimate, consistent with the formulas in Equation 24.17 and Equation 24.18. The POOS method yields the smallest RMSFE estimates for both models. Since the POOS estimate is slightly lower for the AR(2) model, we conclude that the AR(2) model is preferred over the ADL(2,2) model for forecasting the GDP growth rate.

# Collect results in a data frame

results <- data.frame(

Model = c("AR(2)", "ADL(2,2)"),

RMSFE_SER = c(RMSFE_SER_AR, RMSFE_SER_ADL),

RMSFE_FPE = c(RMSFE_FPE_AR, RMSFE_FPE_ADL),

RMSFE_POOS = c(RMSFE_POOS_AR, RMSFE_POOS_ADL)

)

kable(results, digits = 3)| Model | RMSFE_SER | RMSFE_FPE | RMSFE_POOS |

|---|---|---|---|

| AR(2) | 3.023 | 3.043 | 2.551 |

| ADL(2,2) | 2.975 | 3.008 | 2.749 |

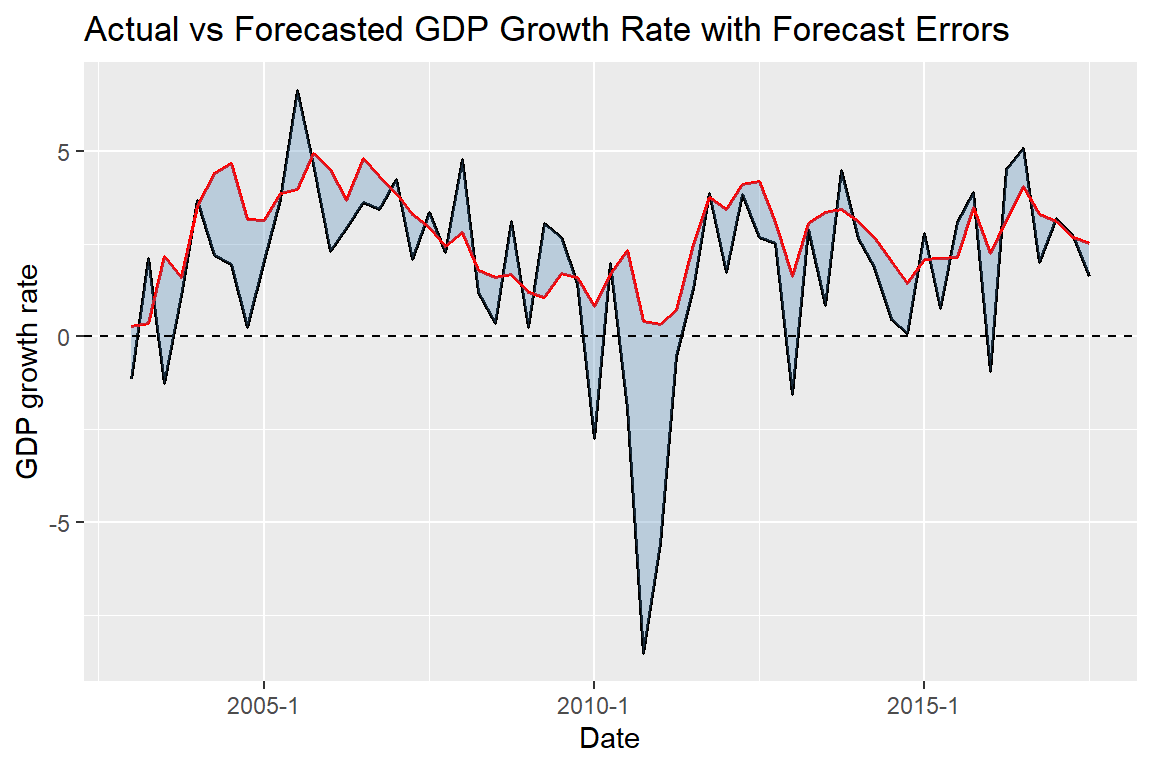

In Figure 24.10, we plot the one-step-ahead forecasted values obtained using the POOS method from both models. We also include the 95% forecast intervals. Note that the forecast intervals include the true values, except during the Great Recession of 2007.

mydata <- ADLdata

# Keep relevant variables and sample

mydata <- window(mydata, start = c(1962, 1), end = c(2017, 3))

# Time index from ts object

time_index <- time(mydata)

# Convert to Date

Date <- as.Date(as.yearqtr(time_index))

# Define indices

t1 <- which(time_index == 2007.0)

T <- which(time_index == 2017.5)

# Create forecast vectors aligned with full sample

forecasts_ar <- rep(NA, T)

forecasts_adl <- rep(NA, T)

# Fill forecasts

forecasts_ar[t1:T] <- savers_ar2[, 2]

forecasts_adl[t1:T] <- savers_adl[, 2]

# Forecast intervals

forecast_interval_ar <- 1.96 * RMSFE_POOS_AR

forecast_interval_adl <- 1.96 * RMSFE_POOS_ADL

# Build plotting data frame

df_plot <- data.frame(

Date = Date,

GDPGR = as.numeric(mydata[, "GDPGR"]),

AR = forecasts_ar,

ADL = forecasts_adl

)

# Plot

ggplot(df_plot, aes(x = Date)) +

# Actual series

geom_line(aes(y = GDPGR, color = "Actual"), linewidth = 1) +

# AR(2) forecast + interval

geom_line(aes(y = AR, color = "Forecast-AR(2)"),

linetype = "dashed", linewidth = 1) +

geom_ribbon(aes(ymin = AR - forecast_interval_ar,

ymax = AR + forecast_interval_ar),

fill = "red", alpha = 0.3, na.rm = TRUE) +

# ADL(2,2) forecast + interval

geom_line(aes(y = ADL, color = "Forecast-ADL(2,2)"),

linetype = "dashed", linewidth = 1) +

geom_ribbon(aes(ymin = ADL - forecast_interval_adl,

ymax = ADL + forecast_interval_adl),

fill = "black", alpha = 0.2, na.rm = TRUE) +

# Vertical line at t1

geom_vline(xintercept = Date[t1],

linetype = "dashed", linewidth = 0.8) +

# Annotation for t1

annotate("text",

x = Date[t1-5],

y = max(df_plot$GDPGR, na.rm = TRUE),

label = "t1",

vjust = -0.5) +

# Labels

labs(

x = "Time",

y = "GDP Growth Rate",

color = ""

) +

# Colors

scale_color_manual(values = c(

"Actual" = "steelblue",

"Forecast-AR(2)" = "red",

"Forecast-ADL(2,2)" = "black"

)) +

theme(legend.position = "top")

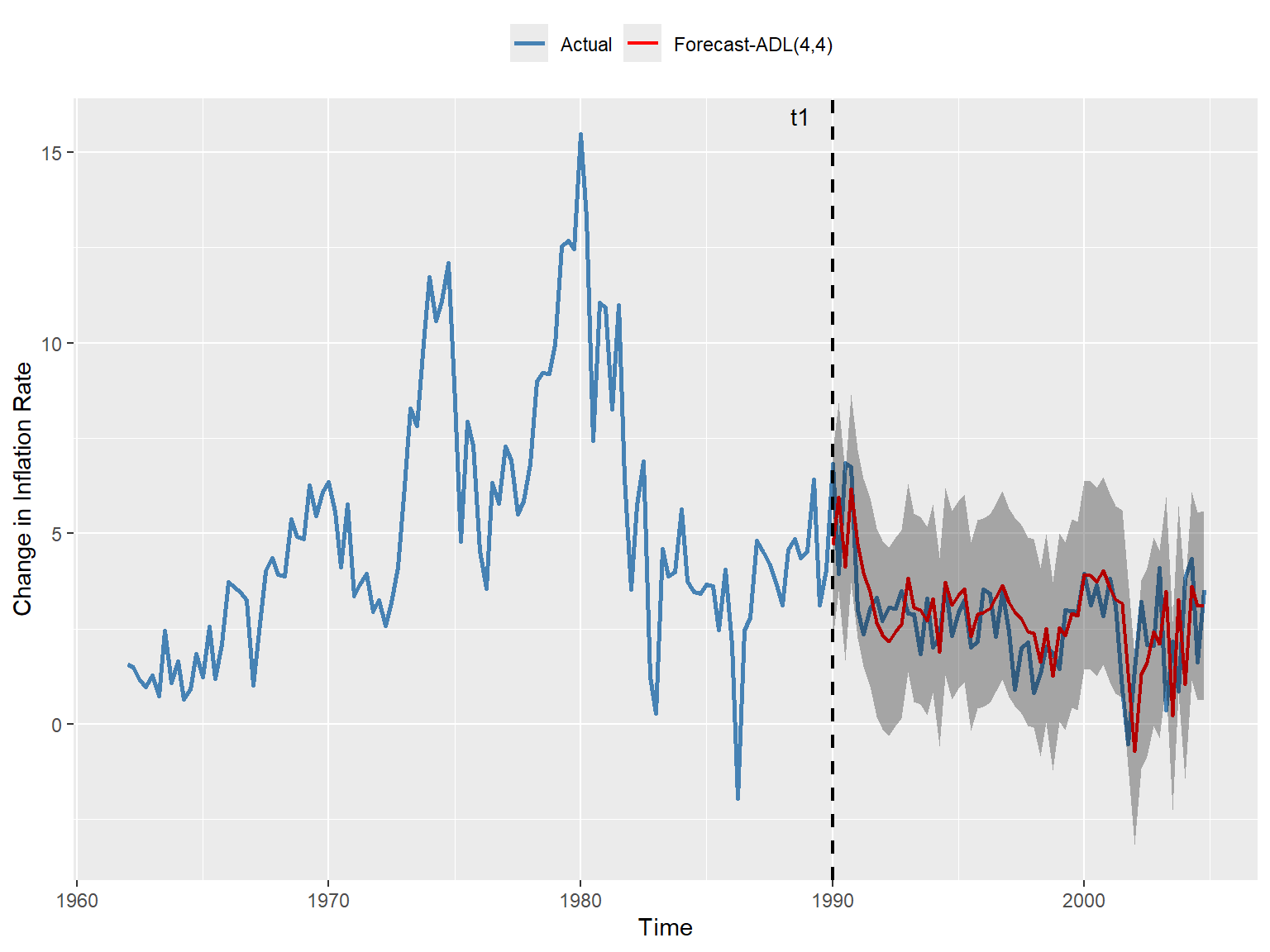

In the next example, we consider the ADL(4,4) model in Equation 24.12 for the change in inflation. We set \(t_1\) to 1990:Q1 and \(T\) to 2004:Q4. The estimated values of the RMSFE using the SER, FPE, and POOS methods are presented in Table 24.9. The results show that the FPE estimate is slightly larger than the SER estimate, which is consistent with the formulas given in Equation 24.17 and Equation 24.18. The POOS method provides the smallest estimate of the RMSFE.

# Data preparation

mydata <- tsInflation

mydata <- window(mydata, start = c(1962, 1), end = c(2004, 4))

mydata <- na.omit(mydata)

# ARDL(4,4) model

adl_result <- dynlm(

Delta_Inf ~ L(Delta_Inf, 1) + L(Delta_Inf, 2) + L(Delta_Inf, 3) + L(Delta_Inf, 4) +

L(u_rate, 1) + L(u_rate, 2) + L(u_rate, 3) + L(u_rate, 4),

data = mydata

)

# RMSFE (SER and FPE)

n_adl <- length(residuals(adl_result))

k_adl <- 8 # number of regressors (lags)

RMSFE_SER_ADL <- sqrt(sum(residuals(adl_result)^2)/(n_adl - k_adl - 1))

RMSFE_FPE_ADL <- sqrt(

((n_adl + k_adl + 1) / (n_adl - k_adl - 1)) * sum(residuals(adl_result)^2 / n_adl)

)

# Define evaluation sample

time_index <- time(mydata)

t1 <- which(time_index == 1990.0) # 1990 Q1

T <- which(time_index == 2004.75) # 2004 Q4

# Preallocate

savers_adl <- matrix(NA, nrow = T - t1 + 1, ncol = 2)

# Recursive (rolling) forecasts

for (t in 1:(T - t1 + 1)) {

idx0 <- t1 + t - 1 # forecast target

idx1 <- idx0 - 1 # t-1

idx2 <- idx0 - 2 # t-2

idx3 <- idx0 - 3 # t-3

idx4 <- idx0 - 4 # t-4

# Expanding window

data_slice <- window(mydata, end = time_index[idx1])

# ADL(4,4)

adl_model <- dynlm(

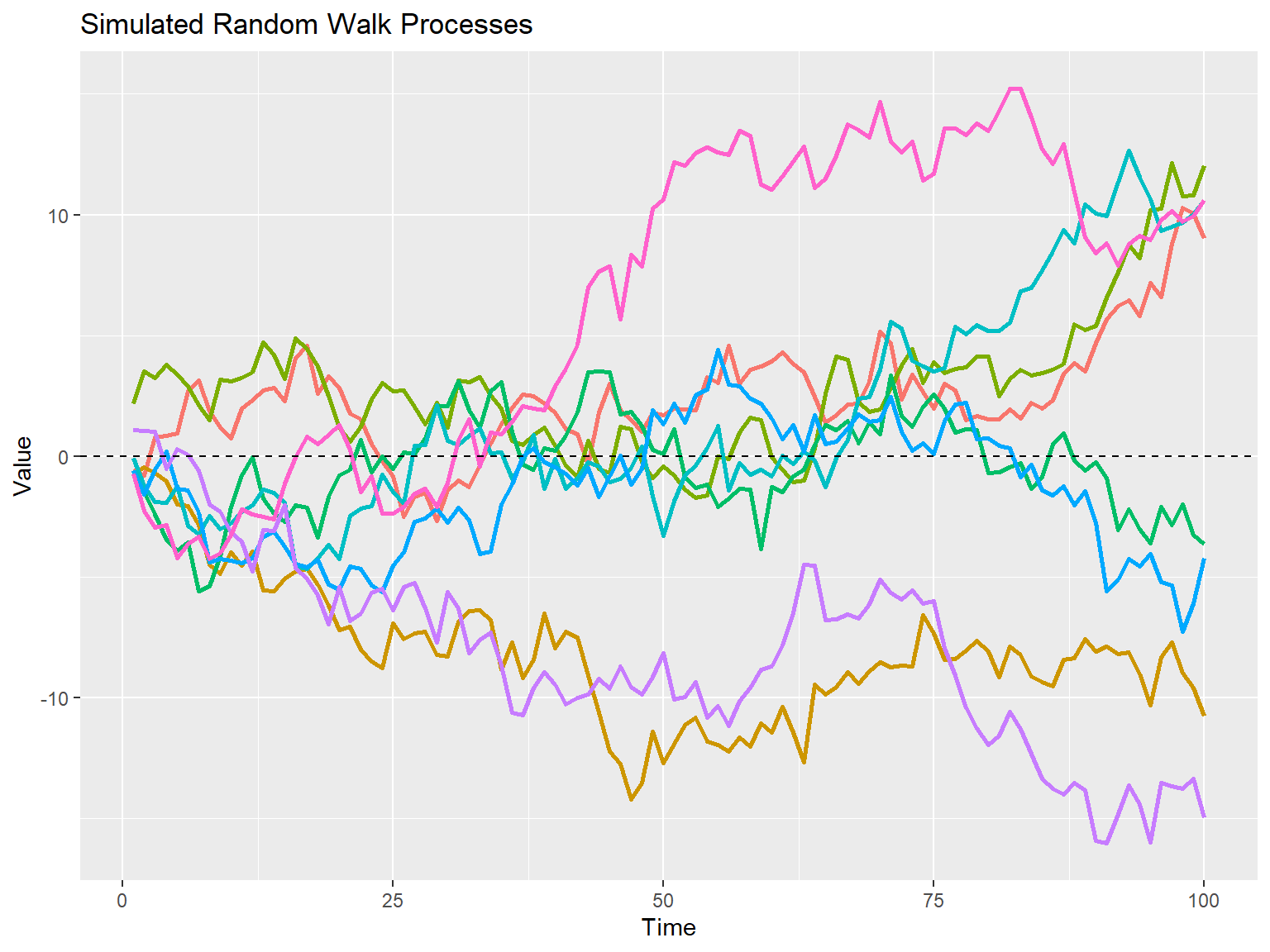

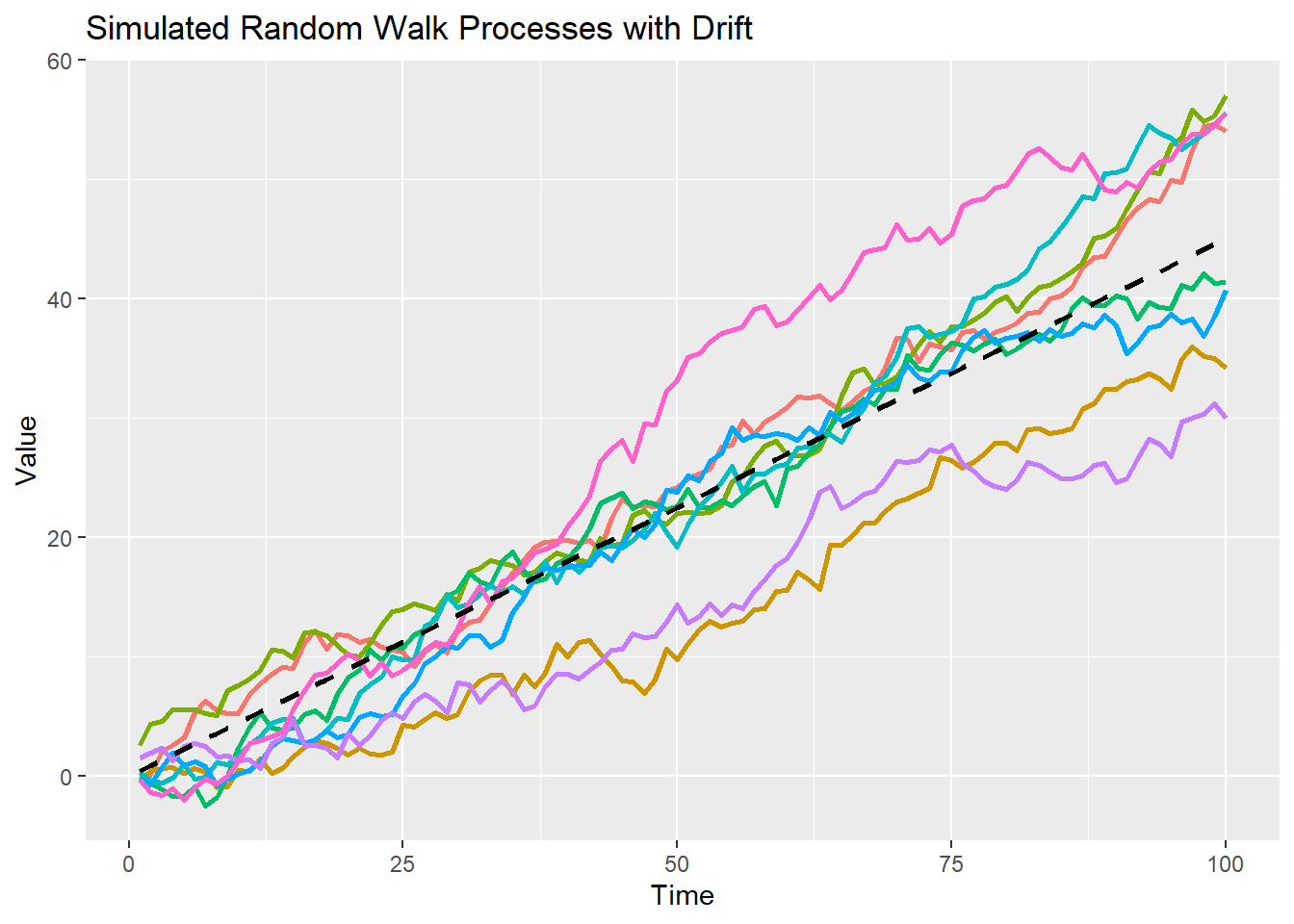

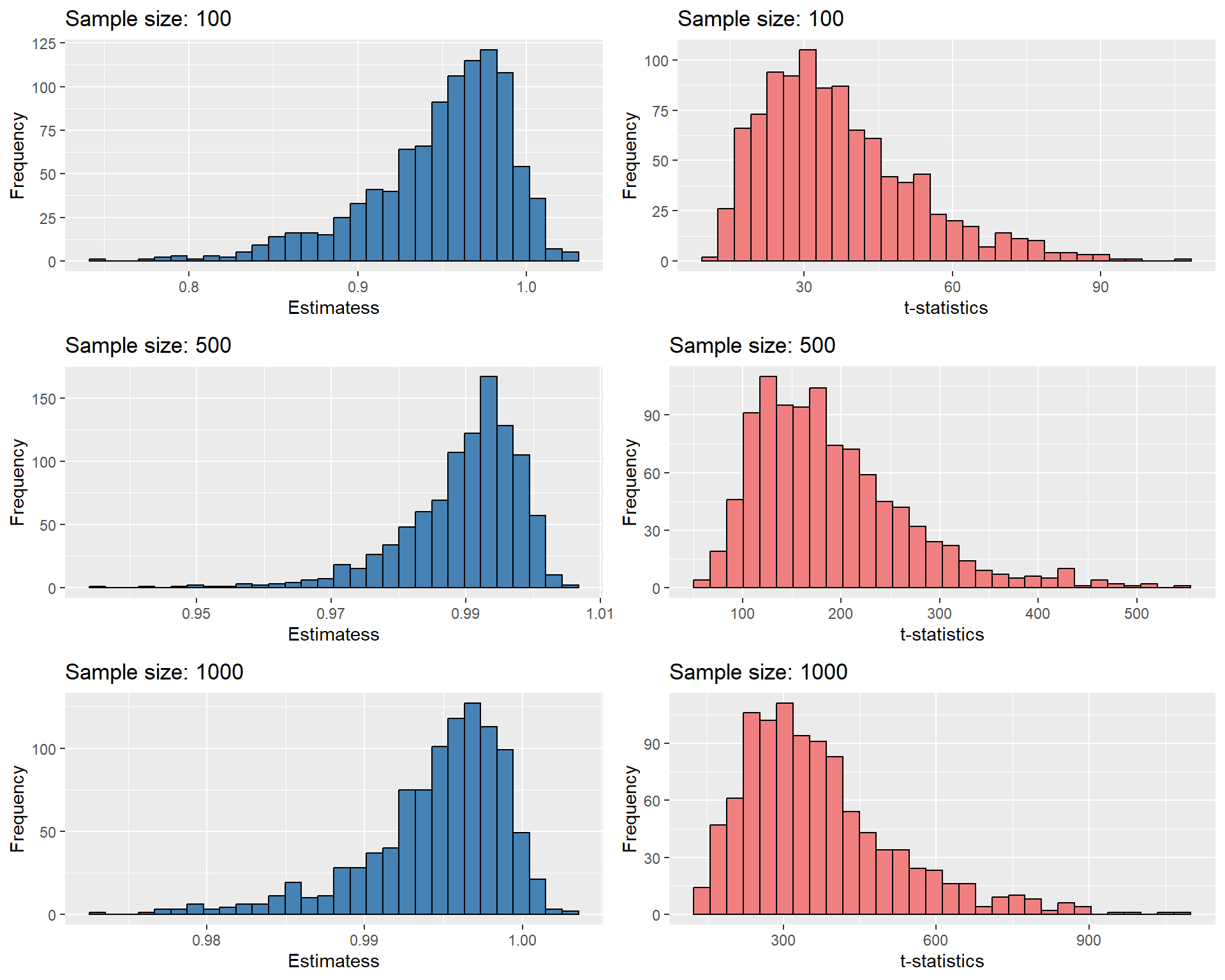

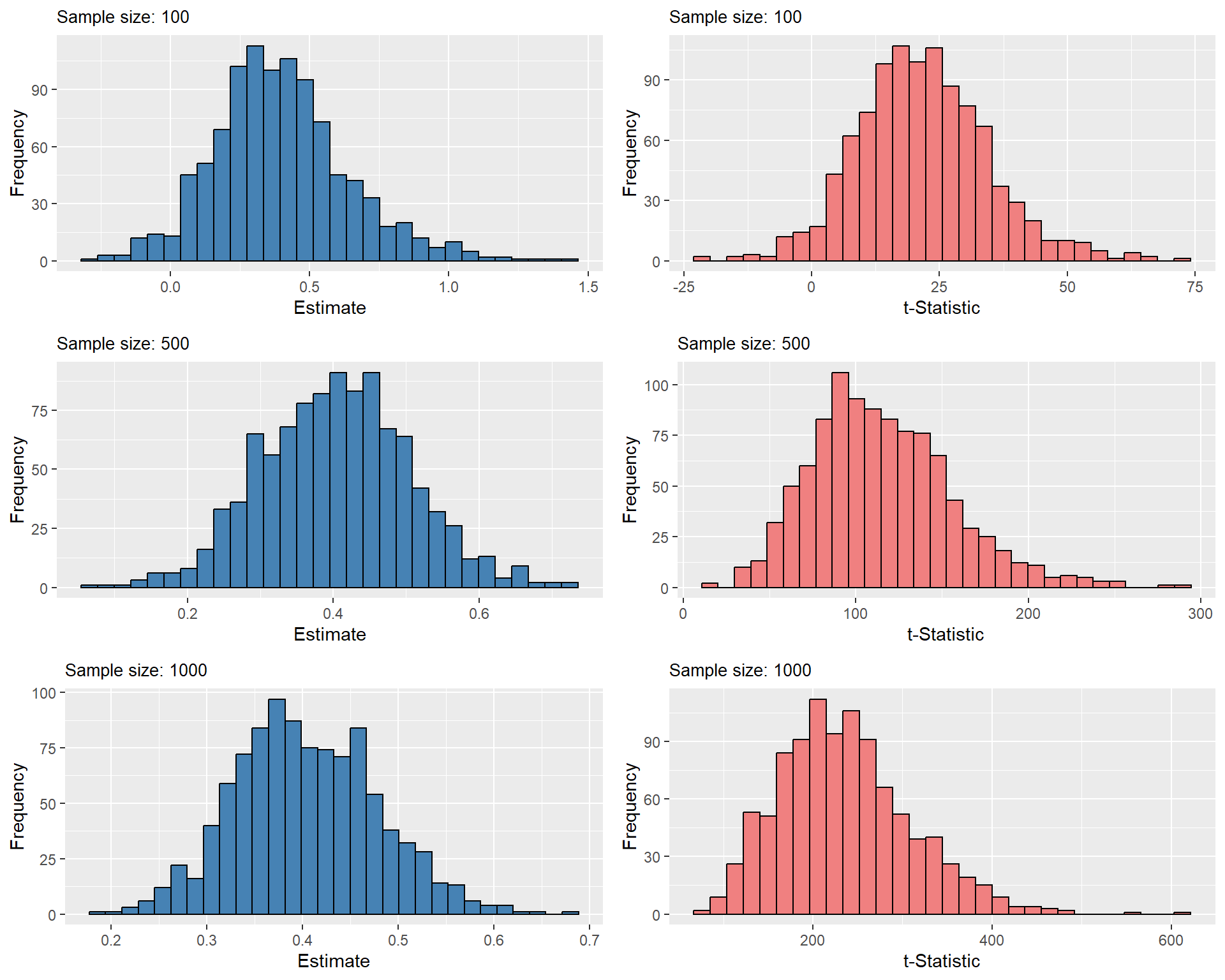

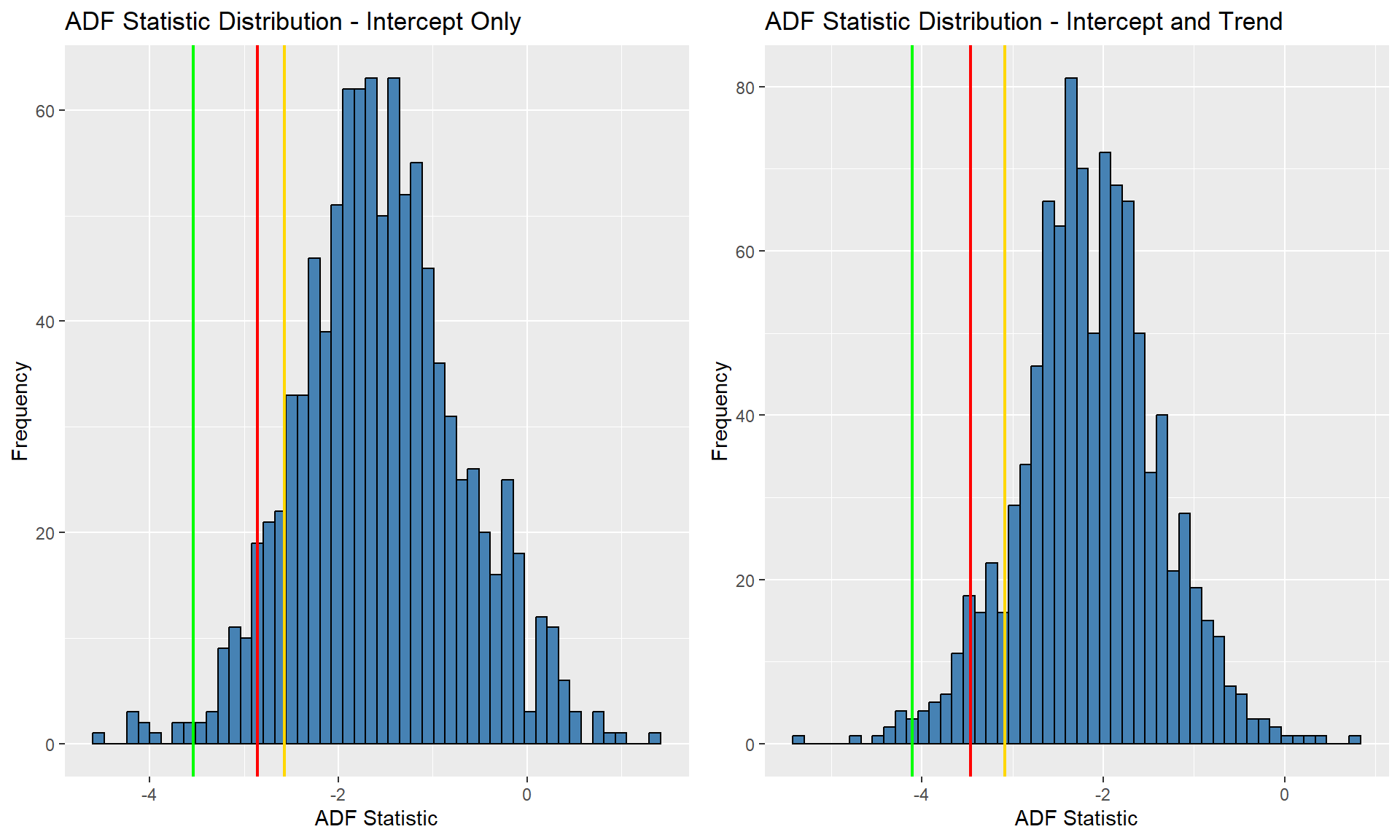

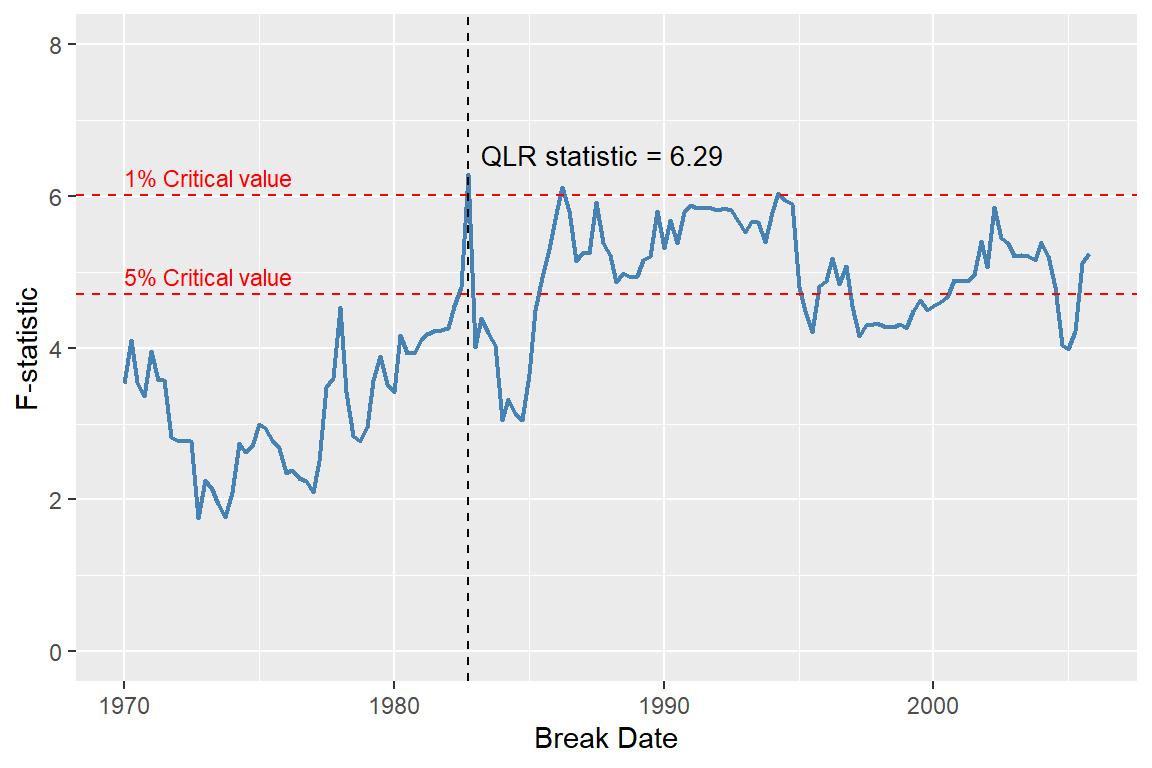

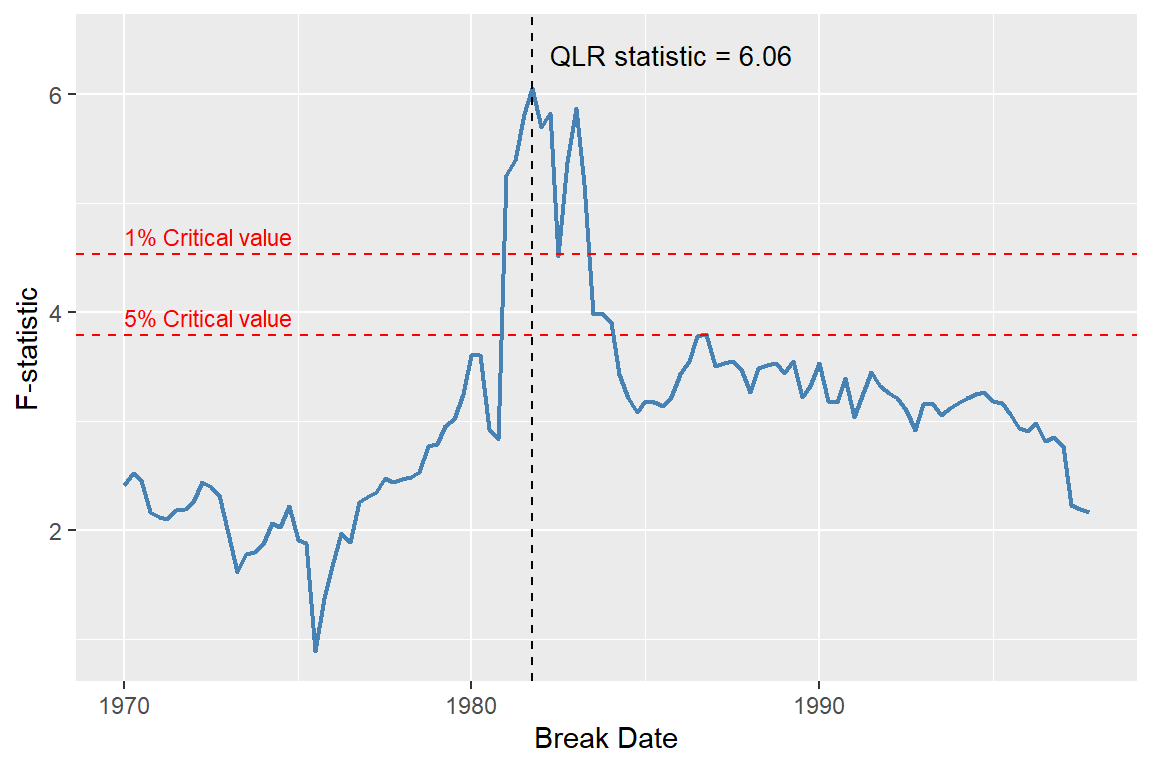

Delta_Inf ~ L(Delta_Inf, 1) + L(Delta_Inf, 2) + L(Delta_Inf, 3) + L(Delta_Inf, 4) +