# Load necessary libraries

library(tidyverse)

library(gridExtra)

library(modelsummary)

library(sandwich)

library(lmtest)

library(knitr)

library(readxl)

library(car)

options("modelsummary_factory_default" = "gt") 17 Nonlinear Regression Functions

17.1 Introduction

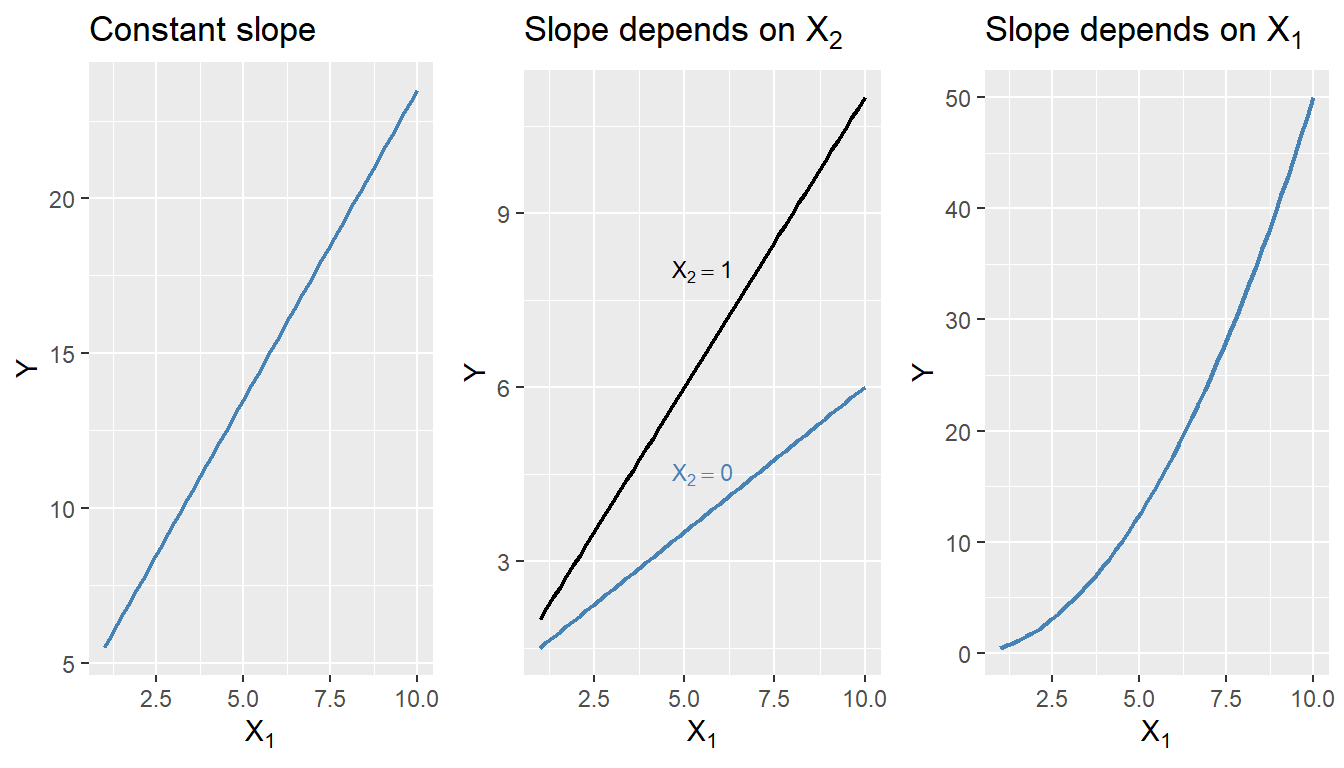

We start our regression analysis in Chapter 13 by assuming a linear population function \(\E(Y|X_1)=\beta_0+\beta_1X_1\). However, in many cases, the relationship between \(Y\) and \(X_1\) is nonlinear. In this case, the effect of a change in \(X_1\) on \(Y\) is not constant. In Figure 17.1, we illustrate population regression functions that have different slopes. In the linear case, as shown in the first plot of Figure 17.1, the slope of the population regression function is constant. However, in the second plot, the slope of the population regression function depends on the value of another variable \(X_2\). In the third plot, the slope of the population regression function depends on the value of \(X_1\). In this chapter, we consider how to model nonlinear regression functions.

# Generate data

x <- seq(1, 10, length.out = 50)

df1 <- data.frame(x = x, y = 3.5 + 2 * x)

df2 <- data.frame(

x = rep(x, 2),

y = c(1 + 0.5 * x,

1 + 0.5 * x + 0.5 * x),

X2 = factor(rep(c("When X2 = 0", "When X2 = 1"), each = length(x)))

)

df3 <- data.frame(x = x, y = 0.5 * x^2)

p1 <- ggplot(df1, aes(x, y)) +

geom_line(color = "steelblue", linewidth = 0.8) +

labs(title = "Constant slope", x = expression(X[1]), y = "Y")

p2 <- ggplot(df2, aes(x = x, y = y, color = X2)) +

geom_line(linewidth = 0.8) +

labs(

title = expression("Slope depends on " * X[2]),

x = expression(X[1]),

y = "Y"

) +

scale_color_manual(values = c("steelblue", "black")) +

theme(legend.position = "none") +

annotate(

"text",

x = 5.5,

y = 4.5,

label = "X[2] == 0",

parse = TRUE,

color = "steelblue",

size = 3

) +

annotate(

"text",

x = 5.5,

y = 8,

label = "X[2] == 1",

parse = TRUE,

color = "black",

size = 3

)

p3 <- ggplot(df3, aes(x, y)) +

geom_line(color = "steelblue", linewidth = 0.8) +

labs(

title = expression("Slope depends on " * X[1]),

x = expression(X[1]),

y = "Y"

)

grid.arrange(p1, p2, p3, nrow = 1)

17.2 A general strategy for modeling nonlinear regression functions

The general nonlinear population regression model is \[ \begin{align} Y_i=f(X_{1i},X_{2i},\dots,X_{ki})+u_i,\quad i=1,2,\dots,n, \end{align} \]

where \(f(X_{1i},X_{2i},\dots,X_{ki})\) is the population nonlinear regression function and \(u_i\) is the error term. We will consider this model under the four least squares assumptions given in Chapter 15. Then, under the zero-conditional mean assumption, we have \(\E(Y|X_{1},X_{2},\dots,X_{k})=f(X_{1},X_{2},\dots,X_{k})\). Because of the nonlinear relationship between the conditional mean \(\E(Y|X_{1},X_{2},\dots,X_{k})\) and the regressors, the effect of a change in one regressor on the conditional mean requires a more complex analysis than in the linear case.

Let us assume that \(X_1\) changes by \(\Delta X_1\) and let \(\E(Y^*|X_{1}, X_{2},\dots,X_{k})=f(X_{1}+\Delta X_1,X_{2},\dots,X_{k})\) be the expected value of \(Y\) when \(X_1\) changes by \(\Delta X_1\). Then, the expected change in \(Y\) associated with the change in \(X_1\) by \(\Delta X_1\), holding \(X_2,\dots, X_k\) constant, is \[ \begin{align} \Delta Y&=\E(Y^*|X_{1}+\Delta X_1, X_{2},\dots,X_{k})-\E(Y|X_{1},X_{2},\dots,X_{k}),\\ &=f(X_{1}+\Delta X_1, X_{2},\dots,X_{k})-f(X_{1}, X_{2},\dots,X_{k}). \end{align} \]

Let \(\hat{f}\) be an estimator of \(f\). Then, the predicted values of \(Y\) based on \(\hat{f}\) is \(\hat{f}(X_{1},X_{2},\dots,X_{k})\). Thus, the predicted change in \(Y\) is \[ \begin{align*} \Delta\hat{Y}=\hat{f}(X_{1}+\Delta X_1, X_{2},\dots,X_{k})-\hat{f}(X_{1}, X_{2},\dots,X_{k}). \end{align*} \]

We can compute the predicted change in \(Y\) for different values of \(\Delta X_1\) and plot the predicted change in \(Y\) against \(\Delta X_1\). The plot will show how the predicted change in \(Y\) depends on the change in \(X_1\).

17.3 Nonlinear functions of a single independent variable

In this section, we consider two nonlinear regression models: the polynomial regression model and the logarithmic regression model. For the sake of brevity, we consider the case of a single independent variable \(X_1\), though the results can be generalized to multiple independent variables.

17.3.1 Polynomial regression models

A polynomial regression model is a special case of a nonlinear regression model. A polynomial regression model of degree \(m\) is \[ \begin{align} Y_i=\beta_0+\beta_1X_{i}+\beta_2X_{i}^2+\cdots+\beta_mX_{i}^m+u_i,\quad i=1,2,\dots,n. \end{align} \]

In terms of the notation introduced in the previous section, we have \(f(X_i)=\beta_0+\beta_1X_{i}+\beta_2X_{i}^2+\cdots+\beta_mX_{i}^m\). The linear model is a special case of the polynomial model with \(m=1\). This model reduces to the linear model if we fail to reject the joint null hypothesis \(H_0:\beta_2=\beta_3=\cdots=\beta_m=0\) against the alternative hypothesis \(H_1:\) At least one of the coefficients is not zero. When \(m=2\), the polynomial model is called a quadratic model, and when \(m=3\), the polynomial model is called a cubic model. As with the linear model, the quadratic and cubic models can be tested against the polynomial regression model of degree \(m\) by adjusting the null and alternative hypotheses accordingly. These joint null hypotheses can be tested using the F-statistic introduced in Chapter 16.

Note that since the polynomial model is linear in the parameters, we can use the OLS estimator to estimate the model. The degree of the model can be selected using a sequential hypothesis testing approach based on the individual \(t\)-statistic. This approach consists of the following steps:

- Use economic theory and expert judgement to choose the maximum degree of the polynomial model, \(m\). In many cases involving economic data, \(m = 2\) or \(m = 3\) is sufficient.

- Estimate the polynomial model of degree \(m\). If the \(t\)-statistic for the highest-order term is statistically significant, then the polynomial model of degree \(m\) is the appropriate model. If the \(t\)-statistic for the highest-order term is not statistically significant, then estimate the polynomial model of degree \(m - 1\).

- Repeat this process until the \(t\)-statistic for the highest-order term is statistically significant.

The final model obtained through this sequential testing approach is the polynomial model of the highest degree for which the \(t\)-statistic is statistically significant.

As an example, we consider the test scores data available in the caschool.xlsx file. The following code chunk imports the data and displays the column names.

# Import data

mydata <- read_excel("data/caschool.xlsx")

colnames(mydata) [1] "Observation Number" "dist_cod" "county"

[4] "district" "gr_span" "enrl_tot"

[7] "teachers" "calw_pct" "meal_pct"

[10] "computer" "testscr" "comp_stu"

[13] "expn_stu" "str" "avginc"

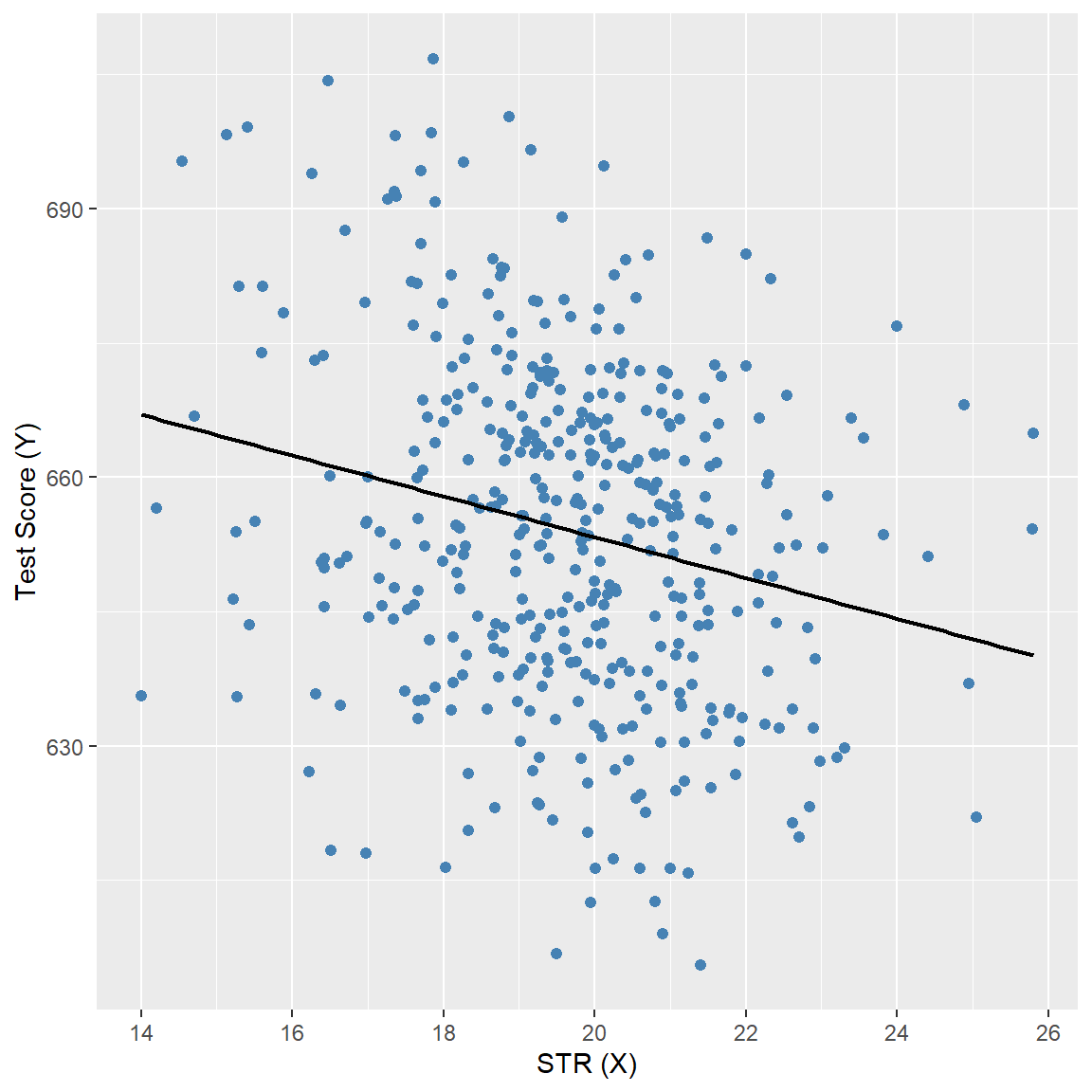

[16] "el_pct" "read_scr" "math_scr" We consider the relationship between the test scores (testscr) and two other variables: the student-teacher ratio (str), and the average district income (avginc). The average district income is measured in thousands of 1998 dollars (i.e., one unit increase in avginc means 1000 dollars increase). In Figure 17.2, we present scatter plots of test scores against the student-teacher ratio and average district income. The scatter plot of test scores against the student-teacher ratio suggests a linear relationship, whereas the scatter plot of test scores against average district income suggests a nonlinear relationship.

# Test Score and STR

p1 <- ggplot(mydata, aes(x = str, y = testscr)) +

geom_point(color = "steelblue", size = 1.8) +

geom_smooth(

method = "lm",

formula = y ~ x,

se = FALSE,

color = "black",

linewidth = 0.8

) +

labs(

x = "STR (X)",

y = "Test Score (Y)"

)

# Test Score and Income

p2 <- ggplot(mydata, aes(x = avginc, y = testscr)) +

geom_point(color = "steelblue", size = 1.8) +

geom_smooth(

method = "lm",

formula = y ~ x,

se = FALSE,

color = "black",

linewidth = 0.8

) +

labs(

x = "Income (X)",

y = "Test Score (Y)"

)

p1

p2

We consider the following polynomial regression models between the test scores and district income:

\[ \begin{align} &\text{Linear}:\quad \text{TestScore}=\beta_0+\beta_1\text{Income}+u,\label{eq3}\\ &\text{Quadratic}:\quad \text{TestScore}=\beta_0+\beta_1\text{Income}+\beta_2\text{Income}^2+u,\label{eq4}\\ &\text{Cubic}:\quad \text{TestScore}=\beta_0+\beta_1\text{Income}+\beta_2\text{Income}^2+\beta_3\text{Income}^3+u.\label{eq5} \end{align} \]

We estimate these models using the OLS estimator. In the following code chunk, we use the I() function to create the quadratic and cubic terms directly within the regression formula. Otherwise, we would need to create these variables manually and add them to the data frame mydata. The estimation results are presented in Table 17.1.

# Linear model

model1 <- lm(testscr ~ avginc, data = mydata)

# Quadratic model

model2 <- lm(testscr ~ avginc + I(avginc^2), data = mydata)

# Cubic model

model3 <- lm(testscr ~ avginc + I(avginc^2) + I(avginc^3), data = mydata)# Print results in table form

modelsummary(

list("Linear" = model1, "Quadratic" = model2, "Cubic" = model3),

vcov = "HC1",

fmt = 3,

stars = TRUE,

gof_omit = "AIC|BIC|Log.Lik"

)| Linear | Quadratic | Cubic | |

|---|---|---|---|

| (Intercept) | 625.384*** | 607.302*** | 600.079*** |

| (1.868) | (2.902) | (5.102) | |

| avginc | 1.879*** | 3.851*** | 5.019*** |

| (0.114) | (0.268) | (0.707) | |

| I(avginc^2) | -0.042*** | -0.096** | |

| (0.005) | (0.029) | ||

| I(avginc^3) | 0.001* | ||

| (0.000) | |||

| Num.Obs. | 420 | 420 | 420 |

| R2 | 0.508 | 0.556 | 0.558 |

| R2 Adj. | 0.506 | 0.554 | 0.555 |

| F | 273.289 | 428.523 | 270.180 |

| RMSE | 13.35 | 12.68 | 12.65 |

| Std.Errors | HC1 | HC1 | HC1 |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | |||

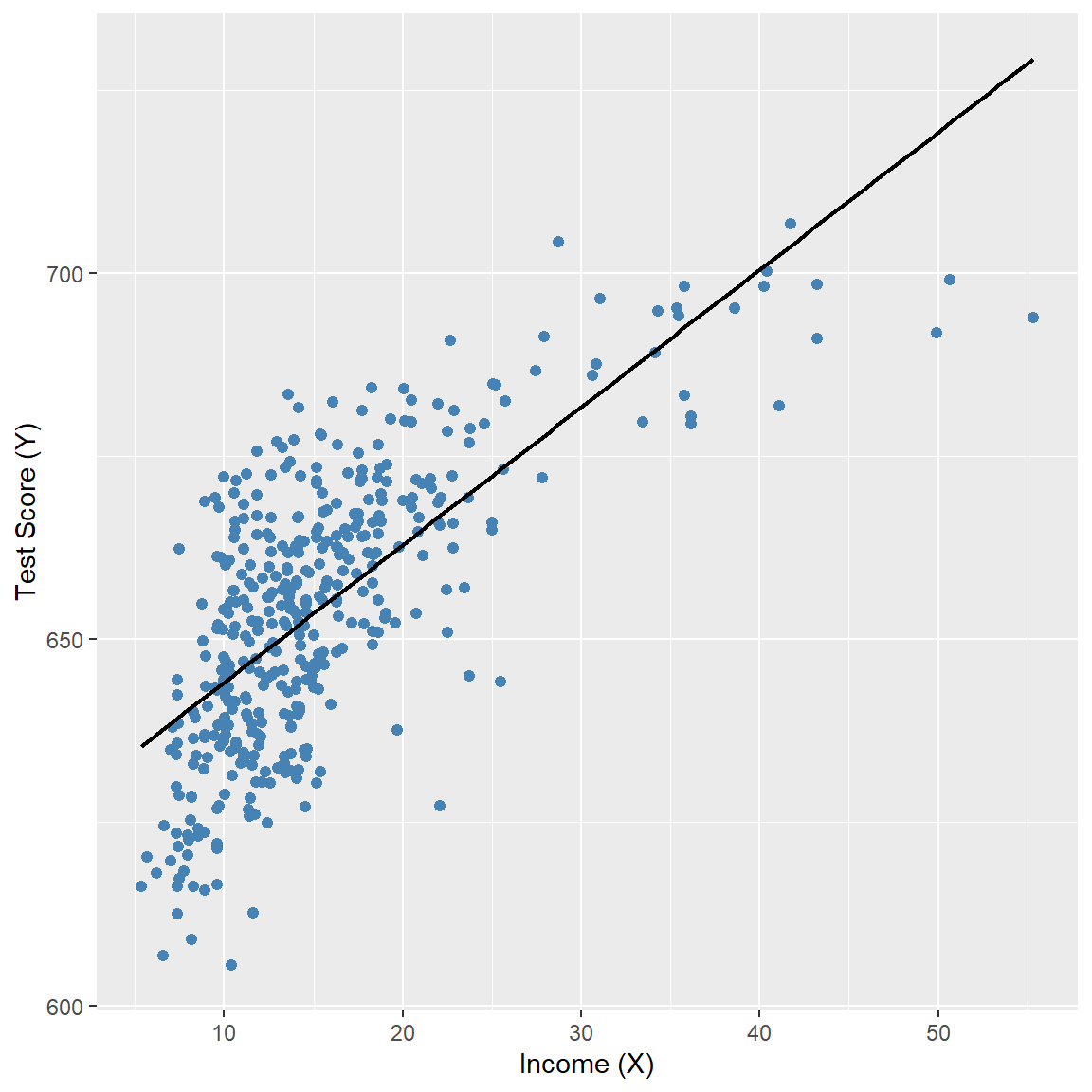

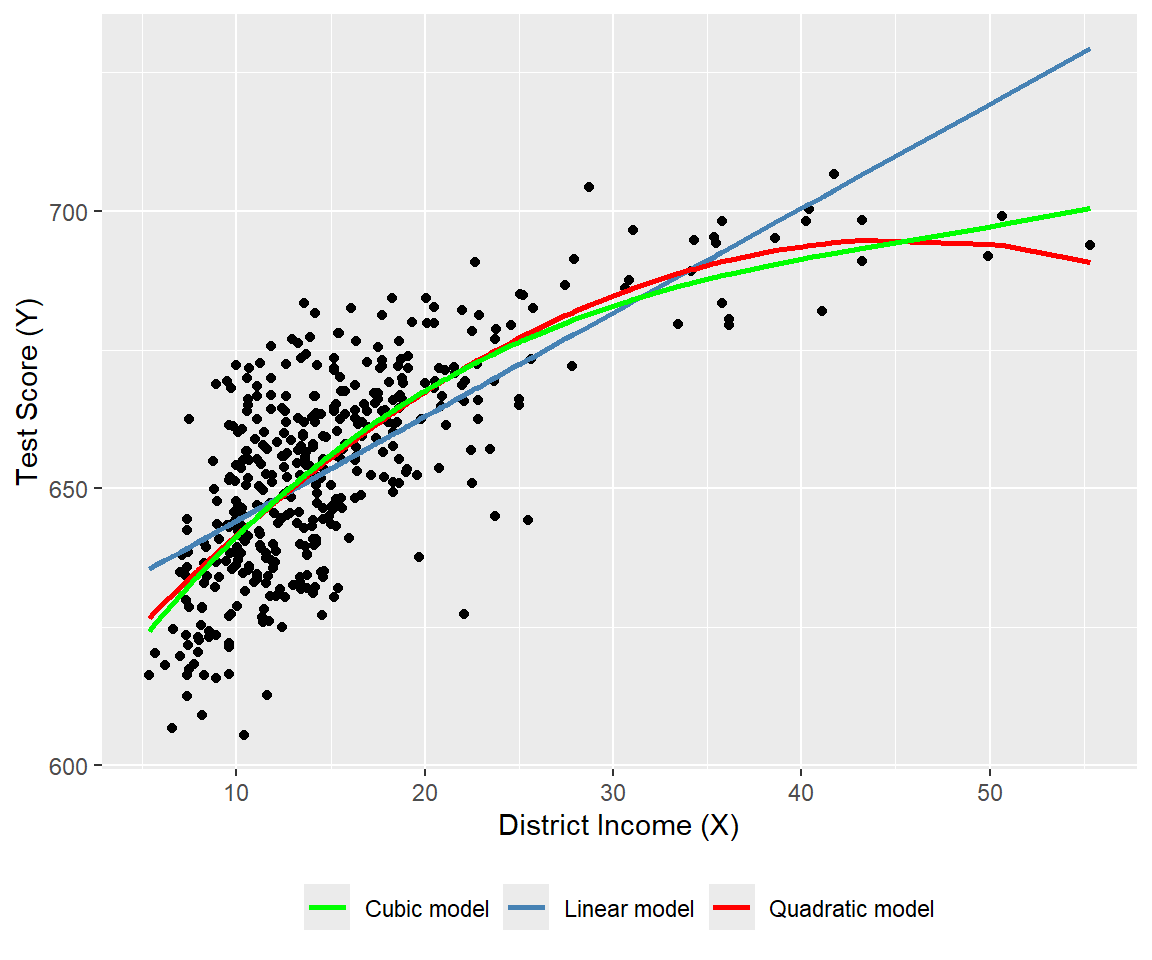

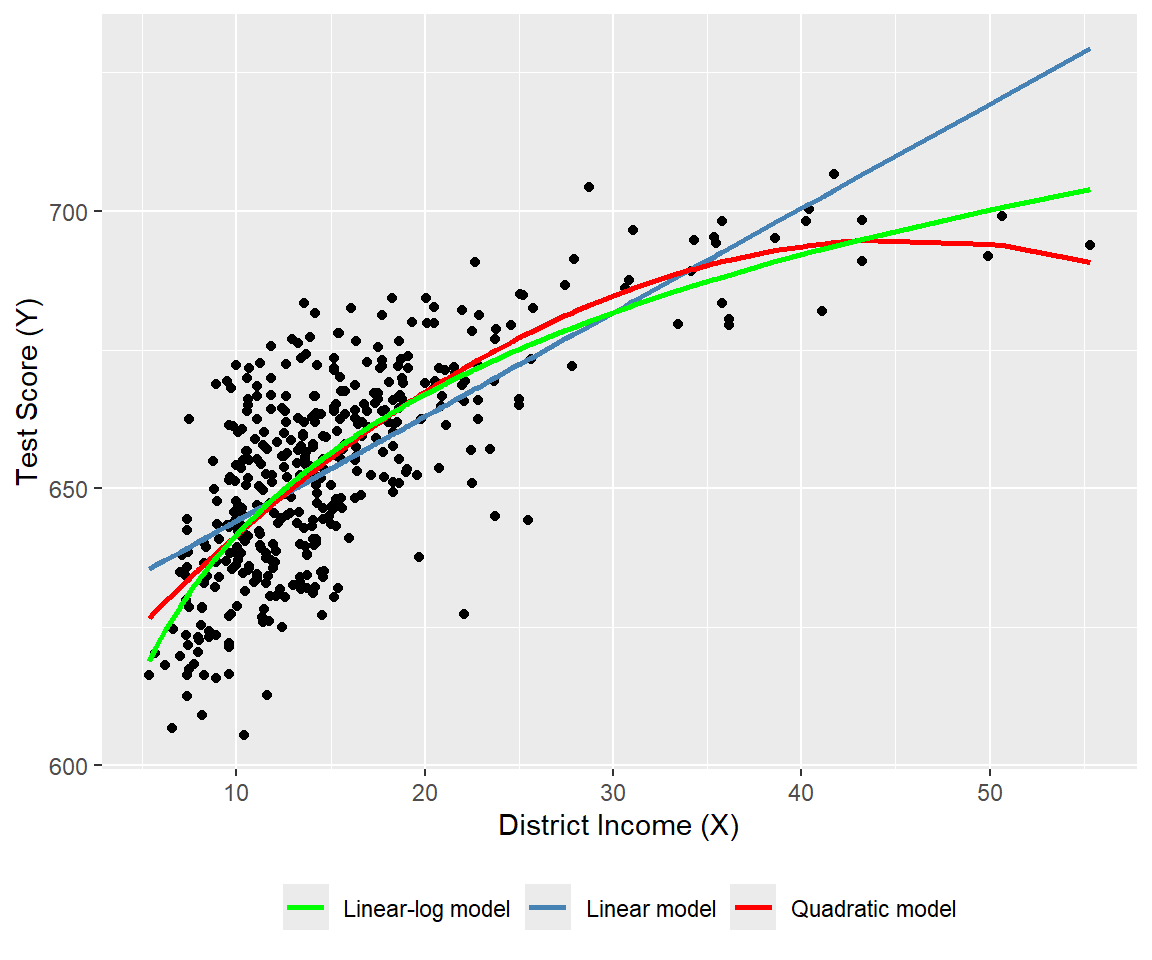

The estimated regression functions are plotted in Figure 17.3, superimposed on the scatterplot of the data. As shown, the quadratic and cubic functions capture the curvature in the scatterplot, exhibiting steep slopes at low values of district income that gradually flatten as district income increases.

# Polynomial regression models

# Prepare data

ord <- order(mydata$avginc)

df_plot <- data.frame(

avginc = mydata$avginc[ord],

testscr = mydata$testscr[ord],

fit_lin = fitted(model1)[ord],

fit_quad = fitted(model2)[ord],

fit_cubic = fitted(model3)[ord]

)

# Plot: scatter + polynomial regression functions

ggplot(df_plot, aes(x = avginc, y = testscr)) +

geom_point(color = "black", size = 1.5) +

geom_line(aes(y = fit_lin, color = "Linear model"), linewidth = 0.9) +

geom_line(aes(y = fit_quad, color = "Quadratic model"), linewidth = 0.9) +

geom_line(aes(y = fit_cubic, color = "Cubic model"), linewidth = 0.9) +

scale_color_manual(

values = c(

"Linear model" = "steelblue",

"Quadratic model" = "red",

"Cubic model" = "green"

)

) +

labs(

x = "District Income (X)",

y = "Test Score (Y)",

color = NULL

) +

theme(

legend.position = "bottom"

)

The estimation results in Table 17.1 show that the coefficients of the quadratic and cubic terms are statistically significant. For testing the null hypothesis of linearity, against the alternative that the population regression is quadratic and/or cubic, we consider the following joint null and alternative hypotheses. \[ \begin{align*} &H_0:\beta_2=0,\,\beta_3=0,\\ &H_1:\text{At least one coefficient is not zero}. \end{align*} \]

To test \(H_0\), we use the linearHypothesis from the car package. The \(F\)-statistic is around 37.7 with a \(p\)-value of 9.043e-16. Thus, we can reject the null hypothesis at the \(5\%\) significance level.

linearHypothesis(

model3,

c("I(avginc^2) = 0", "I(avginc^3) = 0"),

white.adjust = "hc1"

)

Linear hypothesis test:

I(avginc^2) = 0

I(avginc^3) = 0

Model 1: restricted model

Model 2: testscr ~ avginc + I(avginc^2) + I(avginc^3)

Note: Coefficient covariance matrix supplied.

Res.Df Df F Pr(>F)

1 418

2 416 2 37.691 9.043e-16 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 117.3.2 Logarithmic regression models

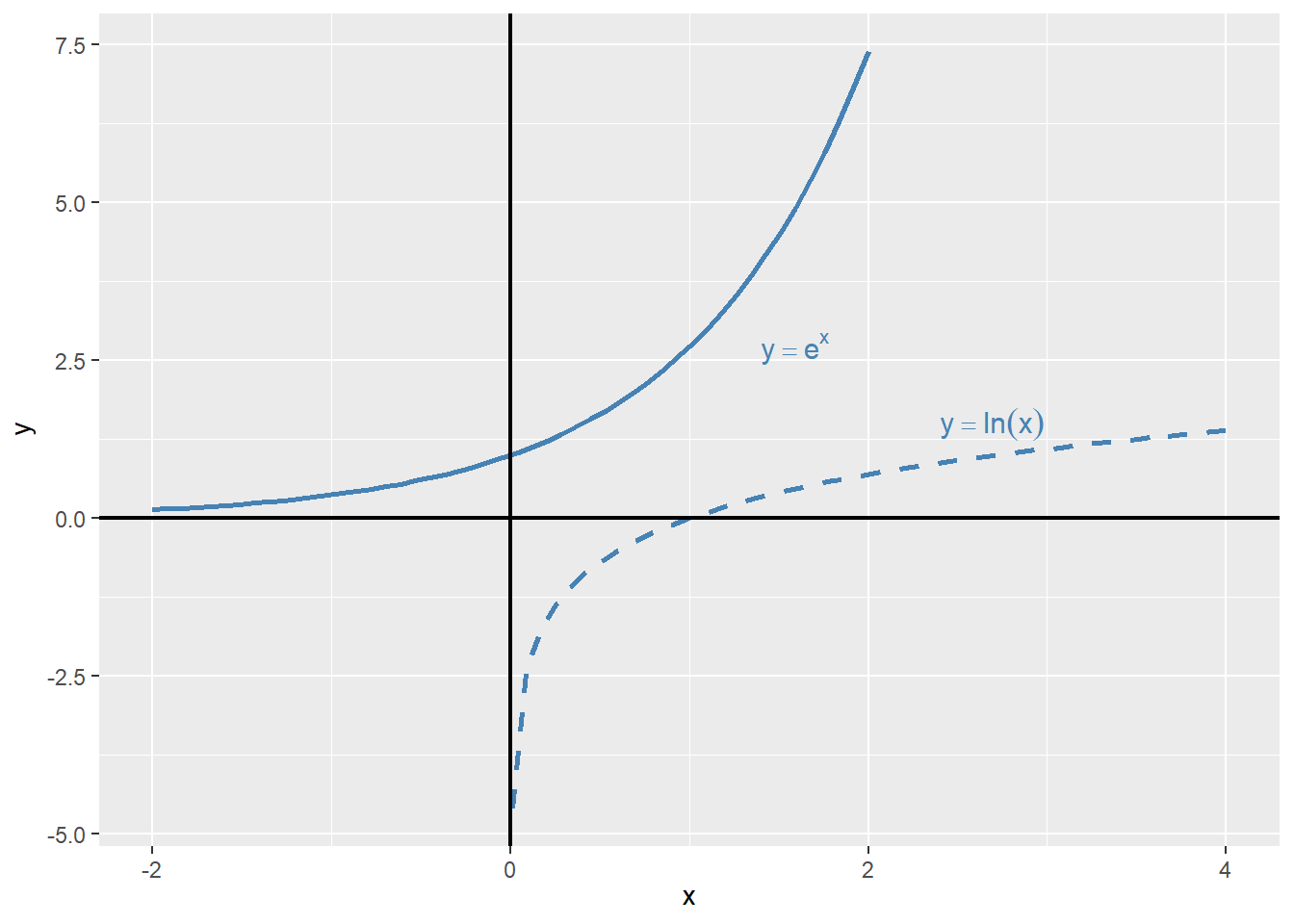

In logarithmic regression models, we specify specify a nonlinear relationship between \(Y\) and \(X\) using the natural logarithm of \(Y\) and/or \(X\). The logarithmic transformations allow us to model relationships in percentage terms (such as elasticities), rather than linearly. Before introducing logarithmic regression models, we need to recall some properties of exponential and logarithmic functions. The exponential function is \(e^x\), where \(e\) is the base of the natural logarithm. The natural logarithm function is denoted by \(\ln(x)\), where \(x>0\). The natural logarithm function has the following properties:

- \(\ln(xy)=\ln(x)+\ln(y)\),

- \(\ln(x/y)=\ln(x)-\ln(y)\),

- \(\ln(x^n)=n\ln(x)\).

In Figure 17.2, we plot the exponential function \(y=e^x\) and the natural logarithm function \(y=\ln(x)\). The exponential function is increasing and convex, while the natural logarithm function is increasing and concave.

# Exponential and logarithm functions

# Generate values

x_exp <- seq(-2, 2, length.out = 50)

x_log <- seq(0.01, 4, length.out = 50)

df_exp <- data.frame(x = x_exp, y = exp(x_exp))

df_log <- data.frame(x = x_log, y = log(x_log))

# Plot with direct annotations

ggplot() +

# Exponential curve

geom_line(

data = df_exp,

aes(x = x, y = y),

color = "steelblue",

linewidth = 0.9

) +

# Logarithmic curve

geom_line(

data = df_log,

aes(x = x, y = y),

color = "steelblue",

linewidth = 0.9,

linetype = "dashed"

) +

# Axes at zero

geom_vline(xintercept = 0, color = "black", linewidth = 0.8) +

geom_hline(yintercept = 0, color = "black", linewidth = 0.8) +

# Direct annotations

annotate(

"text",

x = 1.4,

y = 2.7,

label = "y == e^x",

parse = TRUE,

color = "steelblue",

hjust = 0,

size = 4

) +

annotate(

"text",

x = 2.4,

y = 1.5,

label = "y == ln(x)",

parse = TRUE,

color = "steelblue",

hjust = 0,

size = 4

) +

labs(

x = expression(x),

y = expression(y)

)

Example 17.1 (Maclaurin series expansions) The Maclaurin series expansions of \(e^x\), \(\ln(1-x)\), and \(\ln(1+x)\) are given by \[ \begin{align} &e^x=1+x+\frac{x^2}{2}+\frac{x^3}{6}+\cdots=\sum_{n=0}^{\infty}\frac{x^n}{n!},\\ &\ln(1-x)=-x-\frac{x^2}{2}-\frac{x^3}{3}-\cdots=-\sum_{n=1}^{\infty}\frac{x^n}{n},\\ &\ln(1+x)=x-\frac{x^2}{2}+\frac{x^3}{3}-\cdots=\sum_{n=1}^{\infty}(-1)^{n+1}\frac{x^n}{n}. \end{align} \]

The first series converges for all \(x\), while the last two series converge for \(|x|<1\). Note that when \(x\) is small, the higher-order terms become negligible in these expansions. For example, we can approximate \(\ln(1+x)\) by \(x\), i.e., \(\ln(1+x)\approx x\), when \(x\) is small.

Using \(\ln(1+x)\approx x\) from Example 17.1, we can express \(\ln(x+\Delta x)-\ln(x)\) as \[ \begin{align} \ln(x+\Delta x)-\ln(x)=\ln\left(\frac{x+\Delta x}{x}\right)=\ln\left(1+\frac{\Delta x}{x}\right)\approx\frac{\Delta x}{x}, \end{align} \tag{17.1}\]

where the last approximation follows when \(\Delta x/x\) is small. Then, using Equation 17.1, we have \(\ln(x_2)-\ln(x_1)\approx\frac{\Delta x}{x_1}\), where \(\Delta x=x_2-x_1\). We call \(\frac{\Delta x}{x}\) the relative change (or the growth rate) in \(x\), and \(100\times\frac{\Delta x}{x}\) the percentage change (or the percentage growth rate) in \(x\).

Example 17.2 (Logarithms and percentages) Assume that \(x=100\) and \(\Delta x=1\), then \(\Delta x/x = 1/100 = 0.01\) (or \(1\%\)), while \(\ln(x+\Delta x)-\ln(x)=\ln(101)-\ln(100)=0.00995\) or \(0.995\%\). Thus \(\Delta x/x\) (which is 0.01) is very close to \(\ln(x+\Delta x)-\ln(x)\) (which is \(0.00995\)).

Using logarithms, we can formulate the following three specifications:

- The linear-log model: \(Y_i=\beta_0+\beta_1\ln(X_i)+u_i\),

- The log-linear model: \(\ln(Y_i)=\beta_0+\beta_1X_i+u_i\), and

- The log-log model: \(\ln(Y_i)=\beta_0+\beta_1\ln(X_i)+u_i\).

Consider the linear-log model and assume that \(X\) changes to \(X+\Delta X\). Then, the change in \(Y\) can be computed from comparing the models before and after the change in \(X\).

- Before the change: \(Y=\beta_0+\beta_1\ln(X)+u\),

- After the change: \(Y+\Delta Y=\beta_0+\beta_1\ln(X+\Delta X)+u\).

Thus, subtracting the model before the change from the model after the change gives \(\Delta Y\) as \[ \begin{align*} &\Delta Y=\left[\ln(X+\Delta X)-\ln(X)\right]\beta_1\approx\beta_1\times\frac{\Delta X}{X}\\ &\implies\Delta Y\approx(0.01\times\beta_1)\left(100\times\frac{\Delta X}{X}\right). \end{align*} \]

Thus, in the linear-log model, if \(X\) changes by \(1\%\), i.e., \(100\times\Delta X/X=1\), then \(Y\) on average changes by \(0.01\times\beta_1\) units.

In the case of the log-linear model, we can express the effect of \(\Delta X\) on \(Y\) by considering the following models:

- Before the change: \(\ln(Y)=\beta_0+\beta_1X+u\),

- After the change: \(\ln(Y+\Delta Y)=\beta_0+\beta_1(X+\Delta X)+u\).

Thus, we can express \(\ln(Y+\Delta Y)-\ln(Y)\) as \[ \begin{align*} &\ln(Y+\Delta Y)-\ln(Y)=\beta_1\Delta X\implies\frac{\Delta Y}{Y}\approx\beta_1\times\Delta X\\ &\implies100\times\frac{\Delta Y}{Y}\approx (100\times\beta_1)\times\Delta X. \end{align*} \]

Thus, in the log-linear model, if \(X\) changes by 1 unit, i.e., \(\Delta X=1\), then \(Y\) on average changes by \((100\times\beta_1)\) percent.

Finally, in the log-log model, we can express the effect of \(\Delta X\) on \(Y\) by considering the following models:

- Before the change: \(\ln(Y)=\beta_0+\beta_1\ln(X)+u\),

- After the change: \(\ln(Y+\Delta Y)=\beta_0+\beta_1\ln(X+\Delta X)+u\).

Thus, we can express \(\ln(Y+\Delta Y)-\ln(Y)\) as \[ \begin{align*} &\ln(Y+\Delta Y)-\ln(Y)=\beta_1\ln\left(1+\frac{\Delta X}{X}\right)\implies\frac{\Delta Y}{Y}\approx\beta_1\times\frac{\Delta X}{X}\\ &\implies100\times\frac{\Delta Y}{Y}\approx\beta_1\times100\times\frac{\Delta X}{X}. \end{align*} \]

Thus, in the log-log model, if \(X\) changes by \(1\%\), i.e., \(100\times\Delta X/X=1\), then \(Y\) on average changes by \(\beta_1\) percent.

For our test scores example, we consider the following regression models:

- The linear model: \(\text{TestScore}=\beta_0+\beta_1\text{Income}+u\),

- The quadratic model: \(\text{TestScore}=\beta_0+\beta_1\text{Income}+\beta_2\text{Income}^2+u\),

- The linear-log model: \(\text{TestScore}=\beta_0+\beta_1\ln(\text{Income})+u\),

- The log-linear model: \(\ln(\text{TestScore})=\beta_0+\beta_1\text{Income}+u\),

- The log-log model: \(\ln(\text{TestScore})=\beta_0+\beta_1\ln(\text{Income})+u\).

# Linear model

r1 <- lm(testscr ~ avginc, data = mydata)

# Quadratic model

r2 <- lm(testscr ~ avginc + I(avginc^2), data = mydata)

# Linear–log model

r3 <- lm(testscr ~ log(avginc), data = mydata)

# Log–linear model

r4 <- lm(log(testscr) ~ avginc, data = mydata)

# Log–log model

r5 <- lm(log(testscr) ~ log(avginc), data = mydata)# Print results in table form

modelsummary(

list(

"Linear" = r1,

"Quadratic" = r2,

"Linear–log" = r3,

"Log–linear" = r4,

"Log–log" = r5

),

vcov = "HC1",

gof_omit = "AIC|BIC|Log.Lik",

stars = TRUE

)| Linear | Quadratic | Linear–log | Log–linear | Log–log | |

|---|---|---|---|---|---|

| (Intercept) | 625.384*** | 607.302*** | 557.832*** | 6.439*** | 6.336*** |

| (1.868) | (2.902) | (3.840) | (0.003) | (0.006) | |

| avginc | 1.879*** | 3.851*** | 0.003*** | ||

| (0.114) | (0.268) | (0.000) | |||

| I(avginc^2) | -0.042*** | ||||

| (0.005) | |||||

| log(avginc) | 36.420*** | 0.055*** | |||

| (1.397) | (0.002) | ||||

| Num.Obs. | 420 | 420 | 420 | 420 | 420 |

| R2 | 0.508 | 0.556 | 0.563 | 0.498 | 0.558 |

| R2 Adj. | 0.506 | 0.554 | 0.561 | 0.497 | 0.557 |

| F | 273.289 | 428.523 | 679.697 | 263.855 | 667.780 |

| RMSE | 13.35 | 12.68 | 12.59 | 0.02 | 0.02 |

| Std.Errors | HC1 | HC1 | HC1 | HC1 | HC1 |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | |||||

The estimation results are presented in Table 17.2. All estimated coefficients presented in Table 17.2 are statistically significant at 5% level. The estimated coefficient of ln(avginc) in the linear-log model is around 36.420, which implies that a \(1\%\) increase in district income is associated with a 0.3642 points increase in test scores. Using the linear-log model, we can compute the predicted difference in test scores for districts with different income levels. For example, for districts with average income levels of 10000 and 11000, the predicted difference in test scores is \[

\begin{align}

\widehat{\Delta\text{TestScore}}&=36.420\times\left[\ln(11000)-\ln(10000)\right]\\

&=36.420\times\ln(1.1)\approx 36.420\times 0.0953\approx 3.47.

\end{align}

\]

On the other hand, the predicted difference in test scores for districts with average income levels of \(40000\) and \(41000\) is \[ \begin{align} \widehat{\Delta\text{TestScore}}&=36.420\times\left[\ln(41000)-\ln(40000)\right]\\ &=36.420\times\ln(1.025)\approx 36.420\times 0.0247\approx 0.90. \end{align} \]

Thus, the predicted difference in test scores is higher for districts with lower income levels.

The results in Table 17.2 indicates that the estimated coefficient of avginc in the log-linear model is around 0.003, which implies that a 1 unit increase in district income (one unit increase means 1000 dollars increase in average income) is associated with a \(0.3\%\) increase in test scores. Finally, the estimated coefficient of ln(avginc) in the log-log model is around 0.055, which implies that a \(1\%\) increase in district income is associated with a \(0.055\%\) increase in test scores.

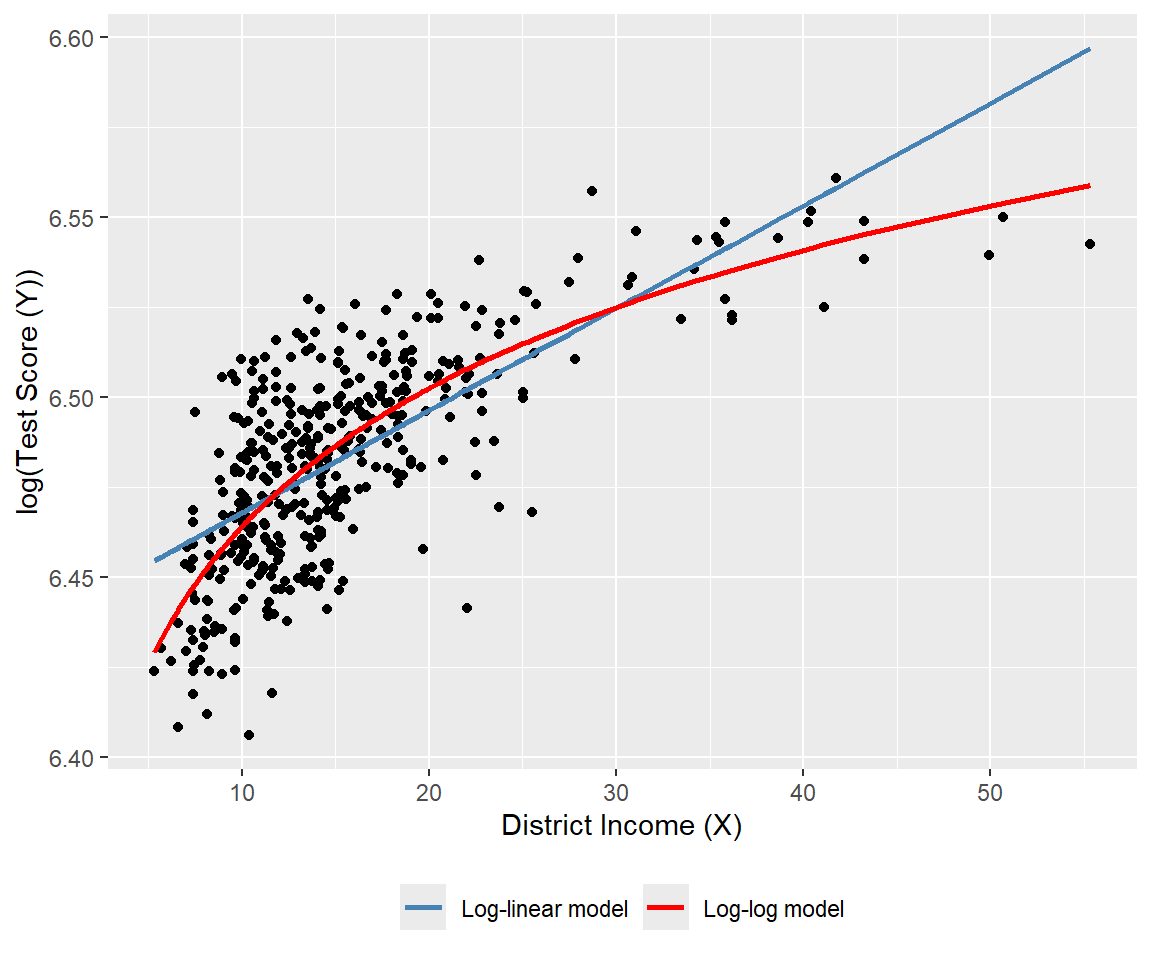

We plot the estimated regression functions in Figure 17.5 and Figure 17.6. In Figure 17.5, the quadratic model appears to provide a better fit for the data, while in Figure 17.6, the log-log model seems to fit the data more closely.

# Linear, quadratic and linear-log models

# Sort data for smooth fitted curves

mydata_sorted <- mydata[order(mydata$avginc), ]

# Add fitted values

mydata_sorted$fit_linear <- fitted(r1)[order(mydata$avginc)]

mydata_sorted$fit_quadratic <- fitted(r2)[order(mydata$avginc)]

mydata_sorted$fit_linlog <- fitted(r3)[order(mydata$avginc)]

# Plot

ggplot(mydata, aes(x = avginc, y = testscr)) +

geom_point(color = "black", size = 1.5) +

geom_line(

data = mydata_sorted,

aes(y = fit_linear, color = "Linear model"),

linewidth = 1

) +

geom_line(

data = mydata_sorted,

aes(y = fit_quadratic, color = "Quadratic model"),

linewidth = 1

) +

geom_line(

data = mydata_sorted,

aes(y = fit_linlog, color = "Linear-log model"),

linewidth = 1

) +

scale_color_manual(

values = c(

"Linear model" = "steelblue",

"Quadratic model" = "red",

"Linear-log model" = "green"

)

) +

labs(

x = "District Income (X)",

y = "Test Score (Y)",

color = NULL

) +

theme(legend.position = "bottom")

# Log-linear and log-log models

# Sort data for smooth fitted curves

ord <- order(mydata$avginc)

mydata_sorted <- mydata[ord, ]

# Add fitted values

mydata_sorted$fit_loglin <- fitted(r4)[ord]

mydata_sorted$fit_loglog <- fitted(r5)[ord]

# Plot

ggplot(mydata, aes(x = avginc, y = log(testscr))) +

geom_point(color = "black", size = 1.5) +

geom_line(

data = mydata_sorted,

aes(y = fit_loglin, color = "Log-linear model"),

linewidth = 1

) +

geom_line(

data = mydata_sorted,

aes(y = fit_loglog, color = "Log-log model"),

linewidth = 1

) +

scale_color_manual(

values = c(

"Log-linear model" = "steelblue",

"Log-log model" = "red"

)

) +

labs(

x = "District Income (X)",

y = "log(Test Score (Y))",

color = NULL

) +

theme(legend.position = "bottom")

Finally, we can use the adjusted \(R^2\) to determine which model best fits the data. However, caution is needed when using the adjusted \(R^2\) to compare models with different dependent variables, as it should only be used for models with the same dependent variable. The adjusted \(R^2\) values for the linear, quadratic, and linear-log models are approximately 0.506, 0.554, and 0.561, respectively. Therefore, the linear-log model has the highest adjusted \(R^2\) and is the best fit for the data. For the log-linear and log-log models, the adjusted \(R^2\) values are about 0.497 and 0.557, respectively; hence, the log-log model provides relatively better fit.

Since the dependent variables differ between the linear-log and log-log models, we cannot directly compare their adjusted \(R^2\) values. Stock and Watson (2020) suggest using economic theory or expert judgment to decide whether to specify the model with \(Y\) or \(\ln(Y)\) as the dependent variable. They write, “In modeling test scores, it seems natural (to us, anyway) to discuss test results in terms of points on the test rather than percentage increases in the test scores, so we focus on models in which the dependent variable is the test score rather than its logarithm.”

17.4 Interactions between independent variables

In this section, we consider the interaction terms formed by the product of two independent variables. In models with interaction terms, the effect of an independent variable on \(Y\) depends also on the variable with which it is interacted.

17.4.1 Interactions between two binary variables

Consider the following regression model: \[ \begin{align} Y_i=\beta_0+\beta_1D_{1i}+\beta_2D_{2i}+u_i,\quad i=1,2,\cdots,n, \end{align} \] where \(D_1\) and \(D_2\) are binary variables, i.e., dummy variables. In this model, the effect of changing \(D_1=0\) to \(D_1=1\) is given by \(\beta_1\), which does not depend on the value of \(D_2\). To allow the effect of changing \(D_1\) to depend on \(D_2\), we include the interaction term (or the interacted regressor) \(D_{1i}\times D_{2i}\) as a regressor to the model: \[ \begin{align} Y_i=\beta_0+\beta_1D_{1i}+\beta_2D_{2i}+\beta_3\left(D_{1i}\times D_{2i}\right)+u_i. \end{align} \]

The interaction term \(\beta_3\left(D_{1i}\times D_{2i}\right)\) captures the effect of changing \(D_1\) on \(Y\) when \(D_2=1\). To see this, consider the conditional expectation of \(Y\) given \(D_1\) and \(D_2\): \[ \begin{align*} &\E(Y_i|D_{1i}=0,D_{2i}=d_2)=\beta_0+\beta_2d_2,\\ &\E(Y_i|D_{1i}=1,D_{2i}=d_2)=\beta_0+\beta_1+\beta_2d_2+\beta_3d_2. \end{align*} \]

Then, we can express \(\E(Y_i|D_{1i}=1,D_{2i}=d_2)-\E(Y_i|D_{1i}=0,D_{2i}=d_2)\) as \[ \begin{align} \E(Y_i|D_{1i}=1,D_{2i}=d_2)-\E(Y_i|D_{1i}=0,D_{2i}=d_2)=\beta_1+\beta_3d_2, \end{align} \] which depends on \(d_2\). If \(d_2=0\), the effect of changing \(D_1\) from 0 to 1 on \(Y\) is \(\beta_1\), while if \(d_2=1\), the effect of changing \(D_1\) from 0 to 1 on \(Y\) is \(\beta_1 + \beta_3\).

As an example, we consider the following regression model for the test scores data: \[ \begin{align} \text{TestScore}_i=\beta_0+\beta_1\text{HiSTR}_i+\beta_2\text{HiEL}_i+\beta_3\left(\text{HiSTR}_i\times\text{HiEL}_i\right)+u_i, \end{align} \] where \[ \begin{align*} \text{HiSTR}_i= \begin{cases} 1,\,\text{if STR}_i\geq20,\\ 0,\,\text{if STR}_i<20. \end{cases} \qquad \text{HiEL}_i= \begin{cases} 1,\,\text{if PctEL}_i\geq10,\\ 0,\,\text{if PctEL}_i<10. \end{cases} \end{align*} \]

Here, \(\text{HiSTR}_i\) is a binary variable equal to 1 if the student-teacher ratio is 20 or more, and 0 otherwise; \(\text{HiEL}_i\) is another binary variable equal to 1 if the percentage of English learners is 10% or more, and 0 otherwise. We use the following code chunk to estimate the model and present the results in Table 17.3.

# Generate dummy variables

mydata$histr = as.integer(mydata$str >= 20)

mydata$hiel = as.integer(mydata$el_pct >= 10)

# Estimation

r1 <- lm(testscr ~ histr + hiel + histr:hiel, data = mydata)# Print results in a table form

modelsummary(

list("Model" = r1),

vcov = "HC1",

stars = TRUE,

fmt = 3,

gof_omit = "AIC|BIC|Log.Lik"

)| Model | |

|---|---|

| (Intercept) | 664.143*** |

| (1.388) | |

| histr | -1.908 |

| (1.932) | |

| hiel | -18.163*** |

| (2.346) | |

| histr × hiel | -3.494 |

| (3.121) | |

| Num.Obs. | 420 |

| R2 | 0.296 |

| R2 Adj. | 0.290 |

| F | 60.195 |

| RMSE | 15.97 |

| Std.Errors | HC1 |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | |

According to the estimation results in Table 17.3, the estimated model is \[ \begin{align} \widehat{\text{TestScore}}_i=664.1-1.9\text{HiSTR}_i-18.2\text{HiEL}_i-3.5\left(\text{HiSTR}_i\times\text{HiEL}_i\right). \end{align} \]

The effect of \(\text{HiSTR}\) on the average test score when \(\text{HiEL} = 0\) is \(-1.9\) points, whereas the effect of \(\text{HiSTR}\) on the average test score when \(\text{HiEL} = 1\) is \(-1.9 - 3.5 = -5.4\) points. When the percentage of English learners is low (i.e., when \(\text{HiEL} = 0\)), a district with a high student-teacher ratio has, on average, 1.9 fewer points than a district with a low student-teacher ratio. On the other hand, when the percentage of English learners is high (i.e., when \(\text{HiEL} = 1\)), a district with a high student-teacher ratio has, on average, 5.4 fewer points than a district with a low student-teacher ratio.

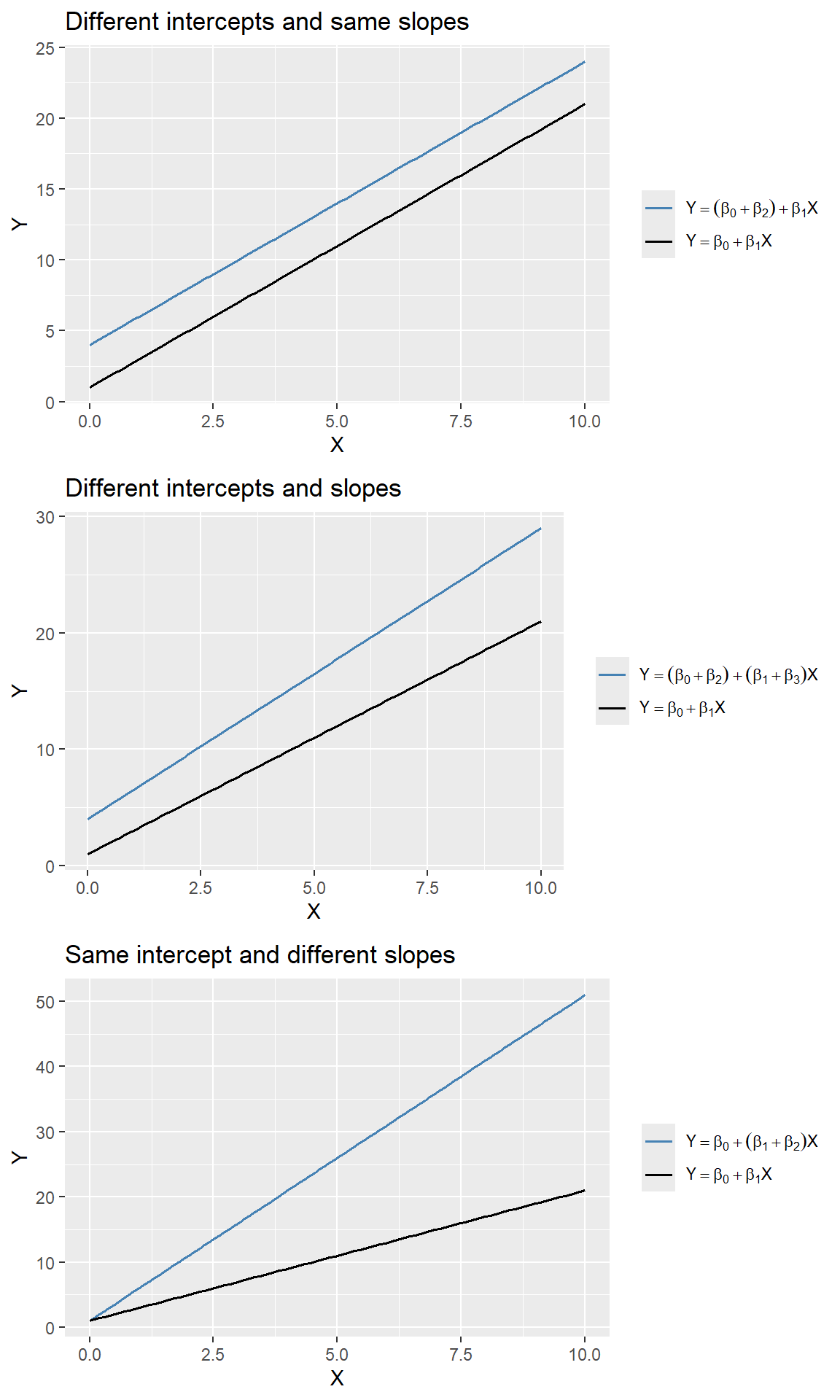

17.4.2 Interactions between a continuous and a binary variable

In this section, we consider regression models that include an interaction term formulated with a continuous and a binary variable. Consider the following model: \[ \begin{align} Y_i=\beta_0+\beta_1D_{i}+\beta_2X_{i}+u_i,\quad i=1,2,\dots,n, \end{align} \] where \(D\) is a binary and \(X\) is a continuous variable. In this model, the effect of \(X\) on \(Y\) is \(\beta_2\) and does not depend on the value of \(D\). However, the dummy variable \(D\) can affect the intercept of the regression function. When \(D=0\), the intercept is \(\beta_0\), while when \(D=1\), the intercept is \(\beta_0+\beta_1\). This case is illustrated in the first plot of Figure 17.7.

To allow the effect of \(X\) to depend on \(D\), we expend the model by including the interaction term \(D_i\times X_i\) as a regressor: \[ \begin{align} Y_i=\beta_0+\beta_1D_{i}+\beta_2X_{i}+\beta_3\left(D_i\times X_i\right)+u_i,\quad i=1,2,\cdots,n. \end{align} \] When \(D=0\), the population regression function is \(Y=\beta_0+\beta_2X\), while when \(D=1\), the population regression function is \(Y=(\beta_0+\beta_1)+(\beta_2+\beta_3)X\). In particular, the effect of one unit change in \(X\) on \(Y\) is \(\beta_2\) when \(D=0\) and \(\beta_2+\beta_3\) when \(D=1\). This case is illustrated in the second plot of Figure 17.7.

A third case arises when the slope of the regression function only depends on the value of the binary variable. Consider the following model: \[ \begin{align} Y_i=\beta_0+\beta_1X_{i}+\beta_2\left(D_i\times X_i\right)+u_i,\quad i=1,2,\cdots,n. \end{align} \] This case is illustrated in the third plot of Figure 17.7.

# Parameters

beta_0 <- 1

beta_1 <- 2

beta_2 <- 3

beta_3 <- 0.5

x <- seq(0, 10, length.out = 100)

# Data for each panel

# Panel 1: Different intercepts, same slopes

df1 <- data.frame(

x = rep(x, 2),

y = c((beta_0 + beta_2) + beta_1 * x,

beta_0 + beta_1 * x),

group = rep(c("g1", "g2"), each = length(x))

)

# Panel 2: Different intercepts and slopes

df2 <- data.frame(

x = rep(x, 2),

y = c((beta_0 + beta_2) + (beta_1 + beta_3) * x,

beta_0 + beta_1 * x),

group = rep(c("g1", "g2"), each = length(x))

)

# Panel 3: Same intercept, different slopes

df3 <- data.frame(

x = rep(x, 2),

y = c(beta_0 + (beta_1 + beta_2) * x,

beta_0 + beta_1 * x),

group = rep(c("g1", "g2"), each = length(x))

)

# Plot 1

p1 <- ggplot(df1, aes(x = x, y = y, color = group)) +

geom_line(linewidth = 0.7) +

labs(

title = "Different intercepts and same slopes",

x = expression(X),

y = expression(Y)

) +

scale_color_manual(

values = c("steelblue", "black"),

labels = c(

expression(Y == (beta[0]+beta[2]) + beta[1]*X),

expression(Y == beta[0] + beta[1]*X)

)

) +

theme(legend.title = element_blank())

# Plot 2

p2 <- ggplot(df2, aes(x = x, y = y, color = group)) +

geom_line(linewidth = 0.7) +

labs(

title = "Different intercepts and slopes",

x = expression(X),

y = expression(Y)

) +

scale_color_manual(

values = c("steelblue", "black"),

labels = c(

expression(Y == (beta[0]+beta[2]) + (beta[1]+beta[3])*X),

expression(Y == beta[0] + beta[1]*X)

)

) +

theme(legend.title = element_blank())

# Plot 3

p3 <- ggplot(df3, aes(x = x, y = y, color = group)) +

geom_line(linewidth = 0.7) +

labs(

title = "Same intercept and different slopes",

x = expression(X),

y = expression(Y)

) +

scale_color_manual(

values = c("steelblue", "black"),

labels = c(

expression(Y == beta[0] + (beta[1]+beta[2])*X),

expression(Y == beta[0] + beta[1]*X)

)

) +

theme(legend.title = element_blank())

# Arrange 2×2 layout (bottom-right empty)

grid.arrange(grobs = list(p1, p2, p3), ncol = 1)

In the test scores example, we consider the following regression model: \[ \begin{align} \text{TestScore}_i = \beta_0+\beta_1\text{STR}_i+\beta_2\text{HiEL}_i+\beta_3\left(\text{STR}_i\times\text{HiEL}_i\right)+u_i, \end{align} \] where \(\text{HiEL}_i\) is a binary variable that equals \(1\) if the percentage of English learners is 10% or more and 0 otherwise. The estimation results are presented in Table 17.4.

# Estimation

r2 <- lm(testscr ~ str * hiel, data = mydata)# Table output (HC1 robust SEs)

modelsummary(

list("Model" = r2),

vcov = "HC1",

gof_omit = "AIC|BIC|Log.Lik",

stars = TRUE,

fmt = 3

)| Model | |

|---|---|

| (Intercept) | 682.246*** |

| (11.868) | |

| str | -0.968 |

| (0.589) | |

| hiel | 5.639 |

| (19.515) | |

| str × hiel | -1.277 |

| (0.967) | |

| Num.Obs. | 420 |

| R2 | 0.310 |

| R2 Adj. | 0.305 |

| F | 63.673 |

| RMSE | 15.80 |

| Std.Errors | HC1 |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | |

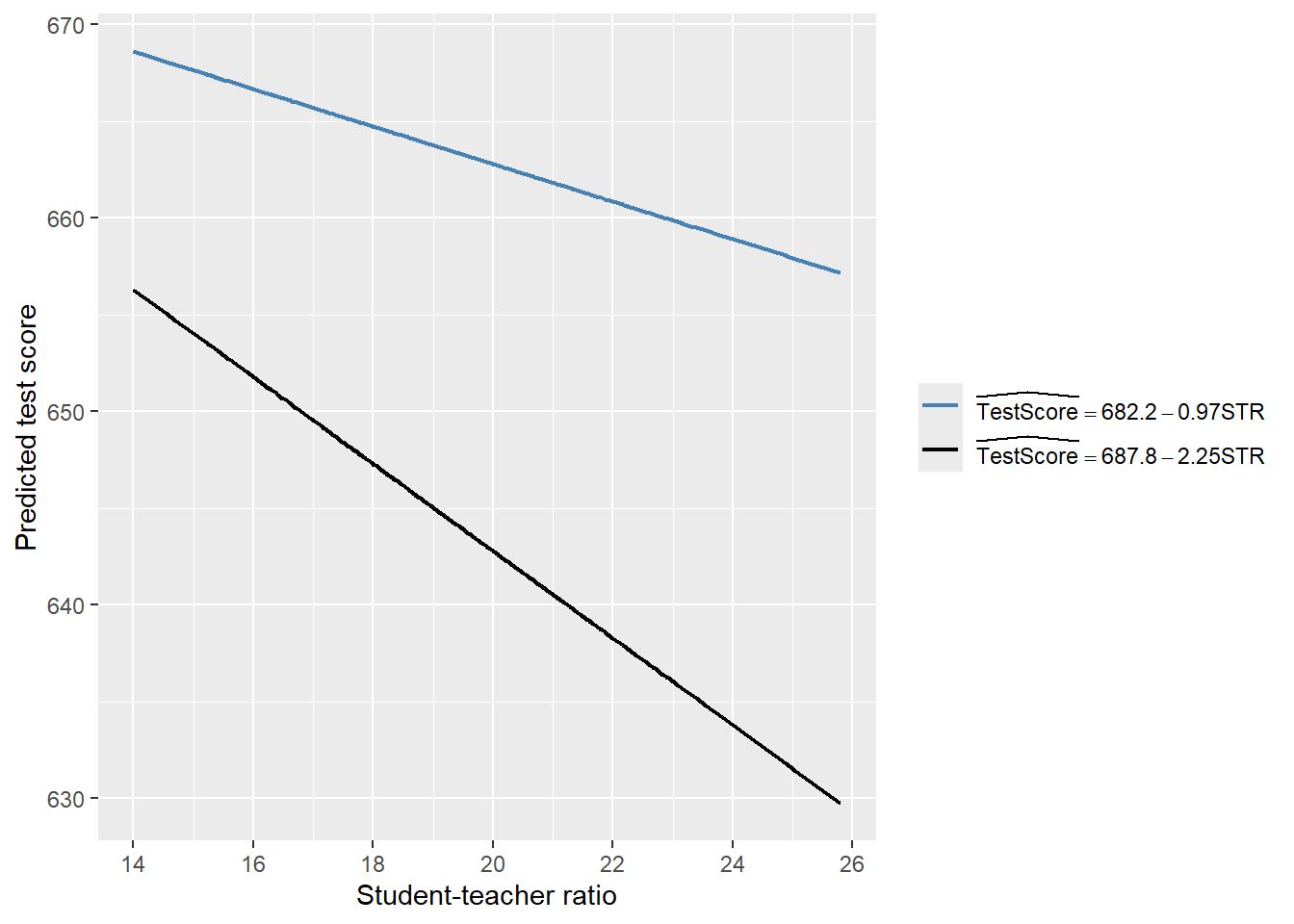

According to the estimation results presented in Table 17.4, the estimated model is \[ \begin{align} \widehat{\text{TestScore}}_i=682.2-0.97\text{STR}_i+5.6\text{HiEL}_i-1.28\left(\text{STR}_i\times\text{HiEL}_i\right). \end{align} \]

Thus, for districts with a low percentage of English learners (\(\text{HiEL}_i=0\)), the estimated regression line is \(\widehat{\text{TestScore}}_i=682.2-0.97\text{STR}_i\). In contrast, for districts with a high percentage of English learners (\(\text{HiEL}_i=1\)), the estimated regression line becomes \(\widehat{\text{TestScore}}_i=682.2+5.6-0.97\text{STR}_i-1.28\text{STR}_i=687.8-2.25\text{STR}_i\). These two regression lines are plotted in Figure 17.8. According to these estimates, when STR increases by \(1\), the test score declines on average by \(0.97\) points in districts with low percentage of English learners. On the other hand, when STR increases by \(1\) student, the test score declines on average by \(2.25\) points in districts with high percentage of English learners.

# Parameters

beta_0 <- 682.2

beta_1 <- -0.97

beta_2 <- 5.6

beta_3 <- -1.28

# Data

x <- mydata$str

df <- data.frame(

str = rep(x, 2),

y = c(

beta_0 + beta_1 * x,

(beta_0 + beta_2) + (beta_1 + beta_3) * x

),

model = rep(c("m1", "m2"), each = length(x))

)

# Plot

ggplot(df, aes(x = str, y = y, color = model)) +

geom_line(linewidth = 0.8) +

labs(

x = "Student-teacher ratio",

y = "Predicted test score"

) +

scale_color_manual(

values = c("steelblue", "black"),

labels = c(

expression(widehat(TestScore) == 682.2 - 0.97*STR),

expression(widehat(TestScore) == 687.8 - 2.25*STR)

)

) +

theme(

legend.title = element_blank()

)

Finally, we consider the following joint null and alternative hypotheses: \[ \begin{align*} H_0: \beta_2=0,\,\beta_3=0\quad\text{versus}\quad H_1:\text{At least one is nonzero}. \end{align*} \]

We use the heteroskedasticity-robust \(F\)-statistic to test this hypothesis. The \(F\)-statistic is around 89.9 with a \(p\)-value less than 0.05. Thus, we reject the null hypothesis.

linearHypothesis(

r2,

c("hiel = 0", "str:hiel = 0"),

vcov = vcovHC(r2, type = "HC1"),

test = "F"

)

Linear hypothesis test:

hiel = 0

str:hiel = 0

Model 1: restricted model

Model 2: testscr ~ str * hiel

Note: Coefficient covariance matrix supplied.

Res.Df Df F Pr(>F)

1 418

2 416 2 89.939 < 2.2e-16 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 117.4.3 Interactions between two continuous variables

In this section, we consider interection terms formulated with continuous variables. To allow the effect of the continuous variable \(X_1\) on \(Y\) to depend on another continuous variable \(X_2\), we include the interaction term \(X_1\times X_2\) as a regressor in th following model: \[ \begin{align} Y_i=\beta_0+\beta_1X_{1i}+\beta_2X_{2i}+\beta_3\left(X_{1i}\times X_{2i}\right)+u_i,\quad i=1,2,\dots,n. \end{align} \tag{17.2}\]

Assume that \(X_1\) changes to \(X_1+\Delta X_1\). Then, the change in \(Y\) can be computed as \[ \begin{align*} Y+\Delta Y=\beta_0+\beta_1(X_1+\Delta X_1)+\beta_2X_2+\beta_3\left((X_1+\Delta X_1)\times X_2\right)+u. \end{align*} \tag{17.3}\]

Subtracting Equation 17.2 from Equation 17.3, we have \[ \begin{align*} \Delta Y=\beta_1\Delta X_1+\beta_3X_2\times\Delta X_1\implies \frac{\Delta Y}{\Delta X_1}=\beta_1+\beta_3X_2. \end{align*} \] Thus, the effect of achange in \(X_1\) on \(Y\) depends on \(X_2\).

In the test scores example, we consider the following regression model:

\[ \begin{align}\label{eq12} \text{TestScore}_i=\beta_0+\beta_1\text{STR}_i+\beta_2\text{PctEL}_i+\beta_3\left(\text{STR}_i\times\text{PctEL}_i\right)+u_i. \end{align} \]

We use the following code chunk to estimate the model and present the results in Table 17.5.

# Estimation

r3 <- lm(testscr ~ str * el_pct, data = mydata)# Table output with HC1 robust SEs

modelsummary(

list("Model" = r3),

vcov = vcovHC(r3, type = "HC1"),

gof_omit = "AIC|BIC|Log.Lik",

stars = TRUE,

fmt = 3

)| Model | |

|---|---|

| (Intercept) | 686.339*** |

| (11.759) | |

| str | -1.117+ |

| (0.588) | |

| el_pct | -0.673+ |

| (0.374) | |

| str × el_pct | 0.001 |

| (0.019) | |

| Num.Obs. | 420 |

| R2 | 0.426 |

| R2 Adj. | 0.422 |

| RMSE | 14.41 |

| Std.Errors | Custom |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | |

The estimated model is \[ \begin{align} \widehat{\text{TestScore}}_i=683.3-1.12\text{STR}_i-0.67\text{PctEL}_i+0.0012\left(\text{STR}_i\times\text{PctEL}_i\right). \end{align} \]

The estimated effect of class size reduction is not constant because the size of the effect itself depends on PctEL: \[

\frac{\Delta\widehat{\text{TestScore}}}{\Delta\text{STR}}=-1.12 + 0.0012\text{PctEL}.

\]

Consider the effect of STR when PctEL is at its median value PctEL=8.85. Then, we have \[

\frac{\Delta\widehat{\text{TestScore}}}{\Delta\text{STR}} = -1.12 + 0.0012 \text{PctEL} = -1.12 + 0.0012 \times 8.85 = -1.11.

\]

Thus, when PctEL=8.85, the estimated effect of one-unit increase in STR is a decrease in average test scores by 1.11 points.

17.5 Nonlinear effects on test scores of the student–teacher ratio

In this section, we address three specific questions regarding the relationship between test scores and the student-teacher ratio:

- After controlling for the economic conditions of school districts, does the effect of the student-teacher ratio on test scores depend on the fraction of English learners?

- Is there a non-linear relationship between test scores and the student-teacher ratio?

- What is the quantitative effect of decreasing the student-teacher ratio by two on test scores after accounting for economic characteristics and nonlinearities?

To answer these questions, we also consider the following variables on the economic conditions of school districts:

el_pct: the percentage of English learning students.HiEL: a binary variable that equals 1 if the percentage of English learners is 10% or more, and 0 otherwise.meal_pct: the share of students that qualify for a subsidized or a free lunch at school.avginc: the average district income (in $1000’s).

We consider a total of seven models, some of which are nonlinear regression specifications:

\[ \begin{align*} &1.\, \text{TestScore}_i=\beta_0+\beta_1\text{STR}_i+\beta_2\text{PctEL}_i+{\color{steelblue}\beta_3\text{meal\_pct}_i}+u_i,\\ &2.\, \text{TestScore}_i=\beta_0+\beta_1\text{STR}_i+\beta_2\text{PctEL}_i+{\color{steelblue}\beta_3\text{meal\_pct}_i+\beta_4\ln\left(\text{avginc}_i\right)}+u_i,\\ &3.\, \text{TestScore}_i=\beta_0+\beta_1\text{STR}_i+\beta_2\text{HiEL}_i+\beta_3(\text{HiEL}_i\times\text{STR}_i)+u_i,\\ &4.\, \text{TestScore}_i=\beta_0+\beta_1\text{STR}_i+\beta_2\text{HiEL}_i+\beta_3(\text{HiEL}_i\times\text{STR}_i)+{\color{steelblue}\beta_4\text{meal\_pct}_i+\beta_5\ln\left(\text{avginc}_i\right)}+u_i,\\ &5.\, \text{TestScore}_i=\beta_0+\beta_1\text{STR}_i+\beta_2\text{STR}^2_i+\beta_3\text{STR}^3_i+\beta_4\text{HiEL}_i+{\color{steelblue}\beta_5\text{meal\_pct}_i+\beta_6\ln\left(\text{avginc}_i\right)}+u_i,\\ &6.\, \text{TestScore}_i=\beta_0+\beta_1\text{STR}_i+\beta_2\text{STR}^2_i+\beta_3\text{STR}^3_i+\beta_4\text{HiEL}_i+\beta_5(\text{HiEL}_i\times\text{STR}_i)\\ &\quad\quad\quad\quad\quad\quad+\beta_6(\text{HiEL}_i\times\text{STR}^2_i)+\beta_7(\text{HiEL}_i\times\text{STR}^3_i)+{\color{steelblue}\beta_8\text{meal\_pct}_i+\beta_9\ln\left(\text{avginc}_i\right)}+u_i,\\ &7.\, \text{TestScore}_i=\beta_0+\beta_1\text{STR}_i+\beta_2\text{STR}^2_i+\beta_3\text{STR}^3_i+\beta_4\text{PctEL}_i+{\color{steelblue}\beta_5\text{meal\_pct}_i+\beta_6\ln\left(\text{avginc}_i\right)}+u_i.\\ \end{align*} \]

The following code chunks can be used to produce the estimation results given in Table 17.6.

# Model 1

r1 <- lm(testscr ~ str + el_pct + meal_pct, data = mydata)

# Model 2

r2 <- lm(testscr ~ str + el_pct + meal_pct + log(avginc), data = mydata)

# Model 3

r3 <- lm(testscr ~ str * hiel, data = mydata)

# Model 4

r4 <- lm(testscr ~ str * hiel + meal_pct + log(avginc), data = mydata)

# Model 5 (HC3 robust SEs in Python)

r5 <- lm(testscr ~ str + I(str^2) + I(str^3) +

hiel + meal_pct + log(avginc),

data = mydata)

# Model 6

r6 <- lm(testscr ~ (str + I(str^2) + I(str^3)) * hiel +

meal_pct + log(avginc),

data = mydata)

# Model 7

r7 <- lm(testscr ~ str + I(str^2) + I(str^3) +

el_pct + meal_pct + log(avginc),

data = mydata)modelsummary(

list(

"Model 1" = r1,

"Model 2" = r2,

"Model 3" = r3,

"Model 4" = r4,

"Model 5" = r5,

"Model 6" = r6,

"Model 7" = r7

),

vcov = "HC1",

gof_omit = "AIC|BIC|Log.Lik",

stars = TRUE,

fmt = 3

)| Model 1 | Model 2 | Model 3 | Model 4 | Model 5 | Model 6 | Model 7 | |

|---|---|---|---|---|---|---|---|

| (Intercept) | 700.150*** | 658.552*** | 682.246*** | 653.666*** | 252.051 | 122.354 | 244.809 |

| (5.568) | (8.642) | (11.868) | (9.869) | (163.634) | (185.519) | (165.722) | |

| str | -0.998*** | -0.734** | -0.968 | -0.531 | 64.339** | 83.701** | 65.285* |

| (0.270) | (0.257) | (0.589) | (0.342) | (24.861) | (28.497) | (25.259) | |

| el_pct | -0.122*** | -0.176*** | -0.166*** | ||||

| (0.033) | (0.034) | (0.034) | |||||

| meal_pct | -0.547*** | -0.398*** | -0.411*** | -0.420*** | -0.418*** | -0.402*** | |

| (0.024) | (0.033) | (0.029) | (0.029) | (0.029) | (0.033) | ||

| log(avginc) | 11.569*** | 12.124*** | 11.748*** | 11.800*** | 11.509*** | ||

| (1.819) | (1.798) | (1.771) | (1.778) | (1.806) | |||

| hiel | 5.639 | 5.498 | -5.474*** | 816.075* | |||

| (19.515) | (9.795) | (1.034) | (327.674) | ||||

| str × hiel | -1.277 | -0.578 | -123.282* | ||||

| (0.967) | (0.496) | (50.213) | |||||

| I(str^2) | -3.424** | -4.381** | -3.466** | ||||

| (1.250) | (1.441) | (1.271) | |||||

| I(str^3) | 0.059** | 0.075** | 0.060** | ||||

| (0.021) | (0.024) | (0.021) | |||||

| I(str^2) × hiel | 6.121* | ||||||

| (2.542) | |||||||

| I(str^3) × hiel | -0.101* | ||||||

| (0.043) | |||||||

| Num.Obs. | 420 | 420 | 420 | 420 | 420 | 420 | 420 |

| R2 | 0.775 | 0.796 | 0.310 | 0.797 | 0.801 | 0.803 | 0.801 |

| R2 Adj. | 0.773 | 0.794 | 0.305 | 0.795 | 0.798 | 0.799 | 0.798 |

| F | 453.479 | 417.196 | 63.673 | 335.846 | 281.141 | 199.822 | 280.808 |

| RMSE | 9.04 | 8.59 | 15.80 | 8.57 | 8.49 | 8.45 | 8.50 |

| Std.Errors | HC1 | HC1 | HC1 | HC1 | HC1 | HC1 | HC1 |

| + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001 | |||||||

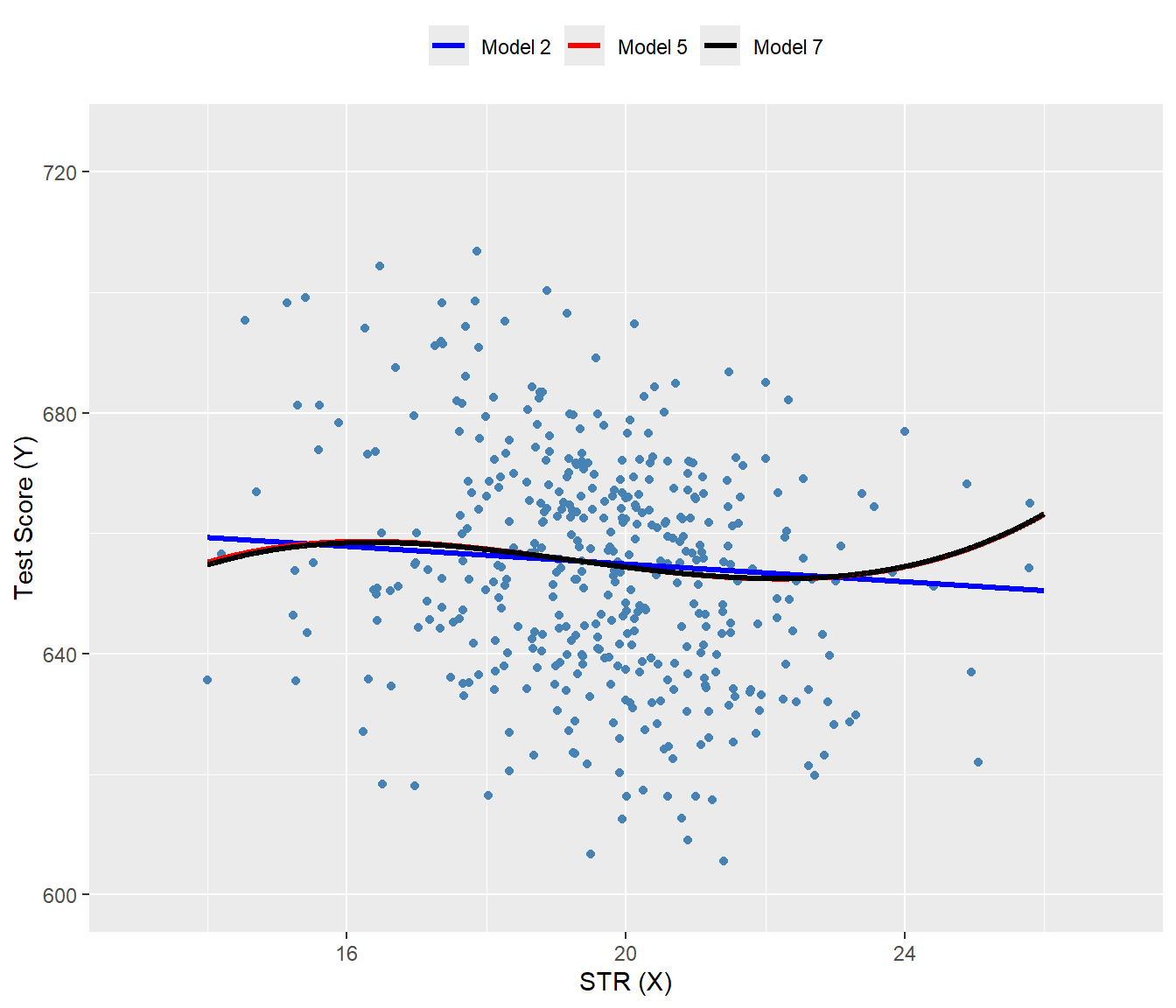

Starting with the results for Model 1, we see that the estimated coefficient on STR is statistically significant. The results for Model 2 show that adding ln(avginc) reduces the estimated coefficient on STR to -0.734, though this estimate remains statistically significant. In Models 3 and 4, we aim to assess the effect of allowing for an interaction term, STR × HiEL, both without and with economic control variables. In both models, we observe that the coefficient on the interaction term and the coefficient on HiEL are not statistically significant. Thus, even with economic controls, we cannot reject the null hypothesis that the effect of the student-teacher ratio on test scores is the same for districts with high and low shares of English learners.

Model 5 includes a cubic term for the student-teacher ratio and omits the interaction STR × HiEL. The results indicate that there is a nonlinear effect of STR on test scores. We can verify this non-linear relationship by testing the joint null hypothesis: \(H_0:\beta_2=0,\beta_3=0\). The F-statistic is around 5 with a \(p\)-value less than 0.05. Thus, we reject the null hypothesis at the \(5\%\) significance level.

linearHypothesis(

r5,

c("I(str^2) = 0", "I(str^3) = 0"),

vcov = vcovHC(r5, type = "HC3"),

test = "F"

)

Linear hypothesis test:

I(str^2) = 0

I(str^3) = 0

Model 1: restricted model

Model 2: testscr ~ str + I(str^2) + I(str^3) + hiel + meal_pct + log(avginc)

Note: Coefficient covariance matrix supplied.

Res.Df Df F Pr(>F)

1 415

2 413 2 5.0205 0.00701 **

---

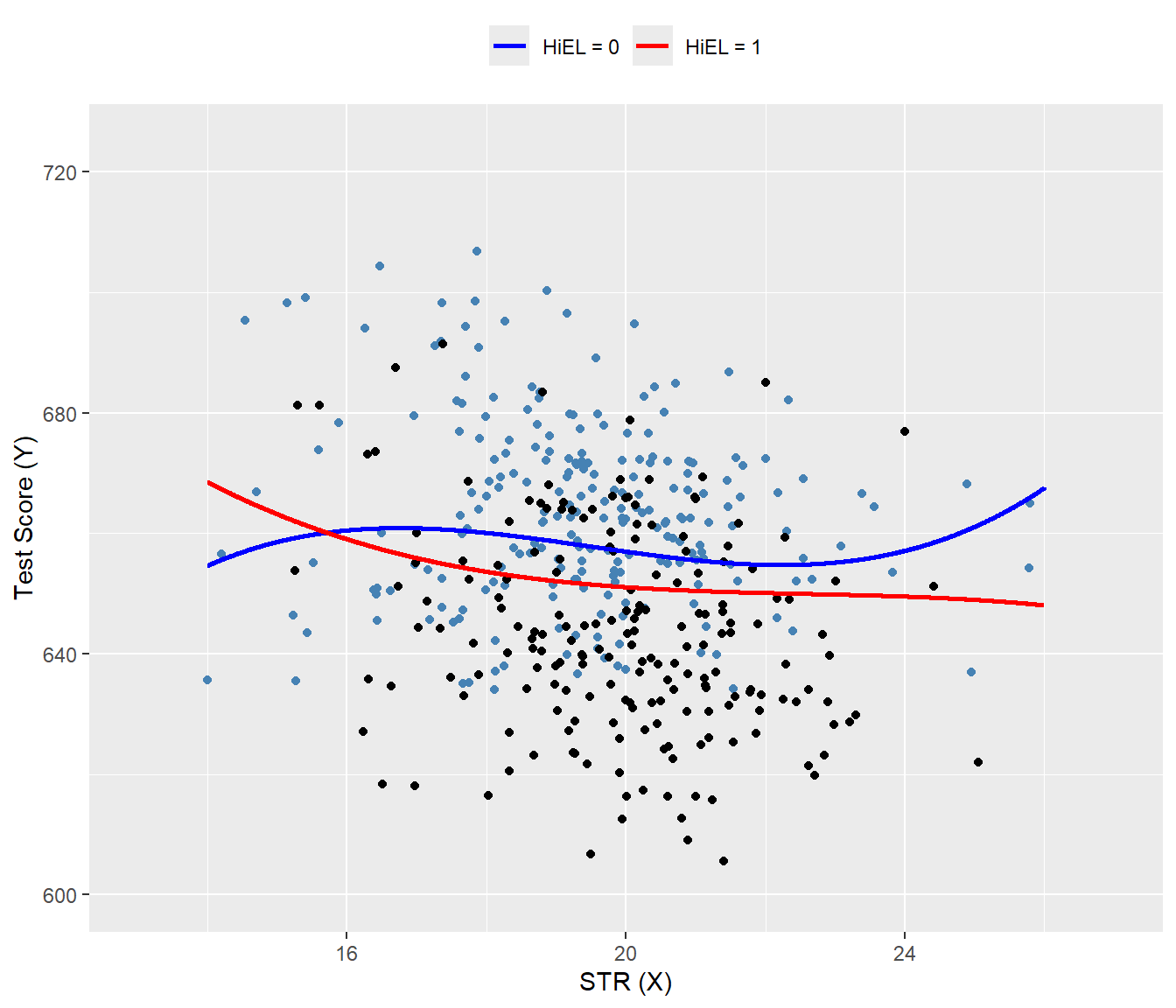

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1Model 6 further examines whether the effect of STR depends not just on its value but also on the fraction of English learners. All individual t-statistics indicate that the estimated coefficients on HiEL and its interaction terms are statistically significant. We can further check this by using a robust \(F\)-test for the joint null hypothesis: \(H_0:\beta_5=0,\beta_6=0,\beta_7=0\). The F-statistic is around 2.69 with a \(p\)-value around 0.046. We reject the null hypothesis at the 5% significance level.

linearHypothesis(

r6,

c(

"str:hiel = 0",

"I(str^2):hiel = 0",

"I(str^3):hiel = 0"

),

vcov = vcovHC(r6, type = "HC1"),

test = "F"

)

Linear hypothesis test:

str:hiel = 0

I(str^2):hiel = 0

I(str^3):hiel = 0

Model 1: restricted model

Model 2: testscr ~ (str + I(str^2) + I(str^3)) * hiel + meal_pct + log(avginc)

Note: Coefficient covariance matrix supplied.

Res.Df Df F Pr(>F)

1 413

2 410 3 2.6902 0.04597 *

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1Model 7 is a modification of Model 5, in which the continuous variable PctEL is used instead of the binary variable HiEL to control for the effect of the percentage of English learners. The coefficients on the other regressors do not change substantially when this modification is made, indicating that the results in Model 5 are not sensitive to whether we use PctEL or HiEL.

The nonlinear specifications in Table 17.6 are most easily interpreted graphically. In Figure 17.9, we present plots of the estimated regression functions for Models 2, 5, and 7, along with a scatterplot of testscr and str. For each curve, we compute predicted values by setting each independent variable, other than str, to its sample average. These estimated regression functions show the predicted test scores as a function of str, with other independent variables fixed at their sample averages. The figure illustrates that the estimated regression functions are all quite similar to one another.

mydata$hiel <- as.integer(mydata$hiel)

# New data for prediction

str_grid <- seq(14, 26, by = 0.01)

new_data <- data.frame(

str = str_grid,

el_pct = mean(mydata$el_pct),

meal_pct = mean(mydata$meal_pct),

avginc = mean(mydata$avginc),

hiel = mean(mydata$hiel)

)

# Predictions

new_data$r2_fitted <- predict(r2, newdata = new_data)

new_data$r5_fitted <- predict(r5, newdata = new_data)

new_data$r7_fitted <- predict(r7, newdata = new_data)

# Plot

ggplot() +

geom_point(

data = mydata,

aes(x = str, y = testscr),

color = "steelblue",

size = 1.5

) +

geom_line(

data = new_data,

aes(x = str, y = r2_fitted, color = "Model 2"),

linewidth = 1.1

) +

geom_line(

data = new_data,

aes(x = str, y = r5_fitted, color = "Model 5"),

linewidth = 1.1

) +

geom_line(

data = new_data,

aes(x = str, y = r7_fitted, color = "Model 7"),

linewidth = 1.1

) +

scale_color_manual(

values = c(

"Model 2" = "blue",

"Model 5" = "red",

"Model 7" = "black"

)

) +

coord_cartesian(xlim = c(13, 27), ylim = c(600, 725)) +

labs(

x = "STR (X)",

y = "Test Score (Y)",

color = NULL

) +

theme(

legend.position = "top"

)

In Figure 17.10, we present the estimated regression functions for Model 6 when HiEL = 0 and HiEL = 1. Although the two regression functions appear different, the slopes are nearly identical when str take values between 17 and 23. Since this range encompasses nearly all observations, we can reasonably conclude that nonlinear interactions between the fraction of English learners and the student-teacher ratio can be disregarded.

# Sequence of STR values

str_grid <- seq(14, 26, by = 0.01)

# New data for predictions

new_data_hiel_0 <- data.frame(

str = str_grid,

el_pct = mean(mydata$el_pct),

meal_pct = mean(mydata$meal_pct),

avginc = mean(mydata$avginc),

hiel = 0

)

new_data_hiel_1 <- new_data_hiel_0

new_data_hiel_1$hiel <- 1

# Predictions

r6_fitted_hiel_0 <- predict(r6, newdata = new_data_hiel_0)

r6_fitted_hiel_1 <- predict(r6, newdata = new_data_hiel_1)

# Combine predictions with new_data for plotting

new_data_hiel_0$pred <- r6_fitted_hiel_0

new_data_hiel_1$pred <- r6_fitted_hiel_1

# Plot

ggplot() +

# Scatter points

geom_point(

data = subset(mydata, hiel == 0),

aes(x = str, y = testscr),

color = "steelblue",

size = 1.5

) +

geom_point(

data = subset(mydata, hiel == 1),

aes(x = str, y = testscr),

color = "black",

size = 1.5

) +

# Fitted lines

geom_line(

data = new_data_hiel_0,

aes(x = str, y = pred, color = "HiEL = 0"),

linewidth = 1

) +

geom_line(

data = new_data_hiel_1,

aes(x = str, y = pred, color = "HiEL = 1"),

linewidth = 1

) +

# Colors and labels

scale_color_manual(

values = c("HiEL = 0" = "blue", "HiEL = 1" = "red")

) +

coord_cartesian(xlim = c(13, 27), ylim = c(600, 725)) +

labs(

x = "STR (X)",

y = "Test Score (Y)",

color = NULL

) +

theme(

legend.position = "top"

)

Finally, following Stock and Watson (2020), we can summarize the main findings of the empirical application as follows:

- The results for Models 3, 4, and 6 show that, after controlling for economic background, there is weak statistical evidence that the effect of a change in

strdepends on whether there are many or few English learners in the district. - The results for Models 5 and 6 show that after controlling for economic background, there is evidence of a nonlinear relationship between test scores and the student-teacher ratio.

- Acoording to estimated Model 2, if

strdeclines by two students, test scores are expected to increase by \(-0.73\times (-2)=1.46\) points. Since the model is linear, this effect is independent of the class size. - Using the estimation results for Model 5 and assuming that

str=20, a decrease in str tostr = 18is expected to increase test scores by 3.3 points, as shown below: \[ \begin{align*} &\Delta\widehat{\text{TestScore}}=64.33\times 18+18^2\times(-3.424)+18^3\times(0.059)\\ &-\left(64.33\times 20+20^2\times(-3.424)+20^3\times(0.059)\right)\approx3.3. \end{align*} \]